Once again our gracious sponsor Enea hosted an embedded hacking day arranged through foss-sthlm – the third time in three years at the same place with the same host. Fifty something happy hackers brought their boards, devices, screens, laptops and way too many cables to the place on a Saturday to spend it in the name of embedded systems. With no admission fee at all. Just bring your stuff, your skills and enjoy the day.

This happened on May 24th and on the outside of the windows we could identify one of the warmest and nicest spring/summer days so far this year in Stockholm. But hey, if you want to get some fun hacks done we mustn’t let those real-world things hamper us!

All attendees were given a t-shirt and then found themselves a spot somewhere in the crowd, and the socializing and hacking could start. I got the pleasure of loudly interrupting everyone once in a while to say welcome or point out that a talk was about to begin…

We also collected random fun hardware pieces donated to us by various people for a hardware raffle. More about that further down.

Talk

To spice up the day of hacking, we offered some talks. First out was…

Bluetooth and Low Power radio by Mats Karlsson and during this session we got to learn a bunch about hacking extremely low power devices and doing radio for them with Arduinos and more.

We hadn’t much more than started but the clock showed lunch time and we were served lunch!

Contest

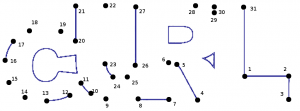

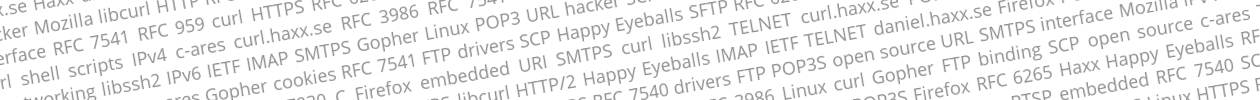

Readers of my blog and previous attendees of any of the embedded hacking days I’ve been organizing should be familiar with the embedded Linux contests I’ve made.

Lately I’ve added a new twist to my setup and I tested it previously when I visited foss-gbg and ran a contest there.

Basically it is a complicated maze/track that you walk through by answering questions, and when you reach the goal you have collected a set of words along the way. Those words should then be rearranged to form a question and that final question should be answered as fast as possible.

Since I already blogged and publicized my previous “Parallell Spaghetti Decode” contest I of course had a new map this time and I altered the set of questions a bit as well, even if participants in this one could find similarities with the previous one. This kind of contest is a bit complicated, so I hand out the play field and the questions on two pieces of paper to each participant.

After only a little over seven minutes we had a winner, Yann Vernier, who could walk home with a brand new Nexus 7 32GB. The prize was, as so much else this day, donated by Enea.

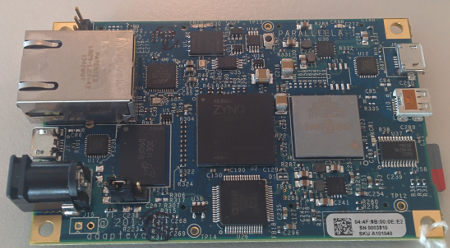

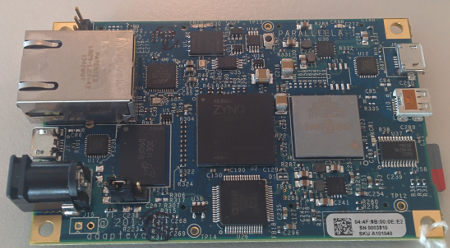

Hardware Raffle

I donated my Arduino Nano (never even unpacked) and a Raspberry Pi with SD-card that I never use. Enea chipped in with a Parallella board and we got an RFduino and a Texas Instruments Stellaris ARM-based little round robot. The Parallella board was by far the most popular device (as expected really) with the Stellaris board as second. Only 12 people signed up for the Rpi…

Everyone who was interested in one of the 5 devices signed up on a list, marking each thing of interest.

We then put little pieces of paper with numbers on them in a big bowl and I got to draw 5 numbers (representing different individuals) who then won the devices. It of course turned out we did it in a complicated way, and I made some minor mistakes that added to the fun. In the end I believe it was at least a fair process that didn’t favor or weight anyone in any particular way. I believe we got 5 happy winners.

Workshop

“How to select hardware” was the name of the workshop I led. Basically it was a one-hour group discussion about how to find, buy, order and deal with hardware for your (hobby) project, and what to avoid. We discussed brands, companies, sites, buying from China or eBay, reading reviews, writing reviews, and how people go about buying things when building stuff of their own.

I think we had some good talks and lots of people shared their experiences, stories and some horror stories. Hopefully we all brought a little something with us back from that…

After that we refilled our coffee mugs and indulged in the huge and tasty muffins that magically had appeared.

Something we had learned from previous events was to not “pack” too many talks and other things during the day but to also allow everyone to really spend time on getting things done and to just stroll around and talk with others.

Instead of a third slot for a talk or another workshop we had a little wishlist in our wiki for the day, and as a result I managed to bully Björn Stenberg into the room, where he described his automated system for his warming cupboard (värmeskåp), which basically is a place to dry clothes. Using damp sensors, Arduinos and more, Björn has perfected his cupboard’s ability to dry clothes, and to shut off and tell him when they are dry instead of wasting energy by continuing to heat.

With that we were in the final sprint for the day. The last commits were made. The final bragging comments described blinking LEDs. Cables were detached. Bags were filled with electronics. People started to drop off.

Only a few brave souls stayed to the very end. And they celebrated in style.

I had a great day, and I received several positive comments and feedback from participants. I hope we’ll run a similar event again soon. It’d be great to keep this an annual tradition.

The pictures in this blog post are taken by: me, Jon Aldama, Annica Spangholt, Magnus Sandberg and Jämtlands bryggerier. Thanks! More pictures can also be found in Enea’s blog posting and in the Google+ event.

Thanks also to Jon, Annica and Sofie for the hard hosting work during the event. It made everything run smoothly and without any bumps!
