Everyone needs something fun to do in their spare time. And digging deep into curl internals is mighty fun!

One of the things I do in curl every now and then is to run a few typical command lines and count how much memory is allocated and how many memory allocation calls are made. This is good project hygiene and a basic check that we didn’t accidentally slip a malloc/free sequence into the transfer path or something.

We have extensive memory checks for leaks etc in the test suite so I’m not worried about that. Those things we detect and fix immediately, even when the leaks occur in error paths – thanks to our fancy “torture tests” that do error injections.

The amount of memory needed or number of mallocs used is more of a boiling frog problem. We add one now, then another months later and a third the following year. Each added malloc call is motivated within the scope of that particular change. But taken all together, does the pattern of memory use make sense? Can we make it better?

How?

Now this is easy because when we build curl debug enabled, we have a fancy logging system (we call it memdebug) that logs all calls to “fallible” system functions, so after the test has completed we can easily grep for them and count. It also logs the exact source file and line number of each call.

cd tests

./runtests -n [number]

egrep -c 'alloc|strdup' log/memdump

Let’s start

Let me start out with a look at the history and how many allocations (calloc, malloc, realloc or strdup) we do to complete test 103. The reason I picked 103 is somewhat random, but I wanted to look at FTP and this test happens to do an “active” transfer of content and issues a total of 10 FTP commands in the process.

The reason I decided to take a closer look at FTP this time is that I fixed an issue in the main ftp source code file the other day, and that made me remember the Curl_pp_sendf() function we have. It is the function that sends FTP commands (and IMAP, SMTP and POP3 commands too). Internally we refer to this family as the “ping pong protocols” because of their command-response nature, which is why the function has “pp” in its name.

When I reviewed the function now with my malloc police hat on, I noticed how it made two calls to aprintf(), our printf version that returns a freshly malloced area, which can even cause several reallocs in the worst case. This meant at least two mallocs per issued command. That’s a bit unnecessary, isn’t it?

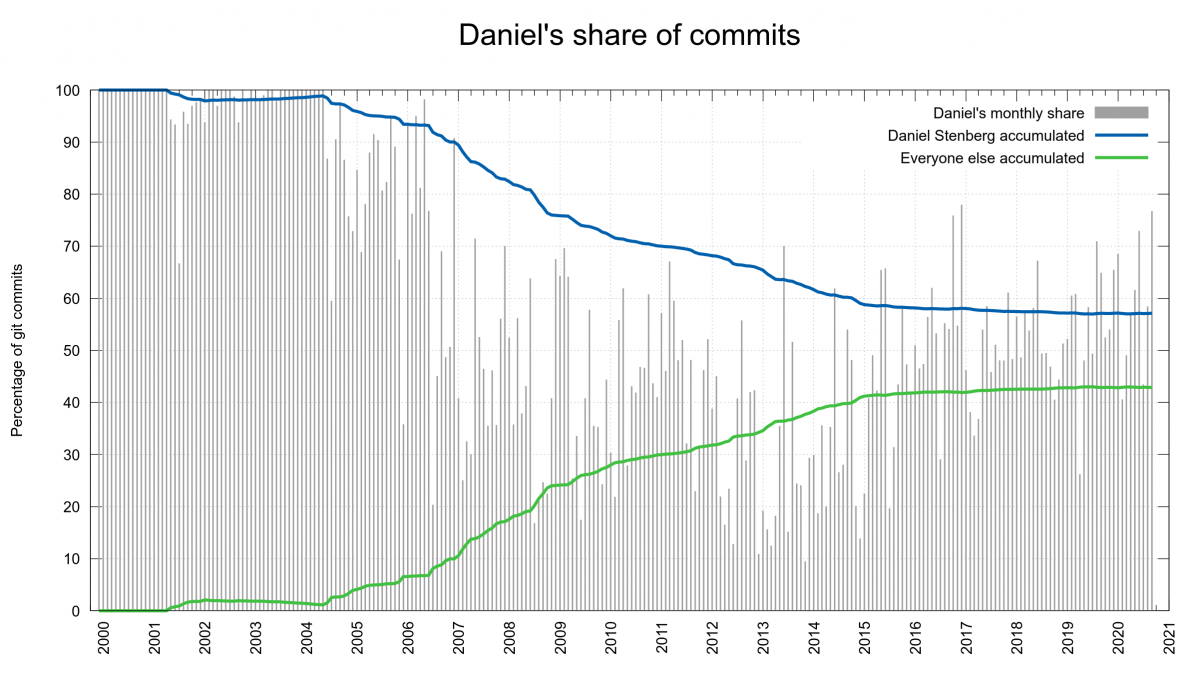

What about a few older versions

I picked a few random older versions, checked them out from git, built them and counted the number of allocs they did for test 103:

7.52.1: 141

7.68.0: 134

7.70.0: 137

7.72.0: 123

It’s been up but it has gone down too. Nothing alarming. Is that a good amount or a bad amount? We shall see…

Cleanup step one

The function gets printf style arguments and sends them to the server. The sent command also needs CRLF appended to it. It was easy to make sure the CRLF appending wouldn’t need an extra malloc; it was just sloppy of us to have that there in the first place. Instead of mallocing a new printf format string with CRLF appended, the function could build it in a stack-based buffer. I landed that as a first commit.

This trimmed off 10 mallocs for test 103.

Step two, bump it up a notch

The remaining malloc allocated the memory block for protocol content to send. It can be up to several kilobytes but is usually just a few bytes. It gets allocated in case it needs to be held on to if the entire thing cannot be sent off over the wire immediately. Remember, curl is non-blocking internally so it cannot just sit waiting for the data to get transferred.

I switched the malloc’ed buffer to instead use a ‘dynbuf’. That’s our internal “dynamic buffer” system that was introduced earlier this year and that we’re gradually switching all internals over to use instead of doing “custom” buffer management in various places. The internal API for dynbuf is documented here.

The internal API Curl_dyn_addf() adds a printf()-style string at the end of a “dynbuf”, and it seemed perfectly suitable to use here. I only needed to provide a vprintf() alternative since the printf() format was already received by Curl_pp_sendf()… I created Curl_dyn_vaddf() for this.

This single dynbuf is kept for the entire transfer so that it can be reused for subsequent commands and grow only if needed. Usually the initial 32-byte malloc should be sufficient for all commands.

Not good enough

It didn’t help!

Counting the mallocs showed me with brutal clarity that my job wasn’t done there. Having dug this deep already I wasn’t ready to give this up just yet…

Why? Because Curl_dyn_addf() was still doing a separate alloc of the printf string that it then appended to the dynamic buffer. But okay, having our own printf() implementation in the code has its perks.

Add a printf() string without extra malloc

Back in May 2020 when I introduced this dynbuf thing, I converted the aprintf() code over to use dynbuf to truly unify our use of dynamically growing buffers. That was a main point with it after all.

As all the separate individual pieces I needed for this next step were already there, all I had to do was to add a new entry point to the printf() code that would accept a dynbuf as input and write directly into that (and grow it if needed), and then use that new function (Curl_dyn_vprintf) from Curl_dyn_addf().

Phew. Now let’s see what we get…

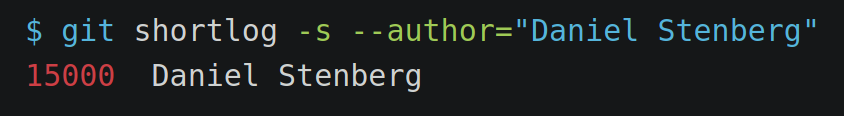

There are 10 FTP commands that previously did 2 mallocs each: 20 mallocs were spent in this function when test 103 was executed. Now we are down to the ideal case of one alloc in there for the entire transfer.

Test 103 after polish

The code right now in master (to eventually get released as 7.73.0 in a few weeks) shows a total of 104 allocations. That is down from 123 in the previous release, which not entirely surprisingly is 19 fewer and thus perfectly matches the logic above.

All tests and CI ran fine. I merged it. This is a change that benefits all transfers done with any of the “ping pong protocols”. And it also makes the code easier to understand!

Compared to curl 7.52.1, this is a 26% reduction in the number of allocations, which is pretty good, and even compared to 7.72.0 it is still a 15% reduction.

More?

There is always more to do, but there’s also a question of diminishing returns. I will continue to look at curl’s memory use going forward too and make sure everything is motivated and reasonable. At least every once in a while.

I have some additional ideas for further improvements in the memory use area to look into. We’ll see if they pan out…

Don’t count on me to blog about every such finding with this level of detail! If you want to make sure you don’t miss any of these fine-tunes in the future, follow the curl GitHub repo.

Credits

Image by Julio César Velásquez Mejía from Pixabay