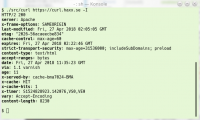

(download it from curl.haxx.se of course!)

Whatever we do and whatever we try, no matter how hard we try to test, debug, review and do CI builds it does not change the eternal truth:

Nothing gets tested properly until released.

We worked hard on fixing bugs in the weeks before we shipped curl 7.65.0. We really did. Yet, several annoying glitches managed to creep in, remain unnoticed and cause problems to users when they first eagerly tried out the new release. Those were glitches that none in the development team had experienced or discovered but only took a few hours for users to detect and report.

The initial bad sign was that it didn’t even take a full hour from the release announcement until the first bug on 7.65.0 was reported. And it didn’t stop with that issue. We obviously had a whole handful of small bugs that caused friction to users who just wanted to get the latest curl to play with. The bugs were significant and notable enough that I quickly decided we should patch them up and release an update that has them fixed: 7.65.1. So here it is!

This patch release even got delayed. Just the day before the release we started seeing weird crashes in one of the CI builds on macOS and they still remained on the morning of the release. That made me take the unusual call to postpone the release until we better understood what was going on. That’s the reason why this comes 14 days after 7.65.0 instead of a mere 7 days.

Numbers

the 182nd release

0 changes

14 days (total: 7,747)

35 bug fixes (total: 5,183)

61 commits (total: 24,387)

0 new public libcurl function (total: 80)

0 new curl_easy_setopt() option (total: 267)

0 new curl command line option (total: 221)

27 contributors, 12 new (total: 1,965)

16 authors, 6 new (total: 687)

0 security fixes (total: 89)

0 USD paid in Bug Bounties

Bug-fixes

Let me highlight some of the fixes that went this during this very brief release cycle.

build correctly with OpenSSL without MD4

This was the initial bug report, reported within an hour from the release announcement of 7.65.0. If you built and installed OpenSSL with MD4 support disabled, building curl with that library failed. This was a regression since curl already supported this and due to us not having this build combination in our CI builds we missed it… Now it should work again!

CURLOPT_LOW_SPEED_* repaired

In my work that introduces more ways to disable specific features in curl so that tiny-curl would be as small as possible, I accidentally broke this feature (two libcurl options that allow a user to stop a transfer that goes below a certain transfer speed threshold during a given time). I had added a way to disable the internal progress meter functionality, but obviously not done a good enough job!

The breakage proved we don’t have proper tests for this functionality. I reverted the commit immediately to bring back the feature, and when now I go back to fix this and land a better fix soon, I now also know that I need to add tests to verify.

multi: track users of a socket better

Not too long ago I found and fixed a pretty serious flaw in curl’s HTTP/2 code which made it deal with multiplexed transfers over the same single connection in a manner that was far from ideal. When fixed, it made curl do HTTP/2 better in some circumstances.

This improvement ended up proving itself to have a few flaws. Especially when the connection is closed when multiple streams are done over it. This bug-fix now makes curl closing down such transfers in a better and cleaner way with fewer “loose ends”.

parse_proxy: use the IPv6 zone id if given

One more zone id fix that I didn’t get around to land in 7.65.0 has now landed: specifying a proxy with a URL that includes an IPv6 numerical address and a zone id – now works.

connection “bundles” on same host but different ports

Internally, libcurl collects connections to a host + port combination in a “bundle” (that’s just a term used for this concept internally). It does this to count number of connections to this combination and enforce limits etc. It is only used a bit for controlling when multiplexing can be done or not on this host.

Due to a regression, probably added already back in 7.62.0, this logic always used the default port for the protocol instead of the actual port number used in the given URL! An application that for example did parallel HTTP transfers to the hostname “example.org” on both port 80 and port 81, and used HTTP/1 on one of the ports and HTTP/2 on the other would be totally mixed up by curl and cause transfer failures.

But not anymore!

Coming up

This patch release was not planned. We will give this release a few days to stew and evaluate the situation. If we keep getting small or big bugs reported, we might not open the feature window at all in this release cycle and instead just fix bugs.

Ideally however, we’ve now fixed the most pressing ones and we can now move on and follow our regular development process. Even if we have, the feature window for next release will be open during a shorter period than normal.

This was actually a whole set of small problems that together made the new

This was actually a whole set of small problems that together made the new  Old readers of this blog may remember my ramblings on

Old readers of this blog may remember my ramblings on