During the autumn 1996 I took my first swim in the ocean known as HTTP. Twenty years ago now.

I had previously worked with writing an IRC bot in C, and IRC is a pretty simple text based protocol over TCP so I could use some experiences from that when I started to look into HTTP. That IRC bot was my first real application distributed to the world that was using TCP/IP. It was portable to most unixes and Amiga and it was open source.

1996 was the year the movie Independence Day premiered and the single hit song that plagued the world more than others that year was called Macarena. AOL, Webcrawler and Netscape were the most popular websites on the Internet. There were less than 300,000 web sites on the Internet (compared to some 900 million today).

I decided I should spice up the bot and make it offer a currency exchange rate service so that people who were chatting could ask the bot what 200 SEK is when converted to USD or what 50 AUD might be in DEM. – Right, there was no Euro currency yet back then!

I simply had to fetch the currency rates at a regular interval and keep them in the same server that ran the bot. I just needed a little tool to download the rates over HTTP. How hard can that be? I googled around (this was before Google existed so that was not the search engine I could use!) and found a tool named ‘httpget’ that made pretty much what I wanted. It truly was tiny – a few hundred  lines of code.

lines of code.

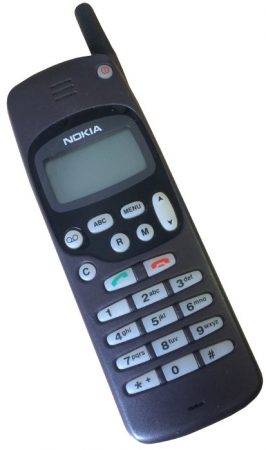

I don’t have an exact date saved or recorded for when this happened, only the general time frame. You know, we had no smart phones, no Google calendar and no digital cameras. I sported my first mobile phone back then, the sexy Nokia 1610 – viewed in the picture on the right here.

The HTTP/1.0 RFC had just recently came out – which was the first ever real spec published for HTTP. RFC 1945 was published in May 1996, but I was blissfully unaware of the youth of the standard and I plunged into my little project. This was the first published HTTP spec and it says:

HTTP has been in use by the World-Wide Web global information initiative since 1990. This specification reflects common usage of the protocol referred too as "HTTP/1.0". This specification describes the features that seem to be consistently implemented in most HTTP/1.0 clients and servers.

Many years after that point in time, I have learned that already at this time when I first searched for a HTTP tool to use, wget already existed. I can’t recall that I found that in my searches, and if I had found it maybe history would’ve made a different turn for me. Or maybe I found it and discarded for a reason I can’t remember now.

I wasn’t the original author of httpget; Rafael Sagula was. But I started contributing fixes and changes and soon I was the maintainer of it. Unfortunately I’ve lost my emails and source code history from those earliest years so I cannot easily show my first steps. Even the oldest changelogs show that we very soon got help and contributions from users.

The earliest saved code archive I have from those days, is from after we had added support for Gopher and FTP and renamed the tool ‘urlget’. urlget-3.5.zip was released on January 20 1998 which thus was more than a year later my involvement in httpget started.

The original httpget/urlget/curl code was stored in CVS and it was licensed under the GPL. I did most of the early development on SunOS and Solaris machines as my first experiments with Linux didn’t start until 97/98 something.

The first web page I know we have saved on archive.org is from December 1998 and by then the project had been renamed to curl already. Roughly two years after the start of the journey.

RFC 2068 was the first HTTP/1.1 spec. It was released already in January 1997, so not that long after the 1.0 spec shipped. In our project however we stuck with doing HTTP 1.0 for a few years longer and it wasn’t until February 2001 we first started doing HTTP/1.1 requests. First shipped in curl 7.7. By then the follow-up spec to HTTP/1.1, RFC 2616, had already been published as well.

The IETF working group called HTTPbis was started in 2007 to once again refresh the HTTP/1.1 spec, but it took me a while until someone pointed out this to me and I realized that I too could join in there and do my part. Up until this point, I had not really considered that little me could actually participate in the protocol doings and bring my views and ideas to the table. At this point, I learned about IETF and how it works.

I posted my first emails on that list in the spring 2008. The 75th IETF meeting in the summer of 2009 was held in Stockholm, so for me still working on HTTP only as a spare time project it was very fortunate and good timing. I could meet a lot of my HTTP heroes and HTTPbis participants in real life for the first time.

I have participated in the HTTPbis group ever since then, trying to uphold the views and standpoints of a command line tool and HTTP library – which often is not the same as the web browsers representatives’ way of looking at things. Since I was employed by Mozilla in 2014, I am of course now also in the “web browser camp” to some extent, but I remain a protocol puritan as curl remains my first “child”.

Over time, I’ve reluctantly come to terms with the fact that a lot of questions and answers about curl is not done on the mailing lists we have setup in the project itself.

Over time, I’ve reluctantly come to terms with the fact that a lot of questions and answers about curl is not done on the mailing lists we have setup in the project itself. But still. 25,000 questions. Wow.

But still. 25,000 questions. Wow.

Originally, curl on Mac was built against OpenSSL for the TLS and SSL support, but over time our friends at Apple have switched more and more of their software over to use their own TLS and crypto library

Originally, curl on Mac was built against OpenSSL for the TLS and SSL support, but over time our friends at Apple have switched more and more of their software over to use their own TLS and crypto library  I decided I’d show up a little early at the Sheraton as I’ve been handling the interactions with hotel locally here in Stockholm where the workshop will run for the coming three days. Things were on track, if we ignore how they got the wrong name of the workshop on the info screens in the lobby, instead saying “Haxx Ab”…

I decided I’d show up a little early at the Sheraton as I’ve been handling the interactions with hotel locally here in Stockholm where the workshop will run for the coming three days. Things were on track, if we ignore how they got the wrong name of the workshop on the info screens in the lobby, instead saying “Haxx Ab”…