I’ve talked on this topic before but I realized I never did a proper blog post on the topic. So here it is: how we develop curl to keep it safe. The topic of supply chain security is one that is discussed frequently these days and every so often there’s a very well used (open source) component that gets a terrible weakness revealed.

Don’t get me wrong. Proprietary packages have their share of issues as well, and probably even more so, but for obvious reasons we never get the same transparency, details and insight into those problems and solutions.

curl

curl, in the shape of libcurl primarily, is one of the world’s most commonly used software components. It is installed in somewhere around ten billion installations world wide. It might even be forty billion. Nobody knows.

If we would find a critical vulnerability in curl, it could potentially exist in every internet-connected device on the globe. We don’t want that.

A critical security flaw in our products would be bad, but we also similarly need to make sure that we provide APIs and help users of our products to be safe and to use curl safely. To make sure users of libcurl don’t accidentally end up getting security problems, to the best of our ability.

In the curl project, we work hard to never have our own version of a “heartbleed moment“. How do we do this?

Always improving

Our method is not strange, weird or innovative. We simply apply all best practices, tools and methods that are available to us. In all areas. As we go along, we tighten the screws and improve our procedures, learning from past mistakes.

There are no short cuts or silver bullets. Just hard work and running tools.

Not a coincidence

Getting safe and secure code into your product is not something that happens by chance. We need to work on it and we need to make a concerned effort. We must care about it.

We all know this and we all know how to do it, we just need to make sure that we also actually do it.

The steps

- Write code following the rules

- Review written code and make sure it is clear and easy to read.

- Test the code. Before and after merge

- Verify the products and APIs to find cracks

- Bug-bounty to reward outside helpers

- Act on mistakes – because they will happen

Writing

For users of libcurl we provide an API with safe and secure defaults as we understand the power of the default. We also document everything with details and take great pride in having world-class documentation. To reduce the risk of applications becoming unsafe just because our API was unclear.

We also document internal APIs and functions to help contributors write better code when improving and changing curl.

We don’t allow compiler warnings to remain – on any platform. This is sometimes quite onerous since we build on such a ridiculous amount of systems.

We encourage use of source code comments and assert()s to make assumptions obvious. (curl is primarily written in C.)

Review

All code should be reviewed. Maintainers are however allowed to review and merge their own pull-requests for practical reasons.

Code should be easy to read and understand. Our code style must be followed and encourages that: for example, no assignments in conditions, one statement per line, no lines longer than 80 columns and more.

Strict compliance with the code style also means that the code gets a flow and a consistent look, which makes it easier to read and manage. We have a tool that verifies most aspects of the code style, which takes away most of that duty away from humans. I find that PR authors generally take code style remarks better when pointed out by a tool than when humans do it.

A source code change is accompanied with a git commit message that need to follow the template. A consistent commit message style makes it easier to later come back and understand it proper when viewing source code history.

Test

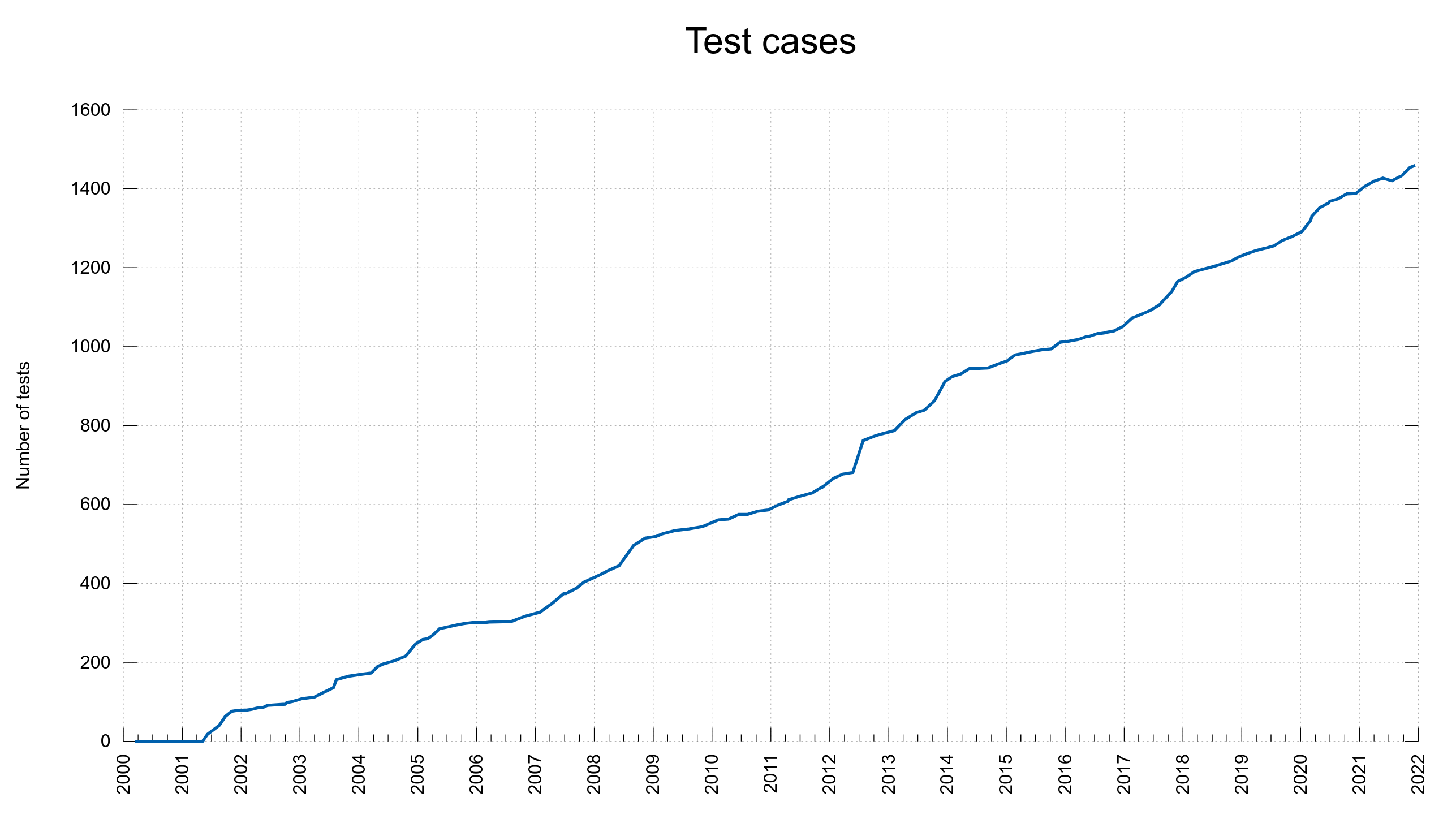

We want everything tested.

- Unit tests. We strive at writing more and more unit tests of internal functions to make sure they truly do what expected.

- System tests. Do actual network transfers against test servers, and make sure different situations are handled.

- Integration tests. Test libcurl and its APIs and verify that they handle what they are expected to.

- Documentation tests. Check formats, check references and cross-reference with source code, check lists that they include all items, verify that all man pages have all sections, in the same order and that they all have examples.

- “Fix a bug? Add a test!” is a mantra that we don’t always live up to, but we try.

curl runs on 80+ operating systems and 20+ CPU architectures, but we only run tests on a few platforms. This usually works out fine because most of the code is written to run on multiple platforms so if tested on one, it will also run fine on all the other.

curl has a flexible build system that offers many million different build combinations with over 30 different possible third-party libraries in countless version combinations. We cannot test all build combos, but we try to test all the popular ones and at least one for each config option enabled and disabled.

We have many tests, but there are unfortunately still gaps and details not tested by the test suite. For those things we simply have to rely on the code review and then that users report problems in the shipped products.

Verify

We run all the tests using valgrind to make sure nothing leaks memory or do bad memory accesses.

We build and run with address, undefined behavior and integer overflow sanitizers.

We are part of the OSS-Fuzz project which fuzzes curl code non-stop, and we run CIFuzz in CI builds, which runs “a little” fuzzing on the curl code in the normal pull-request process.

We do “torture testing“: run a test case once and count the number of “fallible” function calls it makes. Those are calls to memory allocation, file operations, socket read/write etc. Then re-run the test that many times, and for each new iteration we make another one of the fallible functions fail and return error. Verify that no memory leaks or crashes occur. Do this on all tests.

We use several different static code analyzers to scan the code checking for flaws and we always fix or otherwise handle every reported defect. Many of them for each pull-request and commit, some are run regularly outside of that process:

- scan-build

- clang tidy

- lgtm

- CodeQL

- Lift

- Coverity

The exact set has varied and will continue to vary over time as services come and go.

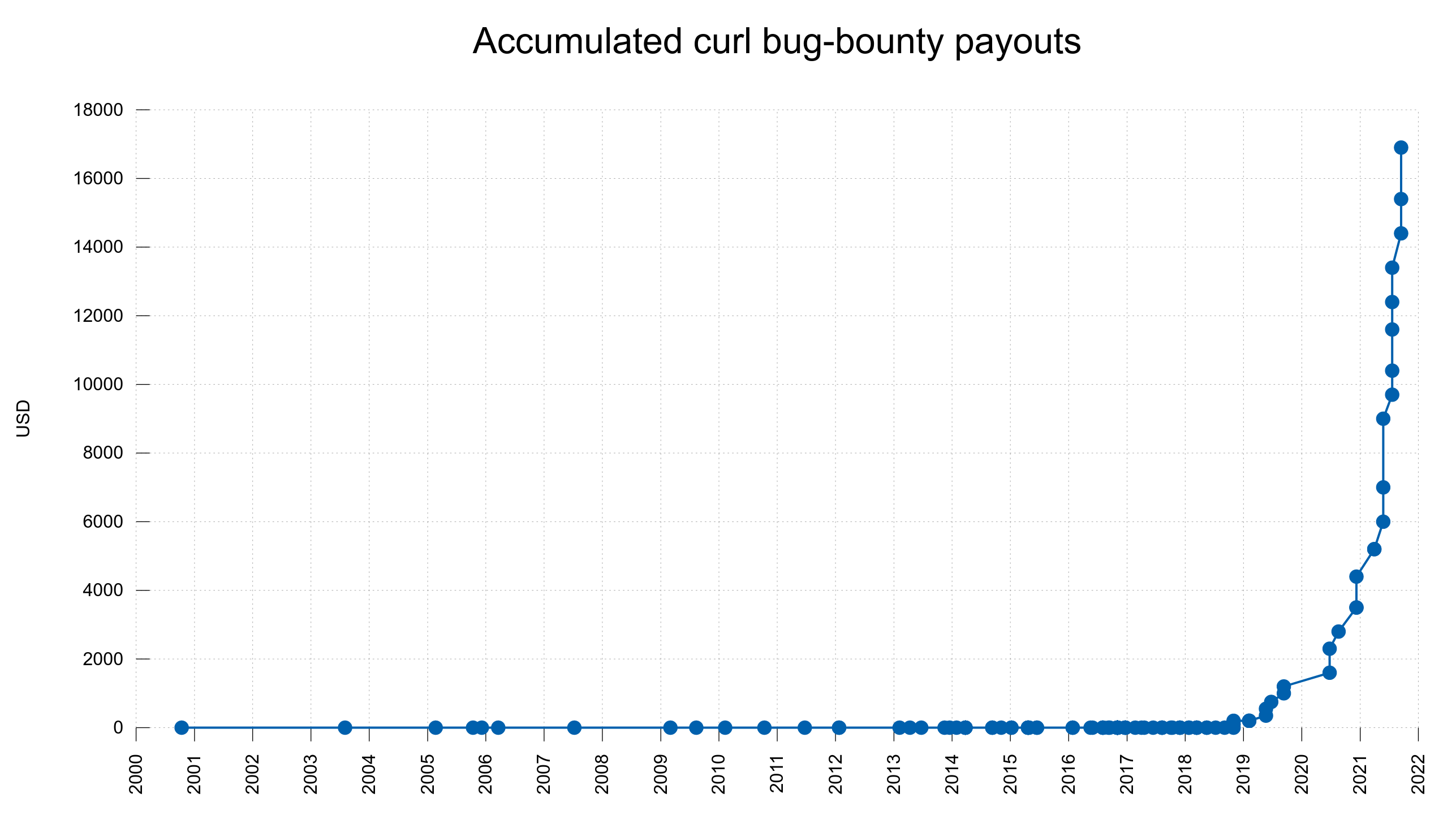

Bug-bounty

No matter how hard we try, we still ship bugs and mistakes. Most of them of course benign and harmless but some are not. We run a bug-bounty program to reward security searchers real money for reported security vulnerabilities found in curl. Until today, we have paid almost 17,000 USD in total and we keep upping the amounts for new findings.

When we report security problems, we produce detailed and elaborate advisories to help users understand every subtle detail about the problem and we provide overview information that shows exactly what versions are vulnerable to which problems. The curl project aims to also be a world-leader in security advisories and related info.

Act on mistakes

We are not immune, no matter how hard we try. Bad things will happen. When they do, we:

- Act immediately.

- Own the problem, responsibly

- Fix it and announce it – as soon as possible

- Learn from it

- Make it harder to do the same or similar mistakes again

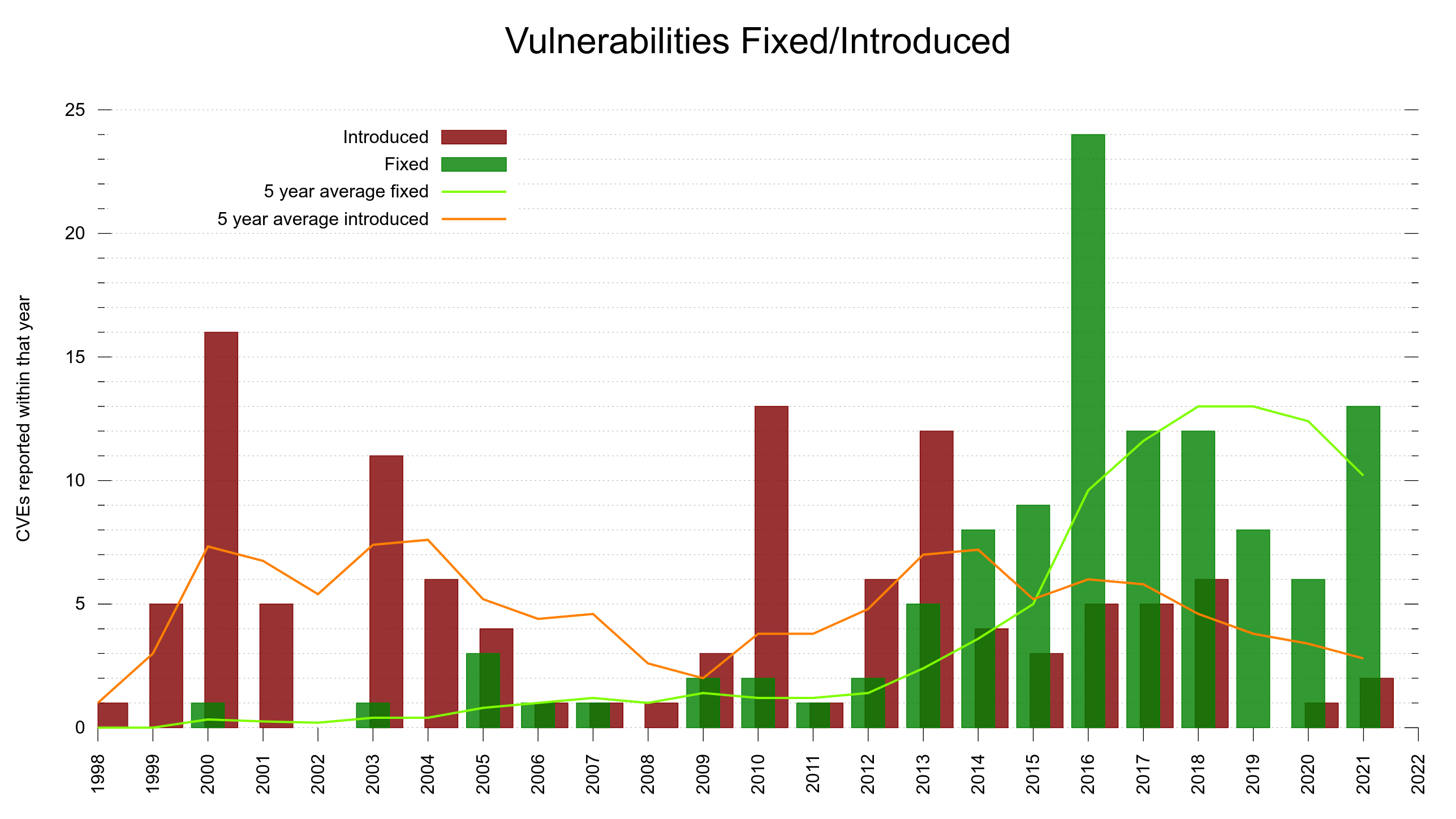

Does it work? Do we actually learn from our history of mistakes? Maybe. Having our product in ten billion installations is not a proof of this. There are some signs that might show we are doing things right:

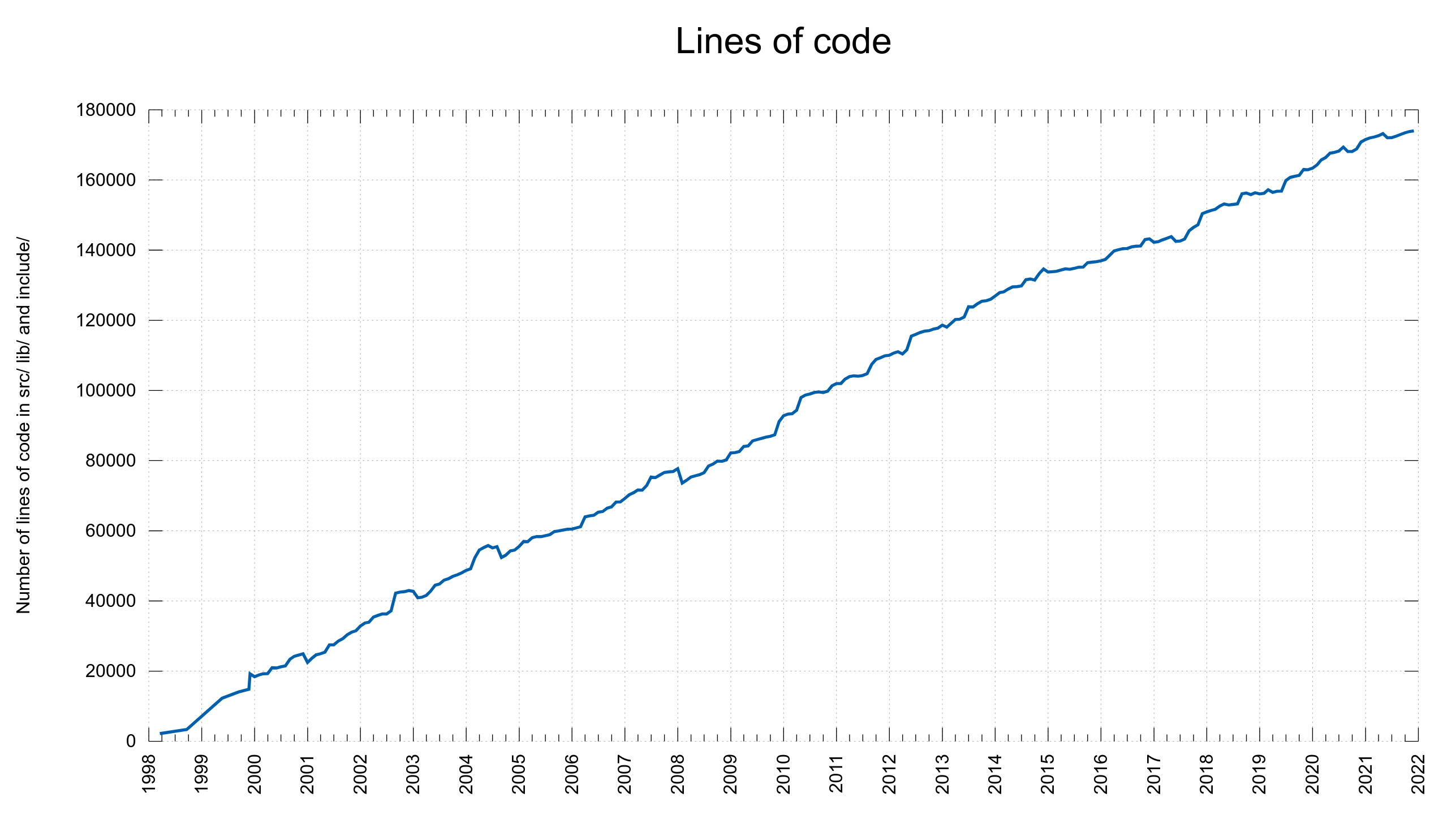

- We were reporting fewer CVEs/year the last few years but in 2021 we went back up. It could also be the result of more people looking, thanks to the higher monetary rewards offered. At the same time the number of lines of code have kept growing at a rate of around 6,000 lines per year.

- We get almost no issues reported by OSS-Fuzz anymore. The first few years it ran it found many problems.

- We are able to increase our bug-bounty payouts significantly and now pay more than one thousand USD almost every time. We know people are looking hard for security bugs.

Continuous Integration

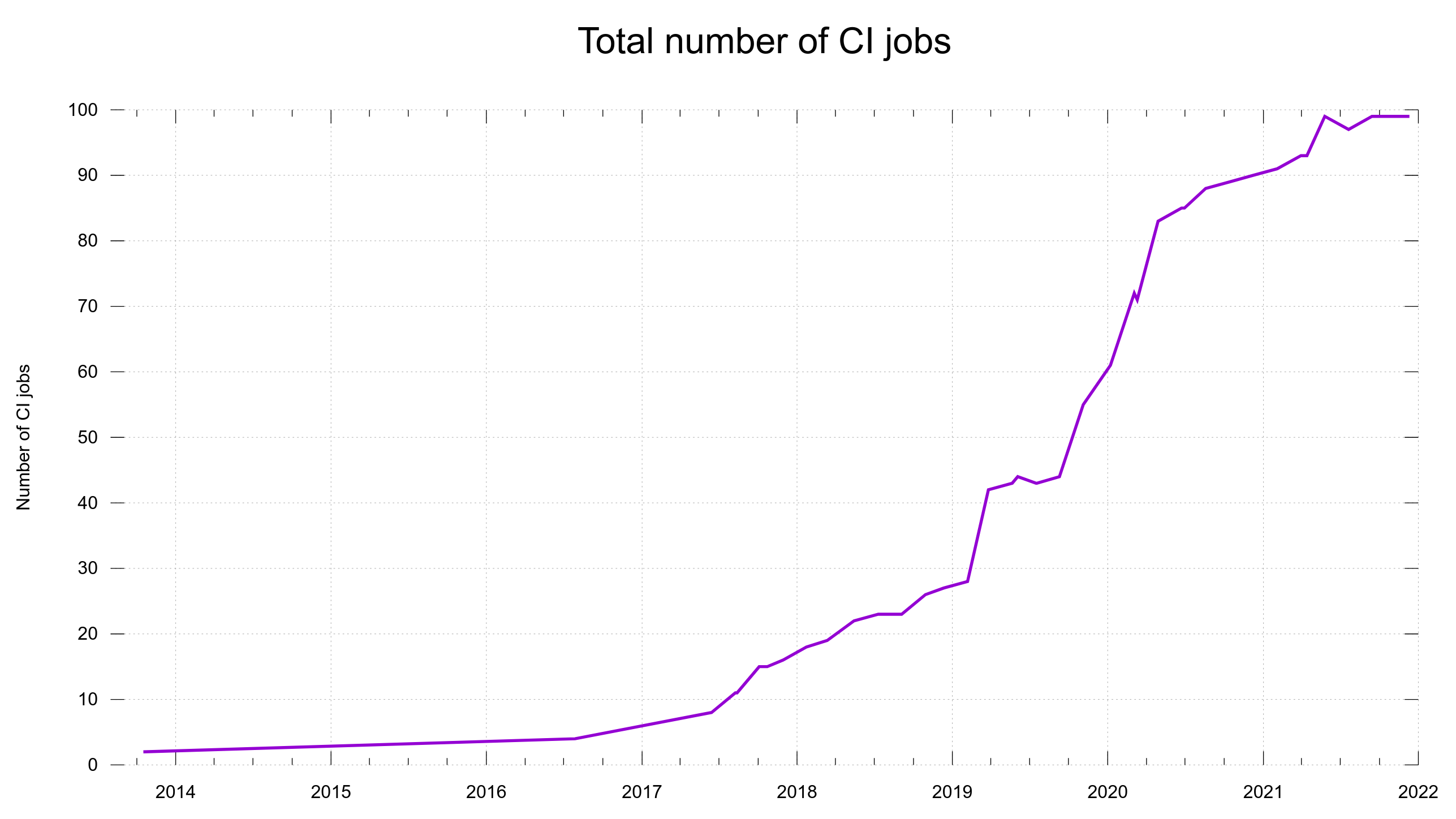

For every pull-request and commit done in the project, we run about 100 different builds + test rounds.

- Test code style

- Run thousands of tests per build

- Build and test on tens of platforms

- Over twenty hours of CPU time per commit

- Done using several different CI services for maximum performance, widest possible coverage and shortest time to completion.

We currently use the following CI services: Cirrus CI, AppVeyor, Azure Pipelines, GitHub Actions, Circle CI and Zuul CI.

We also have a separate autobuild system with systems run by volunteers that checkout the latest code, build, run all the tests and report back in a continuous manner a few times or maybe once per day.

New habits past mistakes have taught us

We have done several changes to curl internals as direct reactions to past security vulnerabilities and their root causes. Lessons learned.

Unified dynamic buffer functions

These days we have a family of functions for working with dynamically sized buffers. Be using the same set for this functionality we have it well tested and we reduce the risk that new code messes up. Again, nothing revolutionary or strange, but as curl had grown organically over the decades, we found ourselves in need of cleaning this up one day. So we did.

Maximum string sizes

Several past mistakes came from possible integer overflows due to libcurl accepting input string sizes of unrestricted lengths and after doing operations on such string sizes, they would sometimes lead to overflows.

Since a few years back now, no string passed to curl is allowed to be larger than eight megabytes. This limit is somewhat arbitrarily set but is meant to be way larger than the largest user names and passwords ever used etc. We could also update the limit in a future, should we want. It’s not a limit that is exposed in the API or even mentioned. It is there to trap mistakes and malicious use.

Avoid reallocs

Thanks to the previous points we now avoid realloc as far as possible outside of those functions. History shows that realloc in combination with integer overflows have been troublesome for us. Now, both reallocs and integer overflows should be much harder to mess up.

Code coverage

A few years ago we ran code coverage reports for one build combo on one platform. This generated a number that really didn’t mean a lot to anyone but instead rather mislead users to drawing funny conclusions based on the report. We stopped that. Getting a “complete” and representative number for code coverage for curl is difficult and nobody has yet gone back to attempt this.

The impact of security problems

Every once in a while someone discovers a security problem in curl. To date, those security vulnerabilities have been limited to certain protocols and features that are not used by everyone and in many cases even disabled at build-time among many users. The issues also often rely on either a malicious user to be involved, either locally or remotely and for a lot of curl users, the environments it runs in limit that risk.

To date, I’m not aware of any curl user, ever, having been seriously impacted by a curl security problem.

This is not a guarantee that it will not ever happen. I’m only stating facts about the history so far. Security is super hard and I can only promise that we will keep working hard on shipping secure products.

Is it scary?

Changes done to curl code today will end up in billions of devices within a few years. That’s an intimidating fact that could truly make you paralyzed by fear of the risk that the world will “burn” due to a mistake of mine.

Rather than instilling fear by this outlook, I think the proper way to think it about it, is respecting the challenge and “shouldering the responsibility”. Make the changes we deem necessary, but make them according to the guidelines, follow the rules and trust that the system we have setup is likely to detect almost every imaginable mistake before it ever reaches a release tarball. Of course we plug holes in the test suite that we spot or suspect along the way.

The back-door threat

I blogged about that recently. I think a mistake is much more likely to slip-in and get shipped to the world than a deliberate back-door is.

Memory safe components might help

By rewriting parts of curl to use memory safe components, such as hyper for HTTP, we might be able to further reduce the risk of future vulnerabilities. That’s a long game to make reality. It will also be hard in the future to actually measure and tell for sure if it truly made an impact.

How can you help out?

- Pay for a curl support contract. This is what enables me to work full time on curl.

- Help out with reviews and adding new tests to curl

- Help out with fixing issues and improving the code

- Sponsor curl

- Report all bugs you find

- Upgrade your systems to run modern curl versions

Credits

Image by Dorian Krauss from Pixabay

Great post, thanks for sharing!

I’m curious about the torture testing part; how was it implemented? Can it be reused in other projects?

@DJ: It is implemented with a bunch of macros that change all code to instead call the memdebug-versions of the function calls. Those functions then either return an error or call the “real” function. You can find the curl code for this in lib/memdebug.[ch]. Like here.

It can be reused, but it hasn’t been written to be easily extracted and repurposed so it will require at least some manual hands on. And for other languages than C or C++, the logic probably needs to be done differently.

The memdebug code we have is also used to log/trace memory usage, so that we can check in post if all memory was actually released. A “poor mans version” of valgrind if you will. That works on many more systems and environments than valgrind does.