![]() We started off this second embedded hacking day (the first one being the one we had in October) when I sent out the invitation email on April 22nd asking people to sign up. We limited the number of participants to 40, and within two hours all seats had been taken! Later on I handed out more tickets so we ended up with 49 people on the list and interestingly enough only 13 of these were signed up for the previous event as well so there were quite a lot of newcomers.

We started off this second embedded hacking day (the first one being the one we had in October) when I sent out the invitation email on April 22nd asking people to sign up. We limited the number of participants to 40, and within two hours all seats had been taken! Later on I handed out more tickets so we ended up with 49 people on the list and interestingly enough only 13 of these were signed up for the previous event as well so there were quite a lot of newcomers.

Arrival

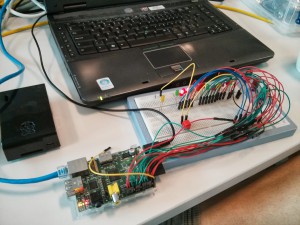

At 10 in the morning on Saturday June 1st, the first people had already arrived and more visitors were dropping in one by one. They would get a goodie-bag from our gracious host with t-shirt (it is the black one you can see me wearing on the penguin picture on the left), some information and a giveaway thing. This time we unfortunately did not have a single female among the attendees, but the all-male crowd would spread out in the room and find seating, power and switches to use. People brought their laptops and we soon could see a very wide range of different devices, development boards and early design ideas showing up on the tables. Blinking leds and cables everywhere. Exactly the way we like it!

Giveaway

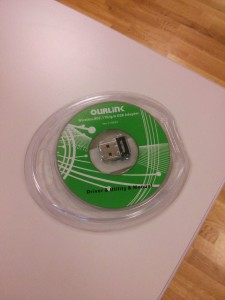

We decided pretty early on the planning for this event that we wouldn’t give away a Raspberry Pi again like we did last time. Not that it was a bad thing to give away, it was actually just a perfect gift, but simply because we had already done that and wanted to do something else and we reasoned that by now a lot of this audience already have a Raspberry pi or similar device.

So, we then came up with a little device that could improve your Raspberry Pi or similar board: a USB wifi thing with Linux drivers so that you easily can add wifi capabilities to your toy projects!

And in order to provide something that you can actually hack on during the event, we decided to give away an Arduino Nano version. Unfortunately, the delivery gods were not with us or perhaps we had forgot to sacrifice the correct animal or something, so this second piece didn’t arrive in time. Instead we gathered people’s postal addresseAns and once the package arrives in a couple of days we will send it out to all attendees. Sort of a little bonus present afterwards. Not the ideal situation, but hey, we did our best and I think this is at least a decent work-around.

So the fun begun

In the big conference room next to the large common room, I said welcome to everyone at 11:00 before I handed over to Magnus from Xilinx to talk about Xilinx Zynq and combining ARM and FPGAs.  The crowd proved itself from the first minute and Magnus got a flood of questions immediately. Possibly it was also due to the lovely combo that Magnus is primarily a HW-guy while the audience perhaps was mostly SW-persons but with an interest in lowlevel stuff and HW and how to optimize embedded systems etc.

The crowd proved itself from the first minute and Magnus got a flood of questions immediately. Possibly it was also due to the lovely combo that Magnus is primarily a HW-guy while the audience perhaps was mostly SW-persons but with an interest in lowlevel stuff and HW and how to optimize embedded systems etc.

After this initial talk, lunch was served.

Contest

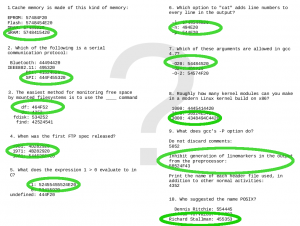

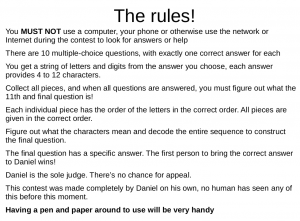

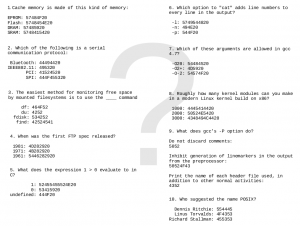

I got lots of positive feedback the last time on the contest I made then, so I made one this time around as well and it was fun again. See my separate post on the contest details.

Flying

After the dust had settled and everyones’ pulses had started to go back to normal again after the contest, Björn Stenberg “took the stage” at 14:00 and educated us all in how you can use 7 Arduinos when flying an R/C plane.

It seemed as if Björn’s talk really hit home among many people in the audience and there was much talking and extra interest in Björn’s large pile of electronics and “stuff” that he had brought with him to show off. The final video Björn showed during his talk can be found here.

Stuff to eat

People actually want to get something done too during a day like this so we can’t make it all filled up with talks. Enea provided candy, drinks and buns. And of course coffee and water during the entire day.

People actually want to get something done too during a day like this so we can’t make it all filled up with talks. Enea provided candy, drinks and buns. And of course coffee and water during the entire day.

Even with buns and several coffee refills, I think people were slowly getting soft in their brains when the afternoon struck and to really make people wake up, we hit them with Erik Alapää’s excellent talk…

Aliasing in C and C++

Or as Erik specified the full title: “Aliasing in C99/C++11 and data transfer between hard real-time systems on modern RISC processors”…

Erik helped put the light on some sides of the C programming language that perhaps aren’t the most used or understood. How aliasing can be used and what pitfalls it can send us down into!

Personally I don’t really had a lot of time or comfort to get much done this day other than making sure everything ran smooth and that everyone was happy and the schedule was kept. My original hopes was to get some time to do some debugging on a few of my projects during the day but I failed that ambition…

Personally I don’t really had a lot of time or comfort to get much done this day other than making sure everything ran smooth and that everyone was happy and the schedule was kept. My original hopes was to get some time to do some debugging on a few of my projects during the day but I failed that ambition…

We made sure to videofilm all the talks so we should hopefully be able to provide online versions of them later on.

Real-time Linux

I took the last speaker slot for the day. I think lots of brains were soft by then, and a few people had already started to drop off. I talked for a while generically about how the real-time problem (or perhaps low-latency) is being handled with Linux these days and explained a bit about PREEMPT_RT and full dynamic ticks and what the differences of the methods are.

The end

At 20:00 we forced everyone out of the facilities. A small team of us grabbed a bite and a couple of beers to digest the day and to yap just a little bit more before we split up for the evening and took off home…

Thank you everyone who was there for making it another great event. Thank you all speakers for giving the event the extra brightness! Thank you Enea for sponsoring, hosting and providing all the goodies in such an elegant manner! It is indeed possible that we make a 3rd embedded hacking day in the future…

curl first implemented cookie support way back in the early days in the late 90s. I participated in the IETF work that much later

curl first implemented cookie support way back in the early days in the late 90s. I participated in the IETF work that much later

My “landline” phone in my house is connected over

My “landline” phone in my house is connected over

In this little piece I’ll explain why there won’t be any version 8 of curl and libcurl in a long time. I won’t rule out that it might happen at some point in the future. Just that it won’t happen anytime soon and explain the reasons why.

In this little piece I’ll explain why there won’t be any version 8 of curl and libcurl in a long time. I won’t rule out that it might happen at some point in the future. Just that it won’t happen anytime soon and explain the reasons why.