… the slides from my talk today at Techdays by Init in Stockholm:

The curl project has its roots in late 1996, but we haven’t kept track of all of the early code history. We imported our code to Sourceforge in late 1999 and that’s how far back we can see in our current git repository. The exact date is “Wed Dec 29 14:20:26 UTC 1999”. So, almost 14 years of development.

Warning: this blog post contains more useless info and graphs than many mortals can handle. Be aware!

How much old code remains in the current source tree? Or perhaps put differently: what is the refresh rate of the code? We fix bugs, we change things, we add features. Surely we’ll slowly over time rewrite the old code and replace it with new more shiny and better working code? I decided to check this. Here’s what I found!

We have all code in git. ‘git blame’ is the primary tool I used as it lists all lines of all source code and tells us when it was added. I did some additional perl scripting around it.

I decided to check all code in the src/ and lib/ directories in the curl and libcurl source tree. The source code is used to create both the curl tool and the libcurl library and back in 1999 there was no libcurl like today so we do get a slightly better coverage of history this way.

In total this sums up to some 112000 lines in the current .c and .h files.

To count the total amount of commits done to those specific files through history I ran:

git log --oneline src/*.[ch] lib/*.[ch] | wc -l

6047 commits in total. (if I don’t specify the files and count all commits in the repo it ends up at 16954)

We run gitstats on the curl repo every day so you can go there for some more and current stats. Right now it tells us that average number of commits is 4.7 per active day (that means days when actually something was committed), or 3.4 per all days over the entire time. There was git activity 3576 days in total. By 224 authors.

How much of the code would you think still remains that was present already that December day in 1999?

How much of the code in the current code base would you think was written the last few years?

I wanted to see how much old code exists, or perhaps how the age of the code is represented in the current code base. I therefore decided to base my logic on the author time that git tracks. It is basically the time when the author of a change commits it to his/her local tree. The change can then be applied later on by a committer, who can be someone else, but the author time remains the same. Sometimes a committer commits multiple patches at once, possibly at a much later time, so I figured the author time would be a better time stamp. I also decided to track the date instead of just the commit hash so that I can sort the changes properly and also make interesting graphs that are based on that time. I use the time with one-second precision, so changes done a second apart will be recorded as two separate changes while two commits done with the same author time stamp will be counted as a single change.

I had my script run ‘git blame --line-porcelain’ for all files and sum up all changes done at the same time.
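The original scripting was done in perl, so the following is only an illustrative Python sketch of the same idea. It assumes the usual layout of ‘git blame --line-porcelain’ output, where every source line comes with its own block of header lines (including one starting with “author-time”) and the source line itself is prefixed with a TAB. The function name and the sample data are made up for this example.

```python
from collections import Counter


def lines_per_author_time(porcelain_text):
    """Count surviving lines per author timestamp in line-porcelain output."""
    counts = Counter()
    for line in porcelain_text.split("\n"):
        # Source lines start with a TAB, so they can never be mistaken
        # for the "author-time <epoch seconds>" header line.
        if line.startswith("author-time "):
            counts[int(line.split()[1])] += 1
    return counts


# Hand-written sample mimicking the output for three source lines.
sample = "\n".join([
    "a" * 40 + " 1 1 2",
    "author Alice",
    "author-time 946477226",
    "author-tz +0000",
    "\tint main(void)",
    "a" * 40 + " 2 2",
    "author Alice",
    "author-time 946477226",
    "author-tz +0000",
    "\t{",
    "b" * 40 + " 3 3 1",
    "author Bob",
    "author-time 1370000000",
    "author-tz +0200",
    "\treturn 0;",
])

result = lines_per_author_time(sample)
print(dict(result))
```

For a real file, the text could come from something like `subprocess.run(["git", "blame", "--line-porcelain", path], capture_output=True, text=True).stdout`, with the counters from all files added together.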

The code base contains changes written at 4147 different times. Converted to UTC times, they happened on 2076 unique days. On 167 unique months. That’s every month since the beginning.
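Counting unique days and months from the author times can be sketched like this in Python. It assumes the timestamps are plain epoch seconds, which is what git records, and converts them to UTC before bucketing. The function name is hypothetical and the list of timestamps is just a small example, not the real data.

```python
from datetime import datetime, timezone


def unique_days_and_months(timestamps):
    """Return (number of unique UTC days, number of unique UTC months)."""
    days = set()
    months = set()
    for ts in timestamps:
        moment = datetime.fromtimestamp(ts, tz=timezone.utc)
        days.add(moment.date())
        months.add((moment.year, moment.month))
    return len(days), len(months)


example = [
    946477226,  # Wed Dec 29 14:20:26 UTC 1999, the import date
    946477227,  # one second later, same day
    946684800,  # 2000-01-01 00:00:00 UTC
    946771200,  # 2000-01-02 00:00:00 UTC
]
day_count, month_count = unique_days_and_months(example)
print(day_count, month_count)
```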

We’re talking about 312 files.

A graph with changes over time. The Y axis is number of lines that were changed on that particular time. (click for higher res)

Ok you object, that doesn’t look very appealing. So here’s the same data but with all the changes accumulated over time.

Do you think the same as I do? Isn’t it strangely linear? It seems that the number of added lines that remain in the code today is virtually the same over time! But fair enough, the changes in the X axis are not distributed according to the time/date they represent so we shouldn’t be fooled by the time, but certainly we can see that changes in general only bring in a certain amount of surviving modified lines.

Another way to count the changes is then to check all the ~4000 change times of the present code, and see how many days between them there are:

Ah, now finally we’re seeing something. Older code that is still present clearly was made with longer periods in between the changes that have lasted. It makes perfect sense to me, since the many years of development probably have later overwritten a lot of code that was written in between.

Also, it is clear that the more recent changes that have survived were often done on the same day as, or just a few days away from, another lasting change.
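The “days between changes” measure discussed above could be computed roughly like this. This is a minimal sketch that assumes the change times are epoch seconds; the function name is made up and the input is a toy example.

```python
SECONDS_PER_DAY = 86400


def day_gaps(timestamps):
    """Days between each change time and the next one, oldest first."""
    ordered = sorted(set(timestamps))
    return [
        (later - earlier) / SECONDS_PER_DAY
        for earlier, later in zip(ordered, ordered[1:])
    ]


gaps = day_gaps([0, 259200, 86400])
print(gaps)
```

Plotting these gaps in chronological order is one way to get a graph where old surviving changes sit far apart and recent ones cluster together.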

The number of modified lines split up on the individual year the change came in.

Interesting! The general trend is clear and not surprising. Two years stand out from the trend, 2004 and 2011. I have not yet investigated which particular larger changes made in those years have survived. The bump for 1999 is simply the original import and most of those lines are preprocessor lines like #ifdef and #include or just opening and closing braces { and }.

Splitting up the number of surviving lines on the specific year+month they were added:

This helps us analyze the previous chart. As we can see, the rather tall bars from 2004 and 2011 are actually several months wide, which explains the bumps in the year chart. Clearly we made some larger efforts in those periods that were good enough to still remain in the code.

So, can we perhaps see if some years’ higher activity in number of added or removed source lines can be tracked back to explain the number of surviving source code lines? I ran “git diff [hash1]..[hash2] --stat -- lib/*.[ch] src/*.[ch]” for all years to get a summary of the number of added and removed source code lines that year. I added those numbers to the table with surviving lines and then I made another graph:

Funnily enough, we see almost an exact correlation there for the first eight years and then the pattern breaks. From the year 2009 the number of removed lines went down but still the amount of surviving lines went up quite a bit and then the graphs jump around a bit.

My interpretation of this graph is this boring: the amount of surviving code in absolute numbers clearly correlates with the amount of added code. And we removed more code yearly in the 2000-2003 period than what has survived.

But notice how the blue line is closing the gap to the orange/red one over time, which means that percentage wise there’s more surviving code in more recent code! How much?

Here’s the amount of surviving lines/added lines and a second graph looking at surviving lines/(added + removed) to see if the mere source code activity would be a more suitable factor to compare against…

Code committed within the last 5 years is basically 75% left but then it goes downhill to the 18% survival rate of the 1999 code import.
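The two ratios in those graphs are simple to compute once the per-year numbers are in a table. Here is a small sketch; the function name is hypothetical and the numbers in the example call are invented purely to show the arithmetic, they are not the real curl figures.

```python
def survival_rates(surviving, added, removed):
    """Return (surviving / added, surviving / (added + removed))."""
    per_added = surviving / added
    per_activity = surviving / (added + removed)
    return per_added, per_activity


# Made-up numbers for one year: 10000 lines added, 5000 removed,
# and 1800 of the added lines still present today.
per_added, per_activity = survival_rates(1800, 10000, 5000)
print(f"{per_added:.0%} of added lines survive")
print(f"{per_activity:.0%} when measured against all line activity")
```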

If you can think of other good info to dig out, let me know!

We started off this second embedded hacking day (the first one being the one we had in October) when I sent out the invitation email on April 22nd asking people to sign up. We limited the number of participants to 40, and within two hours all seats had been taken! Later on I handed out more tickets so we ended up with 49 people on the list and interestingly enough only 13 of these were signed up for the previous event as well so there were quite a lot of newcomers.

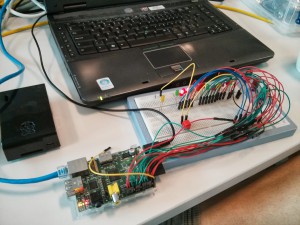

At 10 in the morning on Saturday June 1st, the first people had already arrived and more visitors were dropping in one by one. They would get a goodie-bag from our gracious host with t-shirt (it is the black one you can see me wearing on the penguin picture on the left), some information and a giveaway thing. This time we unfortunately did not have a single female among the attendees, but the all-male crowd would spread out in the room and find seating, power and switches to use. People brought their laptops and we soon could see a very wide range of different devices, development boards and early design ideas showing up on the tables. Blinking leds and cables everywhere. Exactly the way we like it!

We decided pretty early in the planning for this event that we wouldn’t give away a Raspberry Pi again like we did last time. Not that it was a bad thing to give away, it was actually just a perfect gift, but simply because we had already done that and wanted to do something else and we reasoned that by now a lot of this audience already has a Raspberry Pi or similar device.

So, we then came up with a little device that could improve your Raspberry Pi or similar board: a USB wifi thing with Linux drivers so that you easily can add wifi capabilities to your toy projects!

And in order to provide something that you can actually hack on during the event, we decided to give away an Arduino Nano version. Unfortunately, the delivery gods were not with us or perhaps we had forgotten to sacrifice the correct animal or something, so this second piece didn’t arrive in time. Instead we gathered people’s postal addresses and once the package arrives in a couple of days we will send it out to all attendees. Sort of a little bonus present afterwards. Not the ideal situation, but hey, we did our best and I think this is at least a decent work-around.

In the big conference room next to the large common room, I said welcome to everyone at 11:00 before I handed over to Magnus from Xilinx to talk about Xilinx Zynq and combining ARM and FPGAs.  The crowd proved itself from the first minute and Magnus got a flood of questions immediately. Possibly it was also due to the lovely combo that Magnus is primarily a HW-guy while the audience perhaps was mostly SW-persons but with an interest in lowlevel stuff and HW and how to optimize embedded systems etc.

After this initial talk, lunch was served.

I got lots of positive feedback the last time on the contest I made then, so I made one this time around as well and it was fun again. See my separate post on the contest details.

After the dust had settled and everyone’s pulses had started to go back to normal again after the contest, Björn Stenberg “took the stage” at 14:00 and educated us all in how you can use 7 Arduinos when flying an R/C plane.

It seemed as if Björn’s talk really hit home among many people in the audience and there was much talking and extra interest in Björn’s large pile of electronics and “stuff” that he had brought with him to show off. The final video Björn showed during his talk can be found here.

People actually want to get something done too during a day like this so we can’t make it all filled up with talks. Enea provided candy, drinks and buns. And of course coffee and water during the entire day.

Even with buns and several coffee refills, I think people were slowly getting soft in their brains when the afternoon struck and to really make people wake up, we hit them with Erik Alapää’s excellent talk…

Or as Erik specified the full title: “Aliasing in C99/C++11 and data transfer between hard real-time systems on modern RISC processors”…

Erik helped put the light on some sides of the C programming language that perhaps aren’t the most used or understood. How aliasing can be used and what pitfalls it can send us down into!

Personally I didn’t really have a lot of time or comfort to get much done this day other than making sure everything ran smoothly and that everyone was happy and the schedule was kept. My original hope was to get some time to do some debugging on a few of my projects during the day but I failed that ambition…

We made sure to videofilm all the talks so we should hopefully be able to provide online versions of them later on.

I took the last speaker slot for the day. I think lots of brains were soft by then, and a few people had already started to drop off. I talked for a while generically about how the real-time problem (or perhaps low-latency) is being handled with Linux these days and explained a bit about PREEMPT_RT and full dynamic ticks and what the differences of the methods are.

At 20:00 we forced everyone out of the facilities. A small team of us grabbed a bite and a couple of beers to digest the day and to yap just a little bit more before we split up for the evening and took off home…

Thank you everyone who was there for making it another great event. Thank you all speakers for giving the event the extra brightness! Thank you Enea for sponsoring, hosting and providing all the goodies in such an elegant manner! It is indeed possible that we make a 3rd embedded hacking day in the future…

Okay, so here are the correct answers to the embedded hacking #2 contest (click for larger pictures):

The fact that you get the clues as hexadecimal uppercase ASCII was pretty quickly clear to everybody. I found it interesting to hear how people attacked the problem of decoding the hex into letters. Most people seem to have made a lookup-table fairly soon, and at least one contestant I talked to made a mistake in his table that turned W into X instead! This year’s winner did the conversion completely without a written down table…

So all the pieces are decoded like this:

Of course, now a pedant would argue that FORK() isn’t correct, but I decided to use all uppercase just to make the conversion slightly easier. At least I think converting only uppercase ASCII as hex is easier. So the question is “What does fork() return in the child process?”
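For those who would rather not build a lookup table by hand, decoding uppercase ASCII given as hex takes only a few lines of Python. The hex string below is my own encoding of “FORK()” for illustration, not a copy of any of the actual contest slides, and the function name is made up.

```python
def decode_hex_clue(clue):
    """Turn a string of hex digit pairs into the ASCII text it encodes."""
    return bytes.fromhex(clue).decode("ascii")


decoded = decode_hex_clue("464F524B2829")
print(decoded)

# Going the other way, to produce a clue from plain text:
encoded = "FORK()".encode("ascii").hex().upper()
print(encoded)
```

It also shows how easy the table mistake mentioned above is to make: W is 0x57 and X is 0x58, so the two are a single step apart.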

The answer to the question is 0 (zero). Short and simple. See fork’s man page.
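The answer can be checked with a tiny experiment. This sketch uses Python’s os.fork(), which wraps the system call, so it assumes a Unix-like system. The function name is hypothetical. The child sends the value it got from fork() through a pipe so that the parent can report both sides.

```python
import os


def fork_return_values():
    """Fork once; return (value seen in the child, value seen in the parent)."""
    read_end, write_end = os.pipe()
    pid = os.fork()
    if pid == 0:
        # Child process: fork() returned 0 here. Report it and exit at once,
        # skipping the interpreter's normal cleanup.
        os.close(read_end)
        os.write(write_end, str(pid).encode("ascii"))
        os._exit(0)
    # Parent process: fork() returned the child's process ID.
    os.close(write_end)
    child_value = int(os.read(read_end, 32).decode("ascii"))
    os.close(read_end)
    os.waitpid(pid, 0)  # reap the child so it does not linger as a zombie
    return child_value, pid


child_value, parent_value = fork_return_values()
print("in the child:", child_value)
print("in the parent:", parent_value)
```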

After 13 minutes and 20 seconds since I clicked start on the timer, Linus Nielsen Feltzing approached me with a little note with the correct answer and we had a winner!

The very happy Linus was very disappointed in the previous competition when he was very close to winning but was beaten just within seconds by last time’s winner.

Now, the Chromebook that Enea donated to the winner of the contest was handed over to Linus. (The Samsung Cortex-A15 version.)

I created another contest for the Embedded hacking event we just pulled off again, organized with foss-sthlm and Enea. Remember that I made one previously at our former hacking day?

The lesson from that time was that the puzzle ingredient then was slightly too difficult so people had to work a bit too long. It made many people give up and the ones who didn’t had to spend a significant time on solving it.

This time, I decided to use the same basic principle: ask N questions that all provide hints for the (N+1)th question, so that the first one to give me the answer to that final question is the winner. It makes it very easy for me to judge and it is a rather neat competition style game. I decided 10 questions should be enough.

To reduce some of the complexity from last time, I decided to provide the individual clues in the correct chronological order but instead add another twist: they aren’t in plain text! But since they’re chronological, the participants can go back and quite “easily” try other alternatives if there are some strange words appearing in the output. I made sure that all alternatives always have fine English alternatives so that if you pick the wrong answer it might still sound or look like English for a while…

I was very happy to see over 30 persons in the room that decided to accept the challenge. I suspect the prize did its part in attracting people to give it a go.

The rules in slightly longer terms as I put them (click it to see a higher resolution version):

And I clarified how the questions work:

I then started my timer, and I showed all the questions on the projector to everyone. I gave them around 40 seconds per question. It thus took almost seven minutes to go through them and then I left a final slide up showing all questions:

To allow readers to give this contest a go first before checking the answers, the full answer and explanation are in a separate post.

In this little piece I’ll explain why there won’t be any version 8 of curl and libcurl in a long time. I won’t rule out that it might happen at some point in the future. Just that it won’t happen anytime soon and explain the reasons why.

We’ve done 29 minor releases and many more patch releases since version seven was born, on August 7 2000. We did in fact bump the ABI number a couple of times so we had the chance of bumping the version number as well, but we didn’t take the chance back then and these days we have a much firmer commitment and determination to not break the ABI.

There’s really no particular downside with having a minor version 29. Given our current speed and minor versioning rules, we’ll bump it 4-6 times/year and we won’t have any practical problems until we reach 256. (This particular detail is because we provide the version number info with the API using 8 bits per major, minor and patch field and 8 bits can as you know only hold values up to 255.) Assuming we bump minor number 6 times per year, we’ll reach the problematic limit in about 37 years in the fine year 2050. Possibly we’ll find a reason to bump to version 8 before that.
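The 8-bit limit and the estimate above can be sketched in a few lines. This assumes the three fields are packed into one number as 0xXXYYZZ (major, minor, patch), which is how I understand libcurl’s LIBCURL_VERSION_NUM to be laid out. The function names are made up for this illustration.

```python
FIELD_MAX = 0xFF  # the largest value an 8-bit field can hold


def pack_version(major, minor, patch):
    """Pack three 8-bit fields into a single 0xXXYYZZ number."""
    for value in (major, minor, patch):
        if not 0 <= value <= FIELD_MAX:
            raise ValueError(f"{value} does not fit in 8 bits")
    return (major << 16) | (minor << 8) | patch


def years_until_limit(current_minor, bumps_per_year):
    """Years until the minor number would reach 256."""
    return (FIELD_MAX + 1 - current_minor) / bumps_per_year


print(hex(pack_version(7, 29, 0)))
print(int(years_until_limit(29, 6)))
```

With minor at 29 and six bumps per year that gives a bit under 38 years, which is where the “about 37 years” estimate comes from. Calling pack_version(7, 256, 0) raises ValueError, which is the practical problem described above.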

Prepare yourself for seven point an-increasingly-higher-number for a number of years coming up!

Yes!

We have compatibility within the ABI number so that a later version always works with a program built to use the older version. We have several hundred million users. That means an awful lot of programs are built to use this particular ABI number. Changing the number has a ripple effect so that at some point in time a new version has to replace all the old ones and applications need to be rebuilt – and at worst also possibly have to be rewritten in parts to handle the ABI/API changes. The amount of work done “out there” on hundreds or thousands of applications for a single little libcurl tweak can be enormous. The last time we bumped the ABI, we got a serious amount of harsh words and critical feedback and since then we’ve gotten many more users!

Yes in theory they do, but in practice they don’t.

If you build applications they have the ABI number stored for which lib to use, so if you just keep the different versions of the libraries installed in the file system you’ll be fine. Then the older applications will keep using the old version and the ones you rebuild will be made to use the new version. Everything is fine and dandy and over time all rebuilt applications will use the latest ABI and you can delete the older version from the system.

In reality, libraries are provided by distributions or OS vendors and they ship applications that link to a specific version of the underlying libraries. These distributions only want one version of the lib, so when an ABI bump is made all the applications that use the lib will be rebuilt and have to be updated.

If we found ourselves cornered, unable to continue development without a bump, then of course we would take the pain it involves. But as things are right now, we have a few things we don’t really like with the current API and ABI but in general it works fine and there are no major downsides or great pains involved. We simply do not have any particularly good reason to bump the version number or ABI version. Things work pretty well the current way.

The future is of course unknown and at some point we’ll face a true limitation in the API that we need to bridge over with a bump, but it can also take a long while until we hit that snag.

Update April 6th: this article has been read by many and I’ve read a lot of comments and some misunderstandings about it. Here are some additional clarifications:

I think github is a lovely resource for collaborating on source code with my friends all over the globe. Among other things, we host the primary curl repository there and we’ve been doing so for almost three years now. This experience has led me to discover a bunch of things I miss in the service…

github is clearly aimed at repositories run by one person or a small set of persons, while in the projects I run I try to involve as many as possible in wide collaboration and I put efforts into informing everyone to get the widest possible attention and feedback. I may have created the account and “own” the repository, but I want the work to be done by a large team and I want everything that happens to it to be seen by a large audience. This is not always possible to do easily with the existing github services.

To further this spirit and to widen cooperation more, I would like to see the following improvements:

I realize GitHub has features that offer me to create an “organization” to host a repository instead of it being owned by me as a person, but I don’t think that should be a requirement to get this functionality. And I don’t know if GitHub truly offers better group functionality then either.

A while ago Sourceforge gave me the offer to upgrade curl’s bug tracker to “the new one” they offer. They do offer some arguments to why you would want to do this but they don’t elaborate much on the transition for existing projects. Since I’ve been annoyed and disappointed on the old one for years I decided to dive right in. I decided to post this blog entry to possibly encourage others as well, or at least explain how upgrading worked for us.

I’ll start by explaining a bit about what’s so bad about the old Sourceforge bug tracker. Anyone who has tried to use it for anything “real” most likely already knows about these things, so I figure my list can be used for comparison to see if we’ve gotten annoyed by the same things.

Annoying things with the new tracker:

Summary: the upgrade was totally worth it. A much better bug tracker with much more useful interfaces, both the web interface and the ability to respond to it by email etc. And still room for improvements!

libcurl internals suddenly became a lot cleaner and neater to work with when we made all code assume and work with the multi interface!

libcurl was initially created slightly after the birth of the curl tool. After the tool started to get some traction and use out in the world, requests and queries about a library with its powers started to drop in. Soon enough, in the year 2000 we shipped the first release of libcurl and it featured a synchronous API (the “easy” interface) that performs the complete operation and then returns. I think we can now say that the blocking easy interface was successful and its ease of use has been very popular and appreciated by many users.

During 2002 the need for a non-blocking API had been identified and we introduced the multi interface. The multi interface is kind of a super-set as it re-uses the same handles as is used with the easy interface, so it cleverly makes it fairly easy for a standard application to move from the easy interface to the multi.

Basically since that day, we’ve struggled in the source code structure to handle the fact that we have both a blocking and a non-blocking API. In lots of places we’ve had different code paths and choices done depending on which API was used. It made the source code hard to follow and it occasionally introduced hard-to-track bugs which could lead to the multi and easy interfaces not behaving the same way towards the underlying network or protocol. It was clear very early on that it wasn’t an ideal design choice, but it was a design choice that was spread out among the code and it stuck.

During November 2012 I finally took on the code that we’ve had #ifdef’ed since around 2005, which makes the blocking easy interface operation a wrapper function around the non-blocking multi interface functions. Using this method, all internals should be considered non-blocking and there is no need left to treat things differently depending on which API was used because everything is now multi interface == non-blocking.
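The shape of that design can be shown with a toy model. To be clear, this is not libcurl code and none of these class or function names exist in libcurl. It is only a minimal Python sketch of a blocking call implemented as a loop around a non-blocking driver, so that the internals only ever need to know about the non-blocking path.

```python
class ToyTransfer:
    """A pretend transfer that needs several non-blocking steps to finish."""

    def __init__(self, chunks):
        self._pending = list(chunks)
        self.received = []

    def step(self):
        """Do one small unit of work; return True while more work remains."""
        if self._pending:
            self.received.append(self._pending.pop(0))
        return bool(self._pending)


class ToyMulti:
    """Non-blocking driver: each perform() call advances every transfer a bit."""

    def __init__(self):
        self._transfers = []

    def add(self, transfer):
        self._transfers.append(transfer)

    def perform(self):
        """Advance all transfers one step; return how many are still running."""
        self._transfers = [t for t in self._transfers if t.step()]
        return len(self._transfers)


def easy_perform(transfer):
    """Blocking call built purely on top of the non-blocking driver."""
    multi = ToyMulti()
    multi.add(transfer)
    while multi.perform() > 0:
        pass  # a real implementation would wait for socket activity here
    return transfer.received


print(easy_perform(ToyTransfer(["a", "b", "c"])))
```

The point of the arrangement is that ToyTransfer.step() has exactly one code path, no matter whether the caller wanted blocking or non-blocking behavior.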

On January 17th 2013 the big patch was committed. 400 added lines, 800 removed over 54 modified files…

So what did happen in the curl project during 2012?

We shipped 6 releases with 199 identified bug fixes and some 40 other changes. That makes on average 33 bug fixes shipped every 61st day or a little over one bug fix done every second day. All this done with about 1000 commits to the git repository, which is roughly the same amount of git activity as 2010 and 2011. We merged commits from 72 different authors, which is a slight increase from the 62 in 2010 and 68 in 2011.

On our main development mailing list, the curl-library list, we now have 1300 subscribers and during 2012 it got about 3500 postings from almost 500 different From addresses. To no surprise, I posted by far the largest amount of mails there (847) with the number two poster being Günter Knauf who posted 151 times. Four more members posted more than 100 times: Steve Holme (145), Dan Fandrich (131), Marc Hoersken (130) and Yang Tse (107). Last year I sent 1175 mails to the same list…

I’ve walked through the biggest changes and fixes and here are the particular ones I found stood out during this otherwise rather calm and laid back curl year. Possibly in a rough order of importance…

I won’t promise that any of these will happen during 2013 but I can promise there will be efforts…

I wrote a separate post a short while ago about the HTTP2 progress, and I expect 2013 to bring much more details and discussions in that area. Will we get SRV record support soon? Or perhaps even URI records? Will some of the recent discussions about new HTTP auth schemes develop into something that will reach the internet in the coming year?

In libcurl we will switch to an internal design that is purely non-blocking, with a lot of if-then-that-else source code removed that checks which interface is used. I’ll make a follow-up post with details about that as well as soon as it actually happens.

curl and libcurl are considered pillars in the internet world by now. This year I’ve heard from several independent sources how people consider support by curl to be an important driver for internet technology. As long as we don’t have it, it hasn’t really reached everyone, and things won’t get adopted for real in the Internet community until curl supports them. As father of the project it makes me proud and humble, but I also feel the responsibility of making sure that we continue to do the right thing the right way.

I also realize that this position of ours is not automatically glued to us, we need to keep up the good stuff to make it stick.