
-U, --proxy-user

The short version of this option uses the uppercase letter ‘U’. The case matters, since the lowercase letter ‘u’ is used for another option. The long form of the option is spelled --proxy-user.

This command option existed already in the first ever curl release!

The man page’s first paragraph describes this option as:

Specify the user name and password to use for proxy authentication.

Proxy

This option is for using a proxy. So let’s first briefly look at what a proxy is.

A proxy is a “middle man” in the communication between a client (curl) and a server (the one that holds the contents you want to download or will receive the content you want to upload).

The client communicates via this proxy to reach the server. When a proxy is used, the server communicates only with the proxy and the client also only communicates with the proxy:

curl <===> proxy <===> server

There exist several different types of proxies, and a proxy can require authentication before it lets you use it.

Proxy authentication

Sometimes the proxy you want or need to use requires authentication, meaning that you need to provide your credentials to the proxy in order to be allowed to use it. The -U option is used to set the name and password used when authenticating with the proxy (separated by a colon).

You need to know this name and password; curl can’t figure them out. The exception is if you’re on Windows and your curl is built with SSPI support, as it can then magically use the current user’s credentials if you provide blank credentials in the option: -U :.

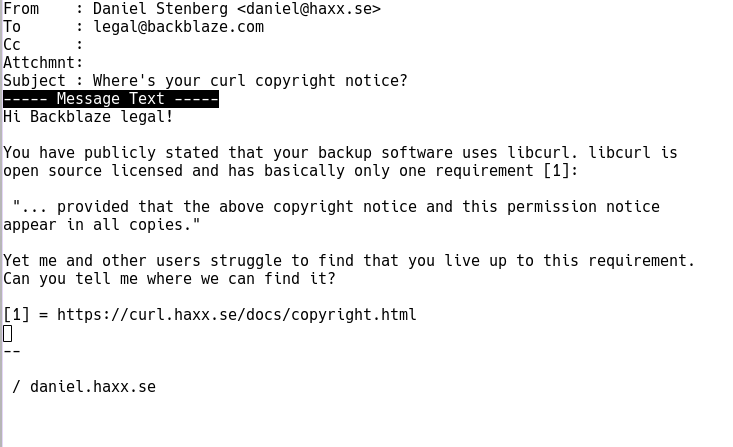

Security

Providing passwords in command lines is a bit icky. If you write them in a script, someone else might see the script and figure them out.

If the proxy communication is done in clear text (for example over HTTP) some authentication methods (for example Basic) will transmit the credentials in clear text across the network to the proxy, possibly readable by others.

Command line options may also appear in process listings, so other users on the system can see them there, although curl will attempt to blank them out from ps output if the system supports it (Linux does).
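One way to keep the credentials off the command line, sketched here under some assumptions, is to put them in a curl config file that only you can read and hand that file to curl with -K (--config). The helper name write_proxy_config, the file path, the proxy URL and the credentials below are all made up for illustration.

```shell
# Hypothetical helper: write the proxy settings to a private config file.
# $1 is the path of the file to create.
write_proxy_config() {
  cfg=$1
  (
    umask 077   # new files get owner-only permissions inside this subshell
    cat > "$cfg" <<'EOF'
proxy = "http://proxy.example.com:8080"
proxy-user = "user:password"
EOF
  )
  chmod 600 "$cfg"   # also tighten a file that already existed
}

# Usage (not run here):
#   write_proxy_config "$HOME/.proxy-curlrc"
#   curl -K "$HOME/.proxy-curlrc" https://example.com
```

The password then never shows up in the process listing, but it still sits in clear text on disk, so the file permissions matter.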

Needs other options too

A typical command line that uses -U also sets at least which proxy to use, with the -x option. Its argument is a URL that specifies the type of proxy, the proxy host name and the port number the proxy runs on.

If the proxy is an HTTP or HTTPS type, you might also need to specify which type of authentication you want to use, for example with --proxy-anyauth to let curl figure it out by itself.

If you know what HTTP auth method the proxy uses, you can also explicitly enable that directly on the command line with the correct option, for example --proxy-basic or --proxy-digest.
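To show how the authentication options combine with -x and -U, here is a small sketch of a shell wrapper. The function name proxy_get and the variables PROXY and PROXY_USERPWD are assumptions made for this example; only the curl options themselves come from curl.

```shell
# Hypothetical wrapper: $1 picks the proxy auth option, the remaining
# arguments are passed on to curl unchanged.
# PROXY and PROXY_USERPWD are assumed to be set by the caller.
proxy_get() {
  method=$1
  shift
  case $method in
    any)    auth=--proxy-anyauth ;;  # let curl pick what the proxy offers
    basic)  auth=--proxy-basic ;;
    digest) auth=--proxy-digest ;;
    *) echo "unknown method: $method" >&2; return 2 ;;
  esac
  curl -x "$PROXY" -U "$PROXY_USERPWD" "$auth" "$@"
}

# Usage (not run here):
#   PROXY=http://proxy.example.com:8080
#   PROXY_USERPWD=user:password
#   proxy_get digest https://example.com
```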

SOCKS proxies

curl also supports SOCKS proxies, which are a different type than HTTP or HTTPS proxies. When you use a SOCKS proxy, you need to tell curl that, either with the correct prefix in the -x argument or with one of the --socks* options.
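The two ways of selecting a SOCKS proxy might look like this for SOCKS5. This is only a sketch: the function names, the proxy host, the port and the credentials are placeholders, and the commands are wrapped in functions so nothing runs until you call them.

```shell
# Hypothetical helpers; host, port and credentials are placeholders.

# Select SOCKS5 with the scheme prefix in the -x argument.
socks_get_prefix() {
  curl -x socks5://proxy.example.com:1080 -U user:password "$@"
}

# Select SOCKS5 with the dedicated --socks5 option instead.
socks_get_option() {
  curl --socks5 proxy.example.com:1080 -U user:password "$@"
}

# Usage (not run here):
#   socks_get_prefix https://example.com
#   socks_get_option https://example.com
```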

Example command line

curl -x http://proxy.example.com:8080 -U user:password https://example.com

See Also

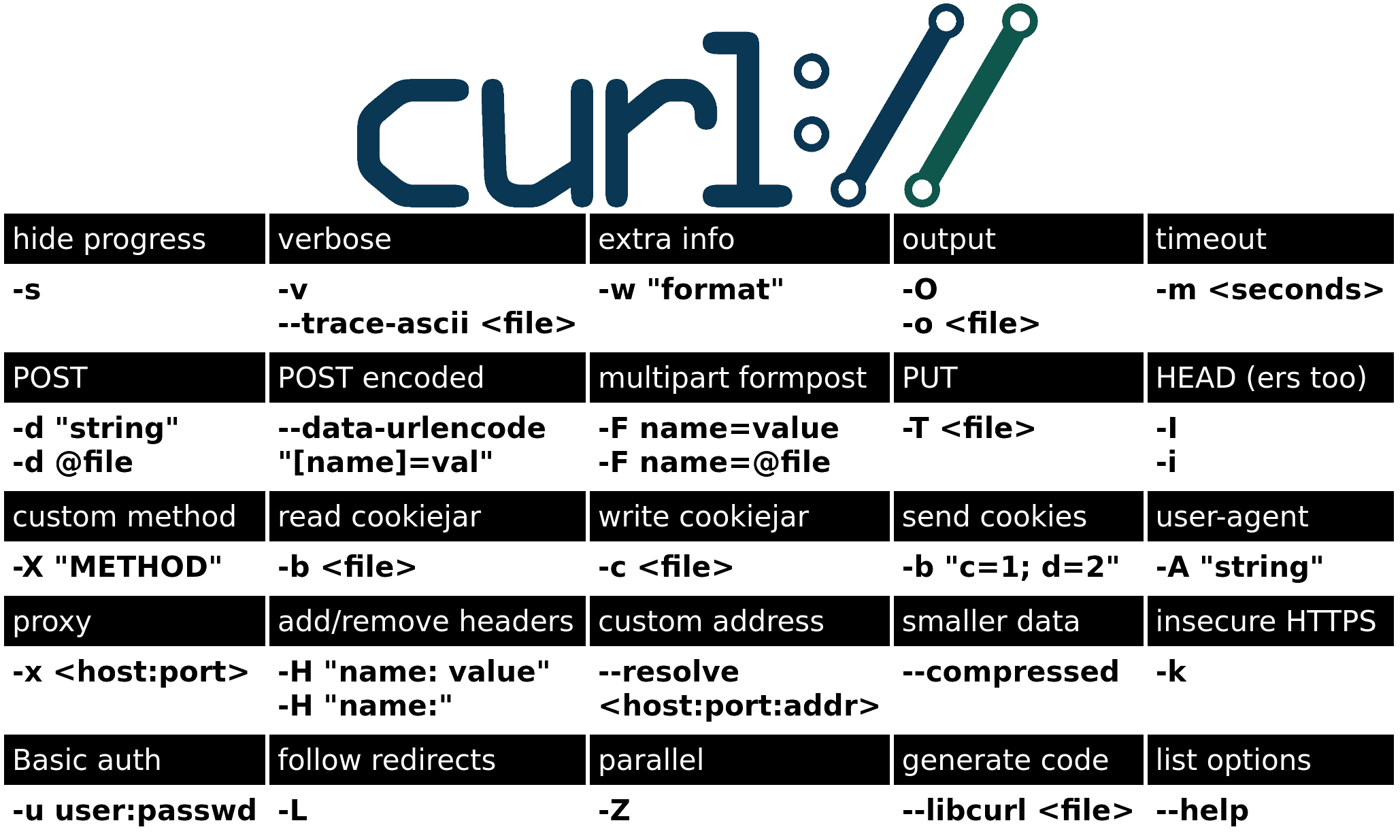

The corresponding option for sending credentials to a server instead of proxy uses the lowercase version: -u / --user.
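Since the two options are separate, they can appear in the same command line when both the proxy and the server ask for credentials. A minimal sketch, with a made-up function name and placeholder credentials:

```shell
# Hypothetical helper: -U carries the proxy credentials,
# -u carries the credentials for the destination server.
fetch_with_both() {
  curl -x http://proxy.example.com:8080 \
       -U proxyuser:proxypassword \
       -u siteuser:sitepassword \
       "$@"
}

# Usage (not run here):
#   fetch_with_both https://example.com
```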