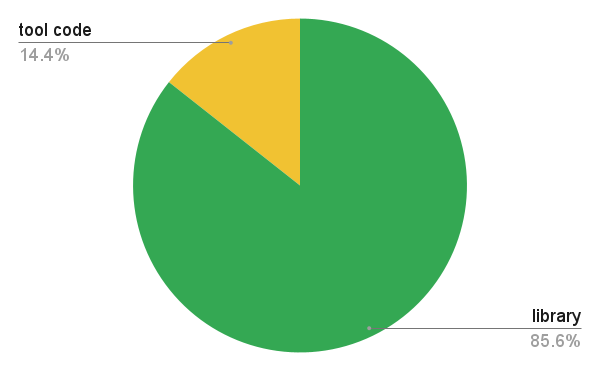

The curl project has been alive for decades. We gradually introduce new features and options into the command line tool and library over time and we work hard never to break existing behavior and keep the ABI and API stable.

Still, some features and functionality go out of style sooner or later. Versions get deprecated, and third-party dependencies go stale and become unsuitable for use.

How to discard “dead branches”

I like the mental image of curl as a big flourishing tree, with roots, the main trunk, branches and a multitude of thick green leaves.

Every once in a while some leaves or branches die. They turn all brown and dry and we need to do something about them. We trim the tree.

Dependencies go sour

A few times during curl’s lifetime we have found ourselves supporting a third-party dependency for some functionality, even though that library was perhaps no longer something we wanted to recommend our users rely on. When we are made aware that a library isn’t being maintained properly, or that it is falling behind in other respects, we need to make a decision.

By continuing to support a library, we indirectly give it our blessing. Products that no longer get updates, or that we no longer trust to keep up with the world, need to be “chopped off” from the curl family tree. We have done so with a few TLS libraries over the years, for example. Users who want to keep using TLS-powered protocols with curl and libcurl then “just” have to switch to a supported TLS library.

We don’t have any special mechanisms or policies for detecting these kinds of expired products; we simply have to use our judgement and do the best we can.

Protocol versions go extinct

The most obvious examples here are SSL and TLS versions. Back in 1998 there actually existed servers that supported SSL version 2. Since then, all SSL versions have been phased out from the internet, and so have several TLS versions. I doubt we have seen the last deprecated protocol version, so more are likely to follow.

In curl we follow the internet transfer ecosystem, and in many cases the TLS libraries tell us what curl can and cannot support. The options we once added that ask for certain specific protocol versions thus no longer actually work on most system installs. This is rarely a problem: even if users could ask curl to attempt a really old TLS version, hardly any servers still support those versions.

The most desperate users in niche situations can usually build their own versions of the TLS libraries and re-enable the deprecated versions if they really need to.

We might give up on features

Sometimes we add features to libcurl that, over time, never really get used or never work correctly. This can happen because the Internet world decided that the particular feature isn’t cool, or because it doesn’t fit well into the curl architecture. When we can remove such a feature without breaking the ABI, things are good. That is the case when the option the user sets merely asks for something to be used if possible. This is, for example, how we could remove support for HTTP/1 Pipelining from curl without breaking any promises.

Unused platforms erode

The source code for curl and libcurl is written to be extremely portable and has reportedly run on at least 86 different operating systems at some point. Platforms come and go, and their popularity and support go up and down over time. We might add support for a popular platform one year, which fifteen years or so down the line is basically never used or heard of any longer. Code for platforms that we never build or verify slowly but very surely “rots” and eventually can no longer be built.

After a certain number of years without attention, the cost of keeping around code that presumably does not work anyway becomes higher than the value of keeping it. If nobody then raises their hand and says they are in fact using curl on platform ZZ, we might rip out the adjustments we have in the code for that platform. We can always bring back support if someone suddenly emerges with such a desire and a plan.

In practical terms

When we figure out that there’s something in the project we think should be deprecated (a feature, a backend, something), we bring it up for discussion on the libcurl mailing list and we add it to the docs/DEPRECATE.md document, to be removed no earlier than six months later. This way, users can check this document and learn about these plans several releases ahead.

If someone objects to a particular deprecation plan, we take that discussion and possibly reconsider or delay the plan.

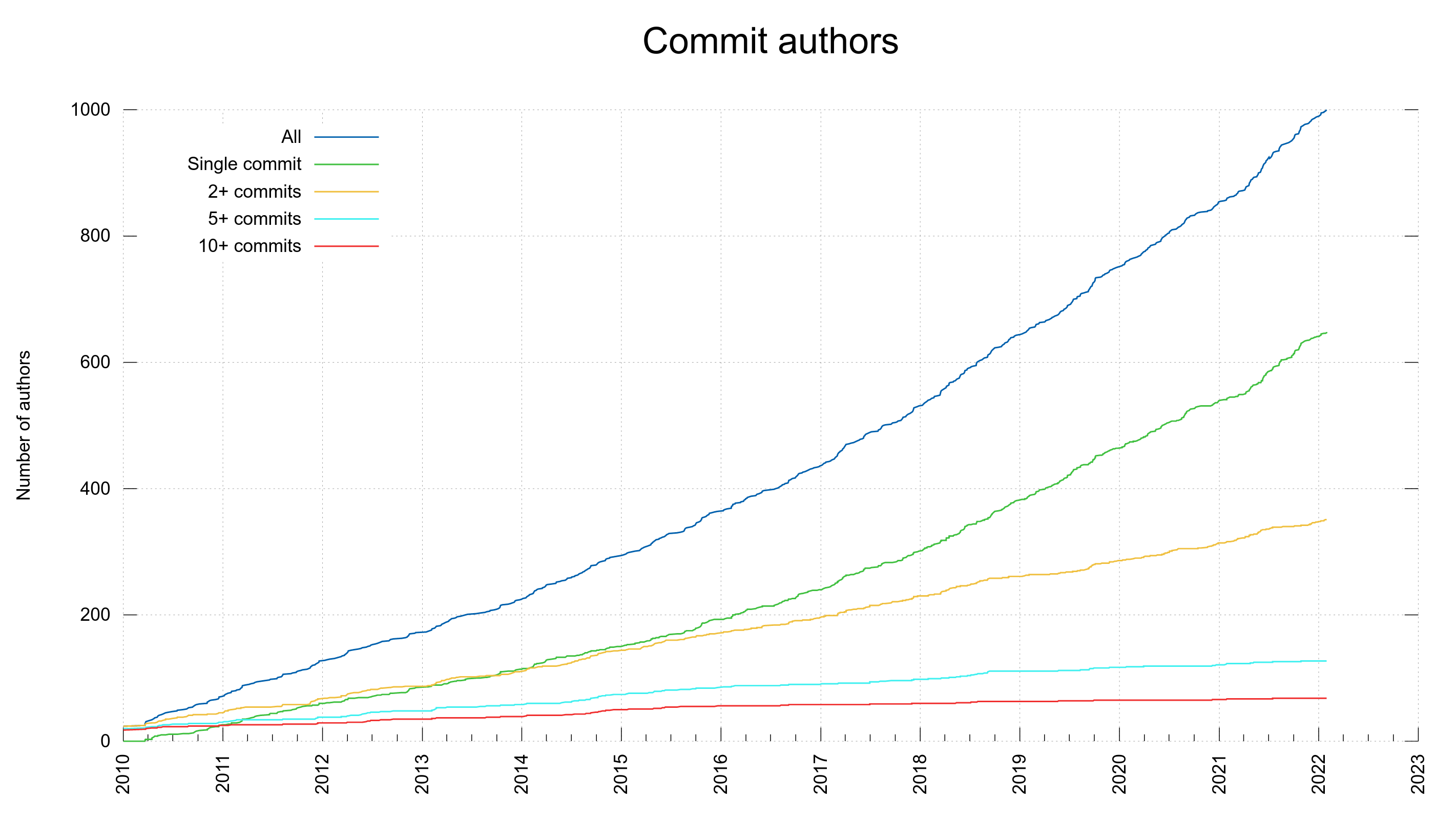

Credits

Top image by Ron Porter from Pixabay

Tree image by OpenClipart-Vectors from Pixabay