At March 5 2013 we had another foss-sthlm meetup. The 12th one in fact, and out of the five talks during the event I spoke about curl and new technologies. Here are the slides from my talk:

Category Archives: cURL and libcurl

curl and/or libcurl related

Now 1K+ awesome contributors

On March 20 1998 the first curl release shipped – but as it was built on a previous project there was already a handful of contributors. It was then just a modest little project with but a few thousand lines of code in total.

As time has passed, the project has grown and development has been going on at a rapid speed in a never-ending cycle of releases and bug fixes. The first couple of years I didn’t keep track of every single contributor properly, but one day in 2005 I decided to go back and try to collect the names of all the helpers so far. Names that had been mentioned in the changelogs and comments etc. When looking at our history, it is therefore not really sensible to look at the numbers before that cut-off date as I didn’t keep the count and logs properly before then.

All since then, I make an effort in properly giving credit to all contributors: patch producers, bug reporters, documentation writers as well as people “just” providing good advice. I try to mention all contributors. To give credit where credit is due. In a volunteer driven project, that is after all the best compensation I can offer.

Looking back over the years it seems the pace of newcomers have been quite steady. The 437 names in 2005 have grown to 1005 in less than eight years. Roughly 70 new contributors every year or 6 per month.

Now, in February 2013 with the release of 7.29.0, we surpassed the magic 1000 named contributors limit in the project. 1000 contributors in about 5437 days. On average one new soul every 5th day over a period of almost 15 years. Fascinatingly enough, even if I count a more recent period like the last 6 years the pace is only a little faster with one new just a little faster than every 5 days!

I originally planned to present the data as a graph in this post, but since the development has been so extremely linear it turned out so boring I scrapped it! Instead I’ll show you the top-10 most common first names among curl contributors:

- 23 David

- 14 Peter

- 14 John

- 13 Michael

- 11 Eric

- 10 Mark

- 9 Tom

- 9 Tim

- 9 Daniel

- 9 Chris

The names that didn’t quite make it and that all exist 8 times in the THANKS file are Steve, Robert, Richard, James, Dan, Christian, Andrew and Andreas. As I’ve mentioned before: not that many females in there.

(Yes, I’ve ignored that possibly some Daniels go by the name of Dan, some Chrises might be Christian and so on, I’ve only counted the actual names people have used.)

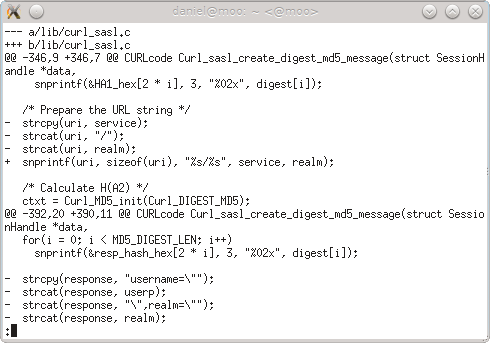

Why the latest security vulnerability in curl happened

In the end of January 2013 we got a fresh security vulnerability pointed out to us in the curl project (it was publicly announced on Feb 6). Another buffer overflow. This time in the SASL Digest-MD5 handling for POP3, IMAP and SMTP. It is the 16th security flaw during curl’s life-time of almost 15 years so it isn’t a disaster but still of course it is never fun when it happens. I put a lot of my own effort and pride into this project so every time something like this floats to the surface my pride and self-esteem get damaged a bit.

Everyone who’s concerned about open source and security and foremost in a reliable and secure libcurl of course now wonders: how did this happen? How could this piece of security problem get into libcurl and what are we doing to make sure it doesn’t happen again?

Let me tell you the story. It is not as interesting nor full of conspiracies as you’d like. It is instead rather dull and boring but nevertheless the truth.

I’m the lead developer and maintainer of curl and libcurl. I personally have done some 65% of all commits in the project and I do the majority of all code reviews on the mailing list. Our code might be used by some 500 million users, but the number of regulars that can be considered the “core team” can still basically be counted on a single hand. Also, we all do this primarily on our spare time.

During intense development periods we get flooded by bug reports and patch submissions and my backlog grows. It’s really not possible to foresee when these periods come, but occasionally it seems the planets align in this way and work piles up.

In order to then proceed the best way in the project, I try to focus on the architectural and “deep” matters that need me and my particular knowledge most. I then try to leave the “easy” problems that are easier to work on to others, and I try to stay away from the issues that already seem to be under control by some of the existing regulars in the project. I also have to let other “elders” in the project push things with slightly less scrutiny just to be able to plow through the work better. Unfortunately this leads to the occasional flaw getting through and in this case it was even a security vulnerability that when you look back on the code you really cannot understand how we could miss this.

We do take security seriously though and we make a big effort on handling all security reports swiftly and accurately. Even if this was the 16th time we let our guard down, I want to think that we at least react responsibly and in a good way when we realize our mistakes.

Please don’t judge us due to this. Please instead consider joining us and help us review code and help us find the next flaw before we merge it into mainline or at least before we do a public release with the code!

curl and libcurl 7.29.0

As a representative for the team behind curl and libcurl, we’re of course proud to yet again having shipped a release to the public today. Over 240 commits, with in total almost 10000 lines added and 6000 removed since the previous release in November 2012. We’re only a month away until the curl project turns 15 years old.

Some highlights this time include:

- We fixed a nasty overflow vulnerability we have been shipping in a few previous releases. The flaw existed in code used by IMAP, POP3 and SMTP.

- We introduced a new test suite output mode that is “automake compliant”. This can help linux distros and others who want to run many test suites and have a unified way of parsing the results and outcome. It follows the spirit of ptest and I believe it will be used in the future.

- The IMAP support got a lot of improvements and lots of login and authentication fixes were brought in. Now libcurl supports the sasl methods digest-md5, cram-md5, ntlm and login., and it also recognizes the login disabled server capability.

- Architecture wise, we remodeled the internals quite a lot and made it “always-multi“. This improves readability and internal complexity and is all just goodness. The short-term downside is possibly the risk for a temporary increase in bug reports due to this…

- 35 specified bug fixes were crammed in as well, and there are a bunch more we haven’t mentioned that just “silently” improved the multi interface functionality.

the new bug tracker on sourceforge

A while ago Sourceforge gave me the offer to upgrade curl’s bug tracker to “the new one” they offer. They do offer some arguments to why you would want to do this but they don’t elaborate much on the transition for existing projects. Since I’ve been annoyed and disappointed on the old one for years I decided to dive right in. I decided to post this blog entry to possibly encourage others as well, or at least explain how upgrading worked for us.

I’ll start by explaining a bit about what’s so bad about the old Sourceforge bug tracker. Anyone who has tried to use it for anything “real” most likely already know about these things and then I figure my list can be used for a comparison if we’ve gotten annoyed by the same things.

- They use a global bug id which makes all bug entries get very large numbers that aren’t in sequence and are fairly hard to remember.

- You can’t respond to bug reports by mail, so you are forced to use the heavy ad-filled web site.

- Ridiculous URLs to the bug tracker and each individual bug entry. I created a bounce CGI years ago on the curl web site to avoid having to use the overly long ones anywhere.

- When sending out email notifications, it prepends the new comments while having the older ones below which basically is an odd-order top-posting style a lot of people and projects have a hard time to get accustomed to.

- All the existing bug tracker entries were converted. They all now get numbered sequentially in a private number series so no more bug #31234234 and instead the 1100 or so bug reports became bug #1 to bug #1169.

- The new bug entries have a different set of meta-data but the ‘status’ and ‘owner’ etc seemed to get translated pretty good. The new ‘milestone’ got populated wrongly for me, but it didn’t matter much to me because I simply cleared it.

- There’s no visible way to translate from old style bug numbers to the new bug numbers. When I go to the URL for the old number it redirects me to the new bug so clearly sourceforge has created a look-up table it can use.

- There’s now a sensible public URL to point out the “home” for the curl bug tracking.

Annoying things with the new tracker:

- It splits up a the comments to a single report into several “pages” far too early and forces you to click through annoying “page 2” or “next page” links to see the latest comments.

Summary: the upgrade was totally worth it. A much better bug tracker with much more useful interfaces, both the web interface and the ability to respond to it by email etc. And still room for improvements!

internally, we’re all multi now!

libcurl internals suddenly become a lot cleaner and neater to work with when we made all code assume and work with the multi interface!

libcurl was initially created slightly after the birth of the curl tool. After the tool started to get some traction and use out in the world, requests and queries about a library with its powers started to drop in. Soon enough, in the year 2000 we shipped the first release of libcurl and it featured a synchronous API (the “easy” interface) that performs the complete operation and then returns. I think we can now say that the blocking easy interface was successful and its ease of use has been very popular and appreciated by many users.

During 2002 the need for a non-blocking API had been identified and we introduced the multi interface. The multi interface is kind of a super-set as it re-uses the same handles as is used with the easy interface, so it cleverly makes it fairly easy for a standard application to move from the easy interface to the multi.

Basically since that day, we’ve struggled in the source code structure to handle the fact that we have both a blocking and a non-blocking API. In lots of places we’ve had different code paths and choices done depending on which API that was used. It made the source code hard to follow and it occasionally introduced hard to track bugs which could lead to the multi and easy interface not behaving the same way to the underlying network or protocol behavior. It was clear very early on that it wasn’t an ideal design choice, but it was a design choice that was spread out among the code and it stuck.

During November 2012 I finally took on the code that we’ve had #ifdef’ed since around 2005 which makes the blocking easy interface operation a wrapper function around the non-blocking multi interface functions. Using this method, all internals should be considered non-blocking and there is no need left to treat things differently depending on which API that was used because everything is now multi interface == non-blocking.

On January 17th 2013 the big patch was committed. 400 added lines, 800 removed over 54 modified files…

The curl year 2012

So what did happen in the curl project during 2012?

First some basic stats

We shipped 6 releases with 199 identified bug fixes and some 40 other changes. That makes on average 33 bug fixes shipped every 61st day or a little over one bug fix done every second day. All this done with about 1000 commits to the git repository, which is roughly the same amount of git activity as 2010 and 2011. We merged commits from 72 different authors, which is a slight increase from the 62 in 2010 and 68 in 2011.

On our main development mailing list, the curl-library list, we now have 1300 subscribers and during 2012 it got about 3500 postings from almost 500 different From addresses. To no surprise, I posted by far the largest amount of mails there (847) with the number two poster being Günter Knauf who posted 151 times. Four more members posted more than 100 times: Steve Holme (145), Dan Fandrich (131), Marc Hoersken (130) and Yang Tse (107). Last year I sent 1175 mails to the same list…

Notable events

I’ve walked through the biggest changes and fixes and here are the particular ones I found stood out during this otherwise rather calm and laid back curl year. Possibly in a rough order of importance…

- We started the year with two security vulnerability announcements, regarding an SSL weakness and an injection flaw. They were reported in 2011 though and we didn’t get any further security alerts during 2012 which I think is good. Or a sign that nobody has been looking close enough…

- We got two interesting additions in the SSL backend department almost simultaneously. We got native Windows support with the use of the schannel subsystem and we got native Mac OS X support with the use of Darwin SSL. Thanks to these, we can now offer SSL-enabled libcurls on those operating systems without relying on third party SSL libraries.

- The VERIFYHOST debacle took off with “security researchers” throwing accusations and insults, ending with us releasing a curl release with the bug removed. It did however unfortunately lead to some follow-up problems in for example the PHP binding.

- During the autumn, the brokeness of WSApoll was identified, and we now build libcurl without it and as a result libcurl now works better on Windows!

- In an attempt to allow libcurl-using applications to avoid select() and its problems, we introduced the new public function curl_multi_wait. It avoids the FD_SETSIZE limit and makes it harder to screw up…

- The overly bloated User-Agent string for the curl tool was dramatically shortened when we cut out all the subsystems/libraries and their version numbers from the string. Now there’s only curl and its version number left. Nice and clean.

- In July we finally introduced metalink support in the curl tool with the curl 7.27.0 release. It’s been one of those things we’ve discussed for ages that finally came through and became reality.

- With the brand new HTTP CONNECT support in the test suite we suddenly could get much improved test cases that does SSL or just tunnel through an HTTP proxy with the CONNECT request. It of course helps us avoid regressions and otherwise improve curl and libcurl.

What didn’t happen

- I made an attempt to get the spindly hacking going, but I’ve mostly failed with that effort. I have personally not had enough time and energy to work on it, and the interest from the rest of the world seems luke warm at best.

- HTTP pipelining. Linus Nielsen Feltzing has a patch series in the works with a much improved pipelining support for libcurl. I’ll write a separate post about it once it gets in. Obviously we failed to merge it before the end of the year.

- Some of my friends like to mock me about curl not being completely IPv6 friendly due to its lack of support for Happy Eyeballs, and of course they’re right. Making curl just do two connects on IPv6-enabled machines should be a fairly small change but yet I haven’t yet managed to get into actually implementing it…

- DANE is SSL cert verification with records from DNS thanks to DNSSEC. Firefox has some experiments going and Chrome already supports it. This is a technology that truly can improve HTTPS going forwards and allow us to avoid the annoyingly weak and broken CA model…

I won’t promise that any of these will happen during 2013 but I can promise there will be efforts…

The Future

I wrote a separate post a short while ago about the HTTP2 progress, and I expect 2013 to bring much more details and discussions in that area. Will we get SRV record support soon? Or perhaps even URI records? Will some of the recent discussions about new HTTP auth schemes develop into something that will reach the internet in the coming year?

In libcurl we will switch to an internal design that is purely non-blocking with a lot of if-then-that-else source code removed for checks which interface that is used. I’ll make a follow-up post with details about that as well as soon as it actually happens.

Our Responsibility

curl and libcurl are considered pillars in the internet world by now. This year I’ve heard from several places by independent sources how people consider support by curl to be an important driver for internet technology. As long as we don’t have it, it hasn’t really reached everyone and that things won’t get adopted for real in the Internet community until curl has it supported. As father of the project it makes me proud and humble, but I also feel the responsibility of making sure that we continue to do the right thing the right way.

I also realize that this position of ours is not automatically glued to us, we need to keep up the good stuff to make it stick.

HTTP2, SPDY and spindly right now

On November 28, the HTTPbis group within the IETF published the first draft for the upcoming HTTP2 protocol. What is being posted now is a start and a foundation for further discussions and changes. It is basically an import of the SPDY version 3 protocol draft.

On November 28, the HTTPbis group within the IETF published the first draft for the upcoming HTTP2 protocol. What is being posted now is a start and a foundation for further discussions and changes. It is basically an import of the SPDY version 3 protocol draft.

There’s been a lot of resistance within the HTTPbis to the mandated TLS that SPDY has been promoting so far and it seems unlikely to reach a consensus as-is. There’s also been a lot of discussion and debate over the compression SPDY uses. Not only because of the pre-populated dictionary that might already be a little out of date or the fact that gzip compression consumes a notable amount of memory per stream, but also recently the security aspect to compression thanks to the CRIME attack.

Meanwhile, the discussions on the spdy development list have brought up several changes to the version 3 that are suggested and planned to become part of the version 4 that is work in progress. Including a new compression algorithm, shorter length fields (now 16bit) and more. Recently discussions have brought up a need for better flexibility when it comes to prioritization and especially changing prio run-time. For like when browser users switch tabs or simply scroll down the page and you rather have the images you have in sight to load before the images you no longer have in view…

I started my work on Spindly a little over a year ago to build a stand-alone library, primarily intended for libcurl so that we could soon offer SPDY downloads for it. We’re still only on SPDY protocol 2 there and I’ve failed to attract any fellow developers to the project and my own lack of time has basically made the project not evolve the way I wanted it to. I haven’t given up on it though. I hope to be able to get back to it eventually, very much also depending on how the HTTPbis talk goes. I certainly am determined to have libcurl be part of the upcoming HTTP2 experiments (even if that is not happening very soon) and spindly might very well be the infrastructure that powers libcurl then.

We’ll see…

libcurl claimed to be dangerous

On October 24th, my twitter feed suddenly got more activity than usual when suddenly there’s a mention of a newly(?) published paper:

The most dangerous code in the world: validating SSL certificates in non-browser software

Within the twelve page document they discuss flaws in various APIs and other certificate checking software, and for libcurl they say:

Internally, it uses OpenSSL to verify the chain of trust and verifies the hostname itself. This functionality is controlled by parameters CURLOPT_SSL_VERIFYPEER (default value: true) and CURLOPT_SSL_VERIFYHOST (default value: 2). This interface is almost perversely bad. The VERIFYPEER parameter is a boolean, while a similar-looking VERIFYHOST parameter is an integer.

(The fact that libcurl supports no less than nine(!) different SSL library backends seems to have been ignored but is irrelevant.)

The final part is their focus. It is an integer option but it looks like it could be similar to the VERIFYPEER option which could be considered a boolean option – but note that there is no boolean options at all in libcurl, those are all “long” values. They go on to explain:

Well-intentioned developers not only routinely misunderstand these parameters, but often set CURLOPT_SSL_VERIFY HOST to TRUE, thereby changing it to 1 and thus accidentally disabling hostname verification with disastrous consequences

They back up their claim with some snippets from PHP programs showing wrong use in chapter 7.

What did the authors do to try to fix the problem before posting rude comments in a report? Nothing. At. All. They could’ve emailed, tweeted or posted a bug report or patch but none of that happened.

They also only post examples of the bad use made by PHP code. The PHP code uses the PHP/CURL binding and a change could easily be done in the PHP binding. I don’t know PHP internals, but perhaps the option could be made to not accept a boolean value instead of a numerical there.

We’re now discussing this topic on the libcurl mailing list. If you have ideas or suggestions or just comments, feel free to join in!

Oh, and I feel that my recent blog post on the non-verifying users seems related and relevant.

I will also call the majority of all these suddenly appearing complainers on this API to be mostly hypocrites since the API has been established and working like this for over a 10 (ten!) years and not a single person has objected to it before. Joining up on the “bandwagon” now and calling the API stupid or silly is… well, I’d call it “non-intelligent behavior”. In libcurl we take a stable and solid API and ABI very seriously. We simply do not break API nor ABI unless forced brutally into a corner we can’t escape otherwise. Therefore we have kept this API to keep existing applications functional.

Update: the discussion thread on the topic from the PHP-DEV list. Thanks to Jan Ehrhardt.

Second update: we shipped libcurl 7.28.1 on November 20 2012, and it no longer accepts the value 1 to VERIFYHOST, but will instead cause curl_easy_setopt() return an error and use the default value (which is 2). This will prevent applications to accidentally be insecure due to use of 1.

WSAPoll is broken

Microsoft admits the WSApoll function is broken but won’t do anything about it. Unless perhaps if customers keep nagging them.

Doing portable socket programming has always meant using a bunch of #ifdefs and similar. A program needs to be built on many systems and slowly get adjusted to work really well all over. For ages, for example, Windows only supported select() and not poll() while all sensible systems[*] out there supported poll(). There are several reasons to prefer poll to select when writing code.

Then one day in 2006, Chad Charlin, a developer at Microsoft wrote the following when talking about the new WSApoll() function they introduced in Windows Vista:

Among the many improvements to the Winsock API shipping in Vista is the new WSAPoll function. Its primary purpose is to simplify the porting of a sockets application that currently uses poll() by providing an identical facility in Winsock for managing groups of sockets.

Great! Starting September 2006 curl started using it (shipped in the release curl and libcurl 7.16.0). It seemed like a huge step forward, and as Chad wrote:

If you have experience developing applications using poll(), WSAPoll will be very familiar. It is designed to behave just like poll().

Emphasis added by me. It was (of course) made to work like poll, and that’s why the API is made like that. Why would you introduce something that is almost like poll() except in minor details?

Since the new function only was available in Vista and later, it took a while until libcurl users in a more wider scale got to use it but over time Windows XP users are slowly shifting away and more and more libcurl Windows users therefore use the WSApoll based builds. Life seemed to be good. Some users noticed funny things and reported bugs we couldn’t repeat (on other platforms) but nothing really stood out and no big alarm bells went off.

During July 2012, a user of libcurl on Windows, Jan Koen Annot experienced such problems and he didn’t just sigh and move on. He rolled up his sleeves and decided to get to the bottom. Perhaps he could fix a bug or two while at it? (It seems reasonable that he thought so, I haven’t actually asked him!) What he found was however not a bug in libcurl. He found out that WSApoll did indeed not work like poll (his initial post to curl-library on the problem)! On August 1st he submitted a support issue to Microsoft about it. On August 7 we pushed the commit to curl that removed our use of WSApoll.

A few days go Jan reported back on how the case has gone, where his journey down the support alleys took him.

It turns out Microsoft already knew about this bug, which they apparently have named “Windows 8 Bugs 309411 – WSAPoll does not report failed connections”. The ticket has been resolved as Won’t Fix… (I haven’t found any public access of this.)

Jan argued for the case that since WSApoll is designed and used as a plain poll() replacement it would make sense to actually make it also work the same way:

First, it will cost much time to find out that some ‘real-life’ issue can be traced back to this WSAPoll bug. In my case we were lucky to have a regression test which triggered when we started using a slightly different cURL-library configuration on Windows. Tracing back that the test was triggered because of this bug in WSAPoll took several hours. Imagine what it would cost, if some customer in the field reported annoying delays, to trace such a vague complaint back to a bug in the WSAPoll function!

Second, even if we know beforehand about this bug in WSAPoll, then it is difficult to determine in which situations in your code you can safely use WSAPoll and in which situations you might suffer from this bug. So a better recommendation would be to simply not use WSAPoll. (…)

Third, porting code which uses the poll() function to the Windows sockets API is made more complex. The introduction of WSAPoll was meant specifically for this, so it should have compatible behavior, without a recommendation to not use it in certain circumstances.

Fourth, your recommendation will only have effect when actively promoted to developers using WSAPoll. A much better approach would be to repair the bug and publish an update. Microsoft has some nice mechanisms in place for that.

So, my conclusion is that, even if in our case the business impact may be low because we found the bug in an early stage, it is still important that Microsoft fixes the bug and publishes an update.

In my eyes all very good and sensible arguments. Perhaps not too surprisingly, these fine reasons didn’t have any particular impact on how Microsoft views this old and known bug that “has been like this forever and people are already used to it.”. It will remain closed, and Microsoft motivated this decision to Jan quite clearly and with arguments one can understand:

A discussion has been conducted around this topic and the taken decision was not to have the fix implemented due to the following reasons:

- This issue since Vista

- no other Microsoft customer has asked for a Hotfix since Vista timeframe

- fixing this old issue might have some application compatibility risk (for those customers who might have somehow taken a dependency on WSAPoll failing with a timeout in the cases of connect failure as opposed to POLLERR).

- This API will become more irrelevant as the Windows versions increase; the networking APIs will move away from classic select/poll to more advanced I/O completion mechanisms.

Argument one and two are really weak and silly. Microsoft users are very rarely complaining to Microsoft and most wouldn’t even know how to do it. Also, this problem may certainly still affect many users even if nobody has asked for a fix.

The compatibility risk is a valid point, but that’s a bit of a hard argument to have. All bugs that are about behavior will of course risk that users have adapted to the wrong behavior so a bug fix may break those. All of us who write and maintain stable APIs are used to this problem, but sticking to the buggy way of working because it has been doing this for so long is in my eyes only correct if you document this with very large letters and emphasis in all documentation: WSApoll is not fully emulating poll – beware!

The fact that they will focus more on other APIs is also understandable but besides the point. We want reliable APIs that work as documented. Applications that are Windows-only probably already very rarely use WSApoll, it will probably remain being more important for porting socket style programs to Windows.

Jan also especially highlights a funny line from this Microsoft person:

The best way to add pressure for a hotfix to be released would be to have the customers reporting it again on http://connect.microsoft.com.

Okay, so even if they have motives why they won’t fix this bug they seem to hint that if more customers nag them about it they might change their minds. Fair enough. But the users of libcurl who for five years perhaps experienced funny effects are extremely unlikely to ever report and complain to Microsoft about this. They are way more likely to complain to us, or possibly to just work around the issue somehow.

Of course, users of WSApoll can adapt to the differences and make conditional code that handles them and that could be what we end up with in the curl project in the future if we just get volunteers to adapt the code accordingly. In the mean time we’ve just reverted to the old select()-using code instead, since select() does in fact mimic the “real” select much better…

[*] = clearly Mac OS X is not a sensible system since its poll() implementation is even worse than Windows and is mostly broken or just unreliable. Subject for another blog post another time.

Update

In 2023, a user made me aware that the Microsoft documentation now says:

Note As of Windows 10 version 2004, when a TCP socket fails to connect, (POLLHUP | POLLERR | POLLWRNORM) is indicated.

Maybe it is time to do new tests.