Joel Åsblom works as a “technical writer” at the Swedish “IT magazine” consortium IDG. He was assigned the job of interviewing Richard M Stallman while Stallman was still in Stockholm after his talk at the foss-sthlm event. I had been mailing with another IDG guy (Sverker Brundin) on and off for weeks before this day to try to coordinate a time and place for this interview.

During this time, I forwarded the “usual” requests from RMS himself about how the writer should read up on the facts, the background and history behind Free Software, the GNU project and more. The recommended reading includes a lot of good info. My contact assured me that they knew this stuff and that they had interviewed Mr. Stallman before.

On that November day after the Stockholm talk, Roger Sinel had volunteered to drive Richard around the city in his car, so he was also present in the IDG offices when Joel interviewed RMS. Roger recorded the entire interview on his phone. I’ve listened to the complete interview. You can too: part one as mp3 and ogg, and part two as mp3 and ogg. Roughly an hour of playback time altogether.

The day after the interview, Joel posted a blog entry on the computersweden.se blog (in Swedish) which not only showed disrespect towards his interviewee, but also proved that Joel had understood very little of Stallman’s views, or perhaps misrepresented them on purpose. Joel’s blog post, translated to English:

Yesterday I got an exclusive interview with legend Richard Stallman, who in the mid 80’s, published his GNU Manifesto on thoughts of a free operating system that would be compatible with Unix. Since then he has traveled the world with his insistent message that it is a crime against humanity to charge for the program.

As the choleric personality he is, I got the interview once I’ve made a sacred promise to never (at least in this interview) write only Linux but also add Gnu before each reference to this operating system. He thinks that his beloved GNU (a recursive acronym for GNU is Not Unix) is the basis of Linux in 1991 and thus should be mentioned in the same breath.

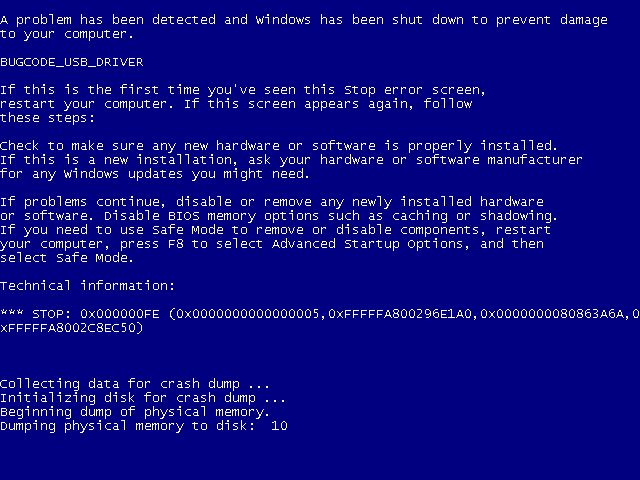

Another strange thing is that this man who KTH and a whole lot of other colleges have appointed an honorary doctorate has such a difficulty to understand the realities of the labor market. During the interview, I take notes on a computer running Windows, which makes him get really upset. He would certainly never condescend to work in an office where he could not run a computer that contains nothing but free software. I try to explain to him that the vast majority of office slaves depend on quite a few programs that are linked to mission-critical systems that are only available for Windows. No, Stallman insists that we must dare to stand up for our rights and not let ourselves be guided by others.

Again and again he returns to the subject that software licensing is a crime against humanity and completely ignores the argument that someone who has done a great job on designing programs also should be able to live from this.

The question then is whether the man is drugged. Yes, I actually asked if he (as suggested in some places) uses marijuana. This is because he has propagated for the drug to be allowed to get used in war veteran wellness programs. The answer is that he certainly think that cannabis should be legalized, but that he has stopped using the drug.

Joel confuses freedom with price: RMS never denies anyone the right to charge for programs. Joel belittles the importance of GNU in a modern Linux system. He calls RMS “choleric”. He claims you cannot earn money on Free Software (maybe he needs to talk to some of the Linux kernel hackers) and he seems to think that Windows is crucial to office workers. Software licenses a crime against humanity? From the person who has authored several very widely used software licenses?

The final part about the drugs is just plain rude.

During the interview, Joel mentions several times that he is using Ubuntu at home (and Stallman explains that it is one of the non-free GNU/Linux systems). It is an excellent proof that just because someone is using a Linux-based OS, they don’t have to know one iota or care the slightest about some of the values and ethics that lie behind its creation.

In the end it leaves you wondering if Joel wrote this crap deliberately or just out of ignorance. It is hard to see how you can actually miss the point to this extent. It is just more proof of what kind of business IDG is.

The reaction

Ok, so I felt betrayed and badly treated by IDG as I had helped them get this interview. I emailed Sverker and Joel with my complaints and I pointed out the range of errors and faults in this “blogpost”. I know others did too, and RMS himself of course wasn’t too thrilled with seeing yet another article with someone completely missing the point and putting words into his mouth that he never said and that he doesn’t stand for.

During the weekend I discussed this at FSCONS with friends and there were a lot of head-shakes, sighs and rolling eyes.

The two writers both responded to my harsh criticisms and brushed them off, claiming you can have different views on free vs gratis and so on, and both said something along the lines of “but wait for the real article”. Ok, so I held off this blog post until the “real article”.

The real article

Stallman – geni och kolerisk agitator, which is supposedly the real article, was posted on November 15th. It basically changed nothing at all. The same flaws are there: none of the complaint mails and friendly efforts to help them straighten out the facts had any effect. I would say the most fundamental flaws are:

With opinions that it is a crime against humanity to charge for software Richard Stallman has made many enemies at home. In South America, he has more friends, some of which are presidents whom he persuaded to join the road to free source code.

Joel claims RMS says you can’t charge for software. The truth is that he repeatedly and emphatically says that free software means free as in freedom; it does not necessarily mean gratis. Listen to the interview: he said this clearly this time as well. And he says so every time he gives a public talk.

Richard Stallman is also the founder of the Free Software Foundation, and his big show-piece is the fight against everything regarding software licenses.

Joel claims he has a “fight against everything regarding software licenses”. That’s so stupid I don’t know where to begin. The article itself even has a little box next to it describing how RMS wrote the GPL license etc. RMS is behind some of the most used software licenses in the world.

The fact that Joel tries to imply that Free Software is mostly a South American affair is just proof that this magazine (and writer) has no idea about, for example, the impact of Linux and GNU/Linux in just about all software areas except desktops.

My advice

All this serves as both proof and a warning: please friends, approach this behemoth known as IDG with utmost care and be sure that they will not understand what you’re talking about if you’re not in their mainstream territory. They will deliberately write crap about you, even after having been told about errors and mistakes. Out of spite or just plain stupidity, I’m not sure.

[I deliberately chose not to include the full article translated to English here since it is mostly repetition.]

I’ve been an open source and free software hacker, contributor and maintainer for almost 20 years. I’m the perfect stereo-type too: a white, hetero, 40+ years old male living in a suburb of a west European city. (I just lack a beard.) I’ve done more than 20,000 commits in public open source code repositories. In the projects I maintain, and have a leading role in, and for the sake of this argument I’ll limit the discussion to