The HTTP  workshop series is back for a third time this northern hemisphere summer. The selected location for the 2017 version is London and this time we’re down to a two-day event (we seem to remove a day every year)…

workshop series is back for a third time this northern hemisphere summer. The selected location for the 2017 version is London and this time we’re down to a two-day event (we seem to remove a day every year)…

Nothing in this blog entry is a quote to be attributed to a specific individual but they are my interpretations and paraphrasing of things said or presented. Any mistakes or errors are all mine.

At 9:30 this clear Monday morning, 35 persons sat down around a huge table in a room in the Facebook offices. Most of us are the same familiar faces that have already participated in one or two HTTP workshops, but we also have a set of people this year who haven’t attended before. Getting fresh blood into these discussions is certainly valuable. Most major players are represented, including Mozilla, Google, Facebook, Apple, Cloudflare, Fastly, Akamai, HA-proxy, Squid, Varnish, BBC, Adobe and curl!

Mark (independent, co-chair of the HTTP working group as well as the QUIC working group) kicked it all off with a presentation on quic and where it is right now in terms of standardization and progress. The upcoming draft-04 is becoming the first implementation draft even though the goal for interop is set basically at handshake and some very basic data interaction. The quic transport protocol is still in a huge flux and things have not settled enough for it to be interoperable right now to a very high level.

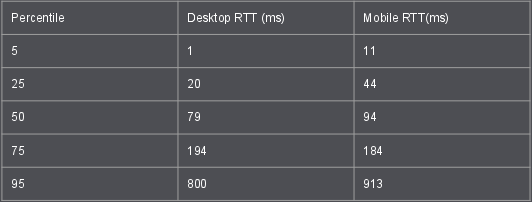

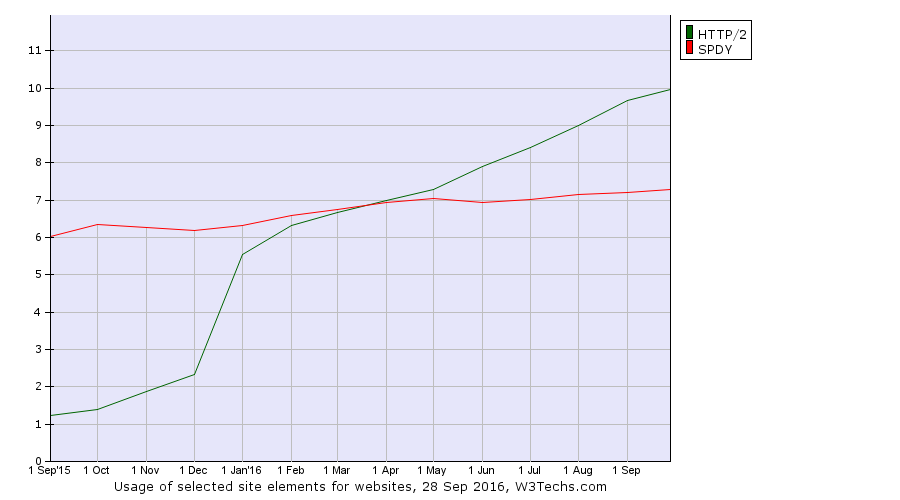

Jana from Google presented on quic deployment over time and how it right now uses about 7% of internet traffic. The Android Youtube app’s switch to QUIC last year showed a huge bump in usage numbers. Quic is a lot about reducing latency and numbers show that users really do get a reduction. By that nature, it improves the situation best for those who currently have the worst connections.

It doesn’t solve first world problems, this solves third world connection issues.

The currently observed 2x CPU usage increase for QUIC connections as compared to h2+TLS is mostly blamed on the Linux kernel which apparently is not at all up for this job as good is should be. Things have clearly been more optimized for TCP over the years, leaving room for improvement in the UDP areas going forward. “Making kernel bypassing an interesting choice”.

observed 2x CPU usage increase for QUIC connections as compared to h2+TLS is mostly blamed on the Linux kernel which apparently is not at all up for this job as good is should be. Things have clearly been more optimized for TCP over the years, leaving room for improvement in the UDP areas going forward. “Making kernel bypassing an interesting choice”.

Alan from Facebook talked header compression for quic and presented data, graphs and numbers on how HPACK(-for-quic), QPACK and QCRAM compare when used for quic in different networking conditions and scenarios. Those are the three current header compression alternatives that are open for quic and Alan first explained the basics behind them and then how they compare when run in his simulator. The current HPACK version (adopted to quic) seems to be out of the question for head-of-line-blocking reasons, the QCRAM suggestion seems to run well but have two main flaws as it requires an awkward layering violation and an annoying possible reframing requirement on resends. Clearly some more experiments can be done, possible with a hybrid where some QCRAM ideas are brought into QPACK. Alan hopes to get his simulator open sourced in the coming months which then will allow more people to experiment and reproduce his numbers.

Hooman from Fastly on problems and challenges with HTTP/2 server push, the 103 early hints HTTP response and cache digests. This took the discussions on push into the weeds and into the dark protocol corners we’ve been in before and all sorts of ideas and suggestions were brought up. Some of them have been discussed before without having been resolved yet and some ideas were new, at least to me. The general consensus seems to be that push is fairly complicated and there are a lot of corner cases and murky areas that haven’t been clearly documented, but it is a feature that is now being used and for the CDN use case it can help with a lot more than “just an RTT”. But is perhaps the 103 response good enough for most of the cases?

The discussion on server push and how well it fares is something the QUIC working group is interested in, since the question was asked already this morning if a first version of quic could be considered to be made without push support. The jury is still out on that I think.

ekr from Mozilla spoke about TLS 1.3, 0-RTT, how the TLS 1.3 handshake looks like and how applications and servers can take advantage of the new 0-RTT and “0.5-RTT” features. TLS 1.3 is already passed the WGLC and there are now “only” a few issues pending to get solved. Taking advantage of 0RTT in an HTTP world opens up interesting questions and issues as HTTP request resends and retries are becoming increasingly prevalent.

Next: day two.

QUIC is primarily designed to send and receive HTTP/2 frames and entire streams over UDP (not only, but this is where the bulk of the work has been put in so far). Sure, TLS encrypted and everything, but my point here is that it is being designed to transfer

QUIC is primarily designed to send and receive HTTP/2 frames and entire streams over UDP (not only, but this is where the bulk of the work has been put in so far). Sure, TLS encrypted and everything, but my point here is that it is being designed to transfer

At 5pm we rounded off another fully featured day at the

At 5pm we rounded off another fully featured day at the