The third day of the QUIC interim passed and now that meeting has ended. It continued to work very well to attend from remote and the group manged to plow through an extensive set of issues. A lot of consensus was achieved and I personally now have a much better feel for the protocol and many of its details thanks to the many discussions.

The drafts are still a bit too early for us to start discussing inter-op for real. But there were mentions and hopes expressed that maybe maybe we might start to see some of that by mid 2017. When we did HTTP/2, we had about 10 different implementations by the time draft-04 was out. I suspect we will see a smaller set for QUIC simply because of it being much more complex.

The next interim is planned to occur in the beginning of June in Europe.

There is an official QUIC logo being designed, but it is not done yet so you still need to imagine one placed here.

QUIC needs HTTP/2 needs HTTP/1

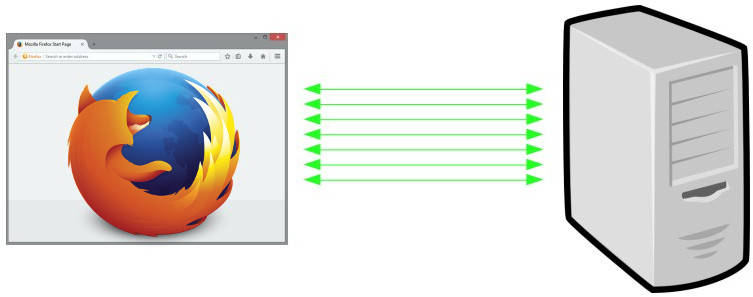

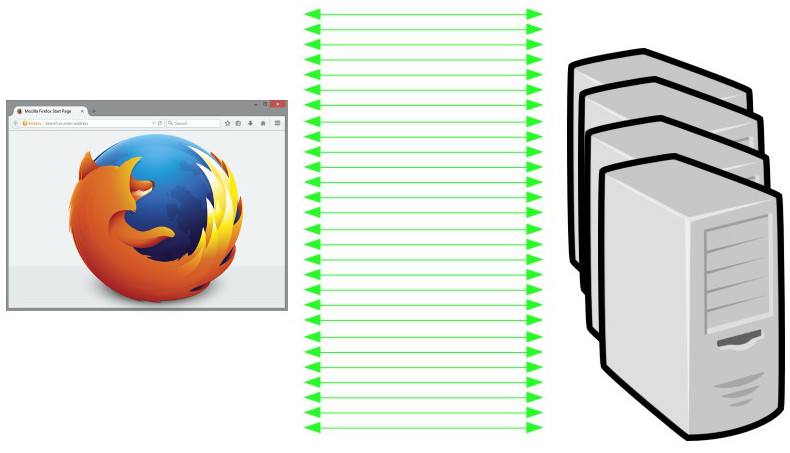

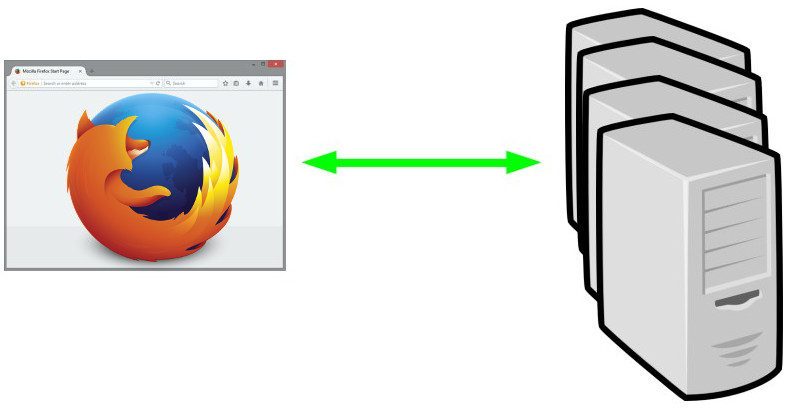

QUIC is primarily designed to send and receive HTTP/2 frames and entire streams over UDP (not only, but this is where the bulk of the work has been put in so far). Sure, TLS encrypted and everything, but my point here is that it is being designed to transfer HTTP/2 frames. You remember how HTTP/2 is “just a new framing” layer that changes how HTTP is sent over the wire, but when “decoded” again in the receiving end it is in most important aspects still HTTP/1 there. You have to implement most of a HTTP/1 stack in order to support HTTP/2. Now QUIC adds another layer to that. QUIC is a new way to send HTTP/2 frames over the network.

QUIC is primarily designed to send and receive HTTP/2 frames and entire streams over UDP (not only, but this is where the bulk of the work has been put in so far). Sure, TLS encrypted and everything, but my point here is that it is being designed to transfer HTTP/2 frames. You remember how HTTP/2 is “just a new framing” layer that changes how HTTP is sent over the wire, but when “decoded” again in the receiving end it is in most important aspects still HTTP/1 there. You have to implement most of a HTTP/1 stack in order to support HTTP/2. Now QUIC adds another layer to that. QUIC is a new way to send HTTP/2 frames over the network.

A QUIC stack needs to handle most aspects of HTTP/2!

Of course, there are notable differences and changes to some underlying principles that makes QUIC a bit different. It isn’t exactly HTTP/2 over secure UDP. Let me give you a few examples…

Streams are more independent

Packets sent over the wire with UDP are independent from each other to a very large degree. In order to avoid Head-of-Line blocking (HoL), packets that are lost and re-transmitted will only block the particular streams to which the lost packets belong. The other streams can keep flowing, unaware and uncaring.

Thanks to the nature of the Internet and how packets are handled, it is not unusual for network packets to arrive in a slightly different order than they were sent, even when they aren’t exactly “lost”.

So, streams in HTTP/2 were entirely synced and the order the sender of frames use, will be the exact same order in which the frames arrive in the other end. Packet loss or not.

In QUIC, individual frames and entire streams may arrive in the receiver in a different order than what was used in the sender.

Stream ID gaps means open

When receiving a QUIC packet, there’s basically no way to know if there are packets missing that were intended to arrive but got lost and haven’t yet been re-transmitted.

If a frame is received that uses the new stream ID N (a stream not previously seen), the receiver is then forced to assume that all the other streams ID from our previously highest ID to N are all just missing and will arrive soon. They are then presumed to exist!

In HTTP/2, we could handle gaps in stream IDs much differently because of TCP. Then a gap is known to be deliberate.

Some h2 frames are done by QUIC

Since QUIC is designed with streams, flow control and more and is used to send HTTP/2 frames over them, some of the h2 frames aren’t needed but are instead handled by the transport layer within QUIC and won’t show up in the HTTP/2 layer.

HPACK goes QPACK?

HPACK is the header compression system used in HTTP/2. Among other things it features a dictionary that you manipulate with instructions and then subsequent header frames can refer to those dictionary indexes instead of sending the full header. Header frame one says “insert my user-agent string” and then header frame two can refer back to the index in the dictionary for where that identical user-agent string is stored.

Due to the out of order streams in QUIC, this dictionary treatment is harder. The second header frame could arrive before the first, so if it would refer to an index set in the first header frame, it would have to block the entire stream until that first header arrives.

HPACK also has a concept of just adding things to the dictionary without specifying the index, and since both sides are in perfect sync it works just fine. In QUIC, if we want to maintain the independence of streams and avoid blocking to the highest degree, we need to instead specify exact indexes to use and not assume perfect sync.

This (and more) are reasons why QPACK is being suggested as a replacement for HPACK when HTTP/2 header frames are sent over QUIC.

The companies with most attendees present here were: Mozilla 5, Google 4, Facebook, Akamai and Apple 3.

The companies with most attendees present here were: Mozilla 5, Google 4, Facebook, Akamai and Apple 3.

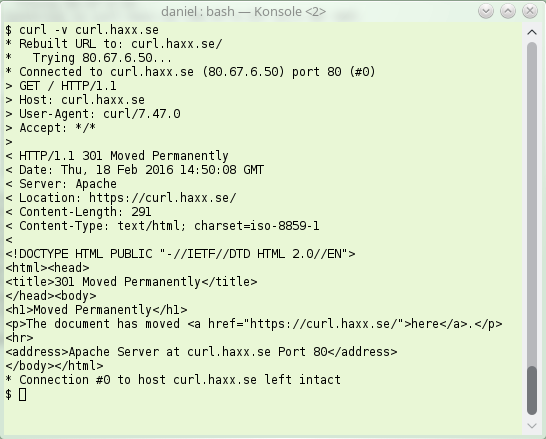

erver sending back an instruction to the client – instead of giving back the contents the client wanted. The server basically says “go look over [here] instead for that thing you asked for“.

erver sending back an instruction to the client – instead of giving back the contents the client wanted. The server basically says “go look over [here] instead for that thing you asked for“.