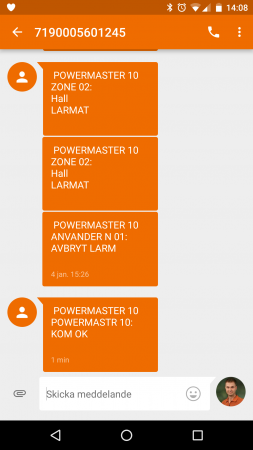

My phone just lit up. POWERMASTER 10 told me something. It said “POWERMASTR 10: KOM OK”.

Over the last few months, I’ve received almost 30 weird text messages from a “POWERMASTER 10”, originating from a Swedish phone number in a number range reserved for “devices”. Yeps, I’m showing the actual number in the screenshot below because I don’t think it matters, and in the unlikely event that the owner of +467190005601245 sees this, he/she might want to change his/her alarm config.

Powermaster 10 is probably a house alarm control panel made by Visonic. It is also clearly localized and sends messages in Swedish.

As this has been going on for months already, one can only suspect that the user hasn’t found the SMS feedback to be a particularly valuable feature. It also makes me wonder what the feedback it sends really means.

The upside of this story is that you seem to be a very happy person when you have one of these control panels, as this picture from their booklet shows. Alarm systems, control panels, text messages. Why wouldn’t you laugh?!

Edit: I contacted Telenor about this after my initial blog post, but they simply refused to do anything since I’m not the customer. They just didn’t want to understand that I only wanted them to tell their customer that they’re doing something wrong. The messages kept coming at irregular intervals until July 2018.

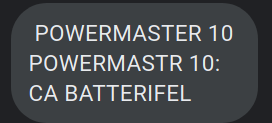

Update, September 8, 2020: I got another text today (it’s been silent since September 26, 2019). The Swedish text in this message translates to “battery error”.

Update, August 15, 2021: Still happening.
