In this endless stream of frequent releases, the next release isn’t terribly different from the previous.

curl’s 167th release is called 7.55.0 and while the name or number isn’t standing out in any particular way, I believe this release has a few extra bells and whistles that make it stand out a little from the regular curl releases, feature-wise. Hopefully this will turn out to be a release that becomes the new “you should at least upgrade to this version” in the coming months and years.

Here are six things in this release I consider worthy of some special attention. (The full changelog.)

1. Headers from file

The command line options that allow users to pass on custom headers (--header and --proxy-header) can now read a set of headers from a given file.

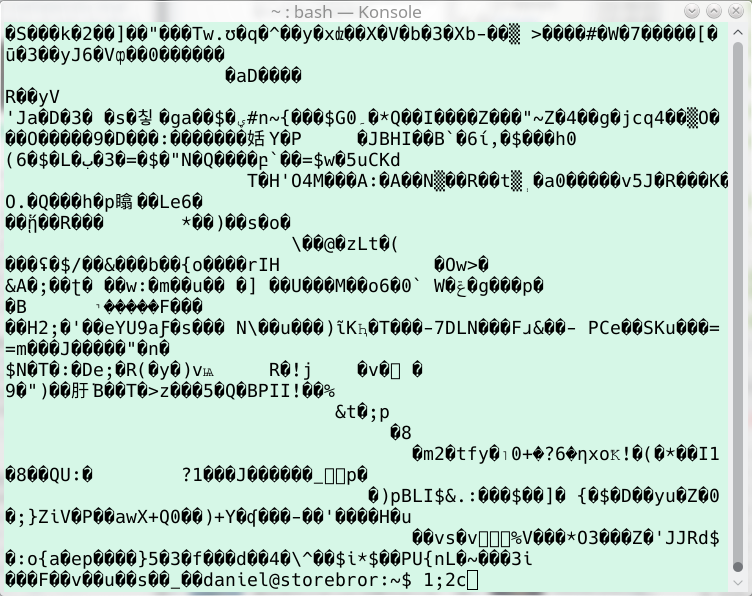
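As a rough illustration of the file layout, here is a small Python sketch that writes one header per line and builds a curl command line that uses the @ prefix to read them. The helper names, the file name and the example.com URL are made up for this example; only the -H @file syntax comes from curl itself.

```python
import tempfile
from pathlib import Path


def write_header_file(path, headers):
    """Write one "Name: value" header per line (hypothetical helper)."""
    lines = [f"{name}: {value}" for name, value in headers.items()]
    Path(path).write_text("\n".join(lines) + "\n", encoding="utf-8")


def curl_command(url, header_file):
    """Build the argument list; the leading @ asks -H to read from a file."""
    return ["curl", "-H", f"@{header_file}", url]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        header_file = Path(tmp) / "headers.txt"
        write_header_file(
            header_file,
            {"X-Example": "demo", "Accept": "application/json"},
        )
        # Show the file contents and the command that would consume them.
        print(header_file.read_text(encoding="utf-8"), end="")
        print(" ".join(curl_command("https://example.com/", header_file)))
```

The sketch only prints the command instead of running it, so it works without curl installed.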

2. Binary output prevention

Invoke curl on the command line, give it a URL to a binary file and see it destroy your terminal by sending all that gunk to the terminal? No more.
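To give a feel for how such a guard can work, here is a minimal Python sketch. It assumes a simple heuristic (a zero byte in the data means "binary") and is not curl’s actual code; the function names and the forced flag are invented for illustration. In curl itself, the way to insist on terminal output is --output -, and --output with a file name saves the data instead.

```python
def looks_binary(chunk: bytes) -> bool:
    """Assumed heuristic: a NUL byte means the data is not plain text."""
    return b"\x00" in chunk


def may_write_to_terminal(chunk: bytes, stdout_is_tty: bool, forced: bool = False) -> bool:
    """Return False when binary data would land on an interactive terminal.

    `forced` stands in for the user explicitly asking for terminal output.
    """
    if not stdout_is_tty or forced:
        # Pipes and files can take anything; so can a user who insists.
        return True
    return not looks_binary(chunk)


if __name__ == "__main__":
    png_start = b"\x89PNG\r\n\x1a\n\x00\x00\x00\rIHDR"
    for label, data in (("text", b"hello world\n"), ("png", png_start)):
        print(label, may_write_to_terminal(data, stdout_is_tty=True))
```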

3. Target independent headers

You want to build applications that use libcurl and build for different architectures, such as 32-bit and 64-bit builds, using the same installed set of libcurl headers? Didn’t use to be possible. Now it is.

4. OPTIONS * support!

Among HTTP requests, this is a rare beast. Starting now, you can tell curl to send such requests.
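For readers who have never seen one, this Python sketch builds the bytes of a minimal “OPTIONS *” request, where the asterisk replaces the usual path and refers to the server as a whole. The function name and the example.com host are placeholders. On the curl command line, the request target is set with --request-target combined with -X OPTIONS.

```python
def options_asterisk_request(host: str) -> bytes:
    """Build a minimal HTTP/1.1 "OPTIONS *" request for the given host."""
    lines = [
        "OPTIONS * HTTP/1.1",  # asterisk-form: the target is the server itself
        f"Host: {host}",
        "",  # blank line that ends the header section
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


if __name__ == "__main__":
    # Print the request with visible line breaks for inspection.
    print(options_asterisk_request("example.com").decode("ascii"))
```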

5. HTTP proxy use cleanup

Asking curl to use an HTTP proxy while doing a non-HTTP protocol would often behave in unpredictable ways since it wouldn’t do CONNECT requests unless you added an extra instruction. Now libcurl will assume CONNECT operations for all protocols over an HTTP proxy unless you use HTTP or FTP.
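The rule can be summed up in a few lines of Python. This is a simplified sketch of the behavior described above, not libcurl’s real logic, and both function names are invented for the example.

```python
def uses_connect_tunnel(scheme: str) -> bool:
    """Rule as stated above: tunnel everything except HTTP and FTP."""
    return scheme.lower() not in {"http", "ftp"}


def connect_request(host: str, port: int) -> bytes:
    """Build the CONNECT request a client sends to an HTTP proxy."""
    authority = f"{host}:{port}"
    return (
        f"CONNECT {authority} HTTP/1.1\r\n"
        f"Host: {authority}\r\n"
        "\r\n"
    ).encode("ascii")


if __name__ == "__main__":
    for scheme in ("http", "ftp", "imap", "smtp"):
        print(scheme, uses_connect_tunnel(scheme))
    print(connect_request("mail.example.com", 143).decode("ascii"))
```

Once the proxy answers the CONNECT with a success status, the connection acts as a plain tunnel to the target host and the client speaks its own protocol through it.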

6. Coverage counter

The configure script now supports the option --enable-code-coverage. We now build all commits done on GitHub with it enabled, run a bunch of tests and measure the test coverage data it produces: how large a share of our source code is exercised by our tests. We push all coverage data to coveralls.io.

That’s a blunt tool, but it could help us identify parts of the project that we don’t test well enough. Right now it says we have 75% coverage. While not totally bad, it’s not very impressive either.

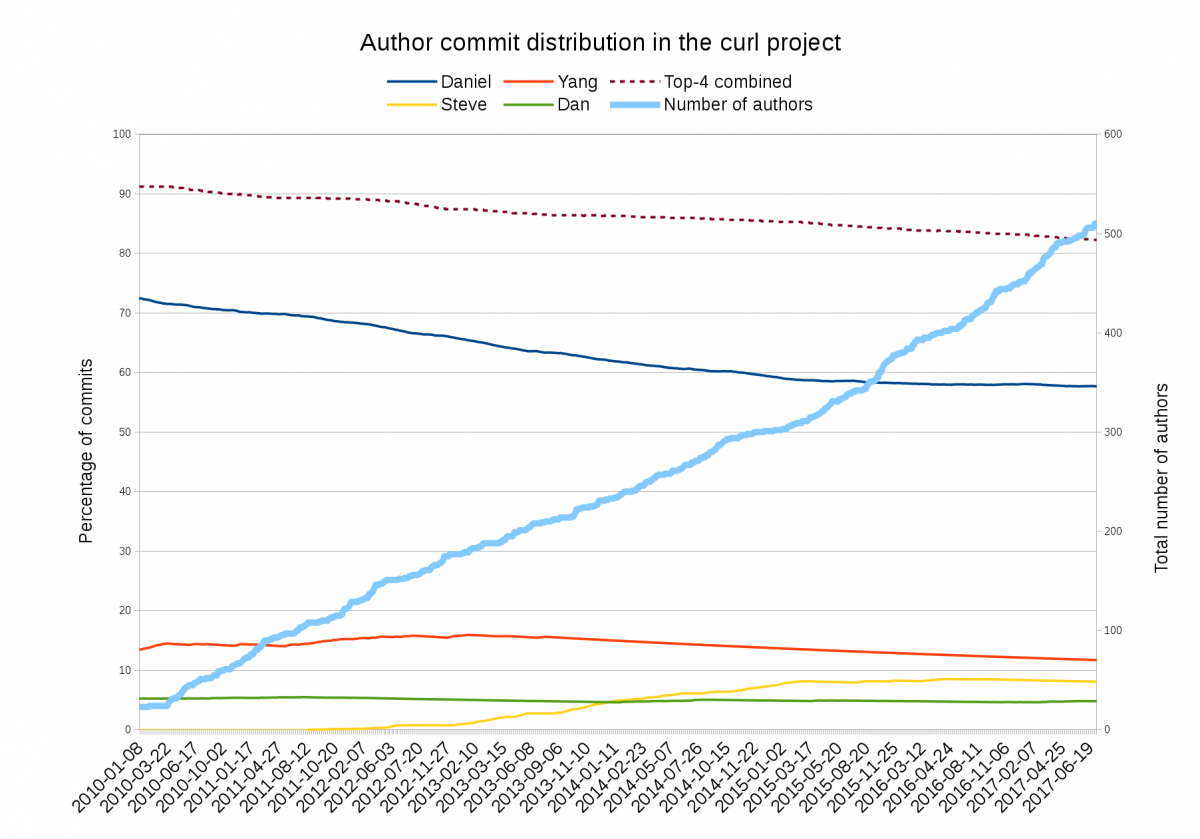

Stats

This release ships 56 days after the previous one. Exactly 8 weeks, right on schedule. 207 commits.

This release contains 114 listed bug-fixes, including three security advisories. We list 7 “changes” done (new features basically).

41 individual contributors helped make this single release. Out of this bunch, 20 were new contributors and 24 authored patches.

283 files in the git repository were modified for this release. 51 files in the documentation tree were updated, and in the library 78 files were changed: 1032 lines inserted and 1007 lines deleted. 24 test cases were added or modified.

The top 5 commit authors in this release are: