Today we celebrate the fact that it is exactly 17 years since the first public release of curl. I have always been the lead developer and maintainer of the project.

When I released that first version in the spring of 1998, we had only a handful of users and a handful of contributors. curl was just a little tool and we were still a few years out before libcurl would become a thing of its own.

The tool we had been working on for a while was still called urlget in the beginning of 1998 but as we just recently added FTP upload capabilities that name turned wrong and I decided cURL would be more suitable. I picked ‘cURL’ because the word contains URL and already then the tool worked primarily with URLs, and I thought that it was fun to partly make it a real English word “curl” but also that you could pronounce it “see URL” as the tool would display the contents of a URL.

Much later, someone (I forget who) came up with the “backronym” Curl URL Request Library which of course is totally awesome.

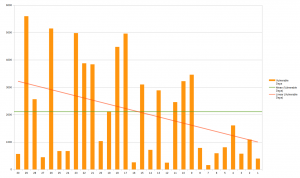

17 years are 6209 days. During this time we’ve done more than 150 public releases containing more than 2600 bug fixes!

We started out GPL licensed, switched to MPL and then landed in MIT. We started out using RCS for version control, switched to CVS and then git. But it has stayed written in good old C the entire time.

The term “Open Source” was coined 1998 when the Open Source Initiative was started just the month before curl was born, which was superseded with just a few days by the announcement from Netscape that they would free their browser code and make an open browser.

We’ve hosted parts of our project on servers run by the various companies I’ve worked for and we’ve been on and off various free services. Things come and go. Virtually nothing stays the same so we better just move with the rest of the world. These days we’re on github a lot. Who knows how long that will last…

We have grown to support a ridiculous amount of protocols and curl can be built to run on virtually every modern operating system and CPU architecture.

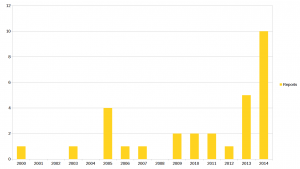

The list of helpful souls who have contributed to make curl into what it is now have grown at a steady pace all through the years and it now holds more than 1200 names.

Employments

In 1998, I was employed by a company named Frontec Tekniksystem. I would later leave that company and today there’s nothing left in Sweden using that name as it was sold and most employees later fled away to other places. After Frontec I joined Contactor for many years until I started working for my own company, Haxx (which we started on the side many years before that), during 2009. Today, I am employed by my forth company during curl’s life time: Mozilla. All through this project’s lifetime, I’ve kept my work situation separate and I believe I haven’t allowed it to disturb our project too much. Mozilla is however the first one that actually allows me to spend a part of my time on curl and still get paid for it!

The Netscape announcement which was made 2 months before curl was born later became Mozilla and the Firefox browser. Where I work now…

Future

I’m not one of those who spend time glazing toward the horizon dreaming of future grandness and making up plans on how to go there. I work on stuff right now to work tomorrow. I have no idea what we’ll do and work on a year from now. I know a bunch of things I want to work on next, but I’m not sure I’ll ever get to them or whether they will actually ship or if they perhaps will be replaced by other things in that list before I get to them.

The world, the Internet and transfers are all constantly changing and we’re adapting. No long-term dreams other than sticking to the very simple and single plan: we do file-oriented internet transfers using application layer protocols.

Rough estimates say we may have a billion users already. Chances are, if things don’t change too drastically without us being able to keep up, that we will have even more in the future.

It has to feel good, right?

I will of course point out that I did not take curl to this point on my own, but that aside the ego-boost this level of success brings is beyond imagination. Thinking about that my code has ended up in so many places, and is driving so many little pieces of modern network technology is truly mind-boggling. When I specifically sit down or get a reason to think about it at least.

Most of the days however, I tear my hair when fixing bugs, or I try to rephrase my emails to no sound old and bitter (even though I can very well be that) when I once again try to explain things to users who can be extremely unfriendly and whining. I spend late evenings on curl when my wife and kids are asleep. I escape my family and rob them of my company to improve curl even on weekends and vacations. Alone in the dark (mostly) with my text editor and debugger.

There’s no glory and there’s no eternal bright light shining down on me. I have not climbed up onto a level where I have a special status. I’m still the same old me, hacking away on code for the project I like and that I want to be as good as possible. Obviously I love working on curl so much I’ve been doing it for over seventeen years already and I don’t plan on stopping.

Celebrations!

Yeps. I’ll get myself an extra drink tonight and I hope you’ll join me. But only one, we’ll get back to work again afterward. There are bugs to fix, tests to write and features to add. Join in the fun! My backlog is only growing…

As Firefox OS uses a Linux kernel, I ended up doing the same fix for both the Firefox OS devices as for Firefox on Linux desktop: I open a socket in the

As Firefox OS uses a Linux kernel, I ended up doing the same fix for both the Firefox OS devices as for Firefox on Linux desktop: I open a socket in the