I founded the curl project early 1998 but had already then been working on the code since November 1996. The source code was always open, free and available to the world. The term “open source” actually wasn’t even coined until early 1998, just weeks before curl was born.

In the beginning of course, the first few years or so, this project wasn’t seen or discovered by many and just grew slowly and silently in a dusty corner of the Internet.

Already when I shipped the first versions I wanted the code to be open and freely available. For years I had seen the cool free software put out the in the world by others and I wanted my work to help build this communal treasure trove.

License

When I started this journey I didn’t really know what I wanted with curl’s license and exactly what rights and freedoms I wanted to give away and it took a few years and attempts before it landed.

The early versions were GPL licensed, but as I learned about resistance from proprietary companies and thought about it further, I changed the license to be more commercially friendly and to match my conviction better. I ended up with MIT after a brief experimental time using MPL. (It was easy to change the license back then because I owned all the copyrights at that point.)

To be exact: we actually have a slightly modified MIT license with some very subtle differences. The reason for the changes have been forgotten and we didn’t get those commits logged in the “big transition” to Sourceforge that we did in late 1999… The end result is that this is now often recognized as “the curl license”, even though it is in effect the MIT license.

The license says everyone can use the code for whatever purpose and nobody is required to ship any source code to anyone, but they cannot claim they wrote it themselves and the license/use of the code should be mentioned in documentation or another relevant location.

As licenses go, this has to be one of the most frictionless ones there is.

Copyright

Open source relies on a solid copyright law and the copyright owners of the code are the only ones who can license it away. For a long time I was the sole copyright owner in the project. But as I had decided to stick to the license, I saw no particular downsides with allowing code and contributors (of significant contributions) to retain their copyrights on the parts they brought. To not use that as a fence to make contributions harder.

Today, in early 2021, I count 1441 copyright strings in the curl source code git repository. 94.9% of them have my name.

I never liked how some projects require copyright assignments or license agreements etc to be able to submit code or patches. Partly because of the huge administrative burden it adds to the project, but also for the significant friction and barrier to entry they create for new contributors and the unbalance it creates; some get more rights than others. I’ve always worked on making it easy and smooth for newcomers to start contributing to curl. It doesn’t happen by accident.

Spare time

In many ways, running a spare time open source project is easy. You just need a steady income from a “real” job and sufficient spare time, and maybe a server to host stuff on for the online presence.

The challenge is of course to keep developing it, adding things people want, to help users with problems and to address issues timely. Especially if you happen to be lucky and the user amount increases and the project grows in popularity.

I ran curl as a spare time project for decades. Over the years it became more and more common that users who submitted bug reports or asked for help about things were actually doing that during their paid work hours because they used curl in a commercial surrounding – which sometimes made the situation almost absurd. The ones who actually got paid to work with curl were asking the unpaid developers to help them out.

I changed employers several times. I started my own company and worked as my own boss for a while. I worked for Mozilla on network stuff in Firefox for five years. But curl remained a spare time project because I couldn’t figure out how to turn it into a job without risking the project or my economy.

Earning a living

For many years it was a pipe dream for me to be able to work on curl as a real job. But how do I actually take the step from a spare time project to doing it full time? I give away all the code for free, and it is a solid and reliable product.

The initial seeds were planted when I met and got to know Larry (wolfSSL CEO) and some of the other good people at wolfSSL back in the early 2010s. This, because wolfSSL is a company that write open source libraries and offer commercial support for them – proving that it can work as a business model. Larry always told me he thought there was a possibility waiting here for me with curl.

Apart from the business angle, if I would be able to work more on curl it could really benefit the curl project, and then of course indirectly everyone who uses it.

It was still a step to take. When I gave up on Mozilla in 2018, it just took a little thinking before I decided to try it. I joined wolfSSL to work on curl full time. A dream came true and finally curl was not just something I did “on the side”. It only took 21 years from first curl release to reach that point…

I’m living the open source dream, working on the project I created myself.

Food for free code

We sell commercial support for curl and libcurl. Companies and users that need a helping hand or swift assistance with their problems can get it from us – and with me here I dare to claim that there’s no company anywhere else with the same ability. We can offload engineering teams with their curl issues. Up to 24/7 level!

We also offer custom curl development, debugging help, porting to new platforms and basically any other curl related activity you need. See more on the curl product page on the wolfSSL site.

curl (mostly in the shape of libcurl) runs in ten billion installations: some five, six billion mobile phones and tablets – used by several of the most downloaded apps in existence, in virtually every website and Internet server. In a billion computer games, a billion Windows machines, half a billion TVs, half a billion game consoles and in a few hundred million cars… curl has been made to run on 82 operating systems on 22 CPU architectures. Very few software components can claim a wider use.

“Isn’t it easier to list companies that are not using curl?”

Wide use and being recognized does not bring food on the table. curl is also totally free to download, build and use. It is very solid and stable. It performs well, is documented, well tested and “battle hardened”. It “just works” for most users.

Pay for support!

How to convince companies that they should get a curl support contract with me?

Paying customers get to influence what I work on next. Not only distant road-mapping but also how to prioritize short term bug-fixes etc. We have a guaranteed response-time.

You get your issues first in line to get fixed. Customers also won’t risk getting their issues added the known bugs document and put in the attic to be forgotten. We can help customers make sure their application use libcurl correctly and in the best possible way.

I try to emphasize that by getting support from us, customers can take away some of those tasks from their own engineers and because we are faster and better on curl related issues, that is a pure net gain economically. For all of us.

This is not an easy sell.

Sure, curl is used by thousands of companies everywhere, but most of them do it because it’s free (in all meanings of the word), functional and available. There’s a real challenge in identifying those that actually use it enough and value the functionality enough that they realize they want to improve their curl foo.

Most of our curl customers purchased support first when they faced a complicated issue or problem they couldn’t fix themselves – this fact gives me this weird (to the wider curl community) incentive to not fix some problems too fast, because it then makes it work against my ability to gain new customers!

We need paying customers for this to be sustainable. When wolfSSL has a sustainable curl business, I get paid and the work I do in curl benefits all the curl users; paying as well as non-paying.

Dual license

There’s clearly business in releasing open source under a strong copyleft license such as GPL, and as long as you keep the copyrights, offer customers to purchase that same code under another more proprietary- friendly license. The code is still open source and anyone doing totally open things can still use it freely and at no cost.

We’ve shipped tiny-curl to the world licensed under GPLv3. Tiny-curl is a curl branch with a strong focus on the tiny part: the idea is to provide a libcurl more suitable for smaller systems, the ones that can’t even run a full Linux but rather use an RTOS.

Consider it a sort of experiment. Are users interested in getting a smaller curl onto their products and are they interested in paying for licensing. So far, tiny-curl supports two separate RTOSes for which we haven’t ported the “normal” curl to.

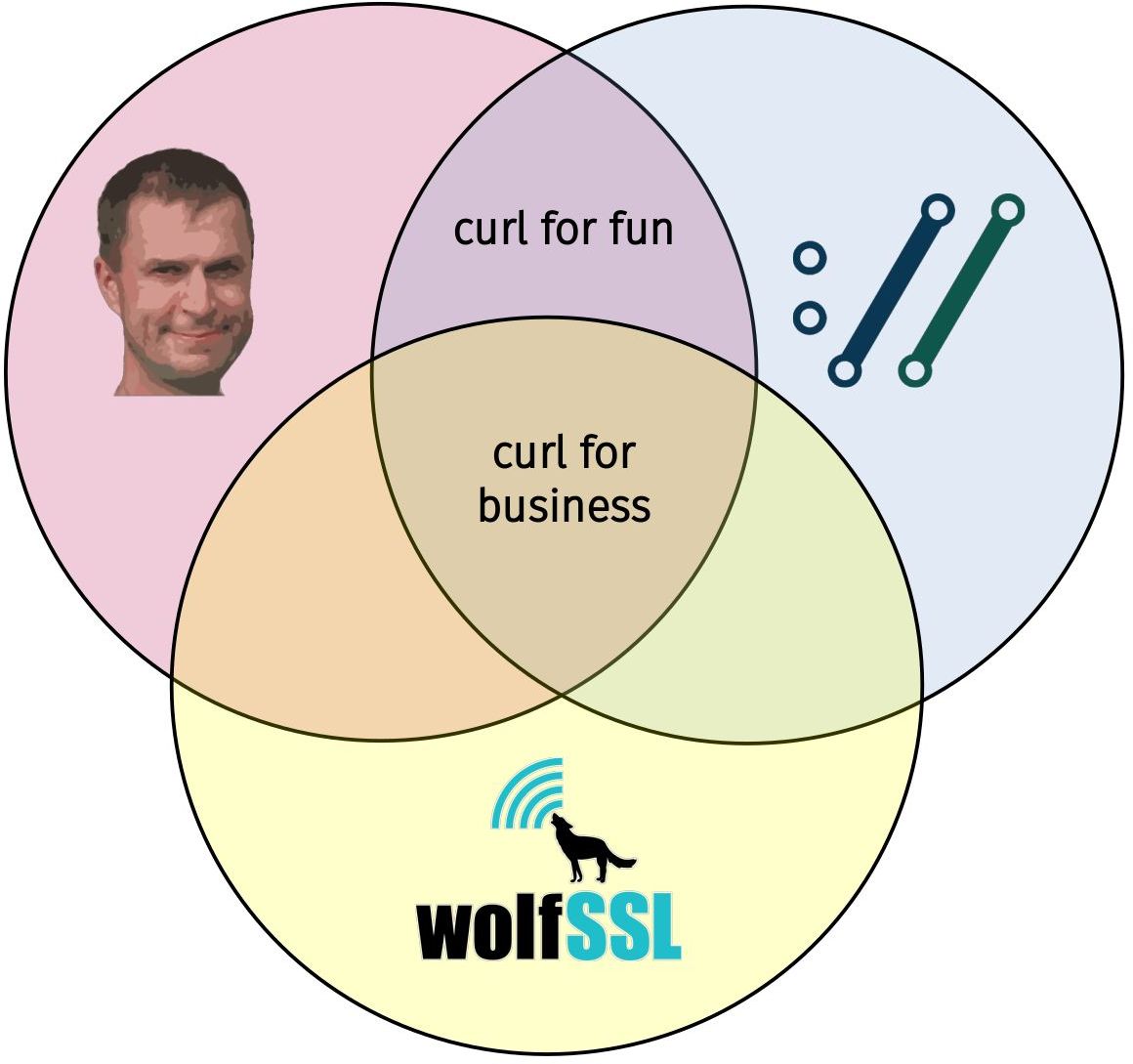

Keeping things separate

Maybe you don’t realize this, but I work hard to keep separate things compartmentalized. I am not curl, curl is not wolfSSL and wolfSSL is not me. But we all overlap greatly!

I work for wolfSSL. I work on curl. wolfSSL offers commercial curl support.

Reserved features

One idea that we haven’t explored much yet is the ability to make and offer “reserved features” to paying customers only. This of course as another motivation for companies to become curl support customers.

Such reserved features would still have to be sensible for the curl project and most likely we would provide them as specials for paying customers for a period of time and then merge them into the “real” open source curl project. It is very important to note that this will not in any way make the “regular curl” worse or a lesser citizen in any way. It would rather be a like a separate product, a curl+ with extra stuff on top of vanilla curl.

Since we haven’t ventured into this area yet, we haven’t worked out all the details. Chances are we will wander into this territory soon.

Other food-generators

I do occasional speaking gigs on curl and HTTP related topics but even if I charge for them this activity never brings much more than some extra pocket money. I do it because it’s fun and educational.

It has been suggested that I should create a web shop to sell curl branded merchandise in, like t-shirts, mugs, etc but I think that grossly over-estimates the user interest and how much margin I could put on mundane things just because they’d have a curl logo glued on them. Also, I would have a difficult time mentally to sell curl things and claim the profit personally. I rather keep giving away curl stash (mostly stickers) for free as a means to market the project and long term encourage users into buying support.

Donations

We receive money to the curl project through donations, most of them via our opencollective account. It is important to note that even if I’m a key figure in the project, this is not my money and it’s not my project. Donated money is spent on project related expenses, which so far primarily is our bug bounty program. We’ve avoided to spend donated money on direct curl development, and especially such that I could provide or benefit from myself, as that would totally blur the boundaries. I’m not ruling out taking that route in a future though. As long as and only if it is to the project’s benefit.

Donations via GitHub to me personally sponsors me personally and ends up in my pockets. That’s not curl money but I spend it mostly on curl development, equipment etc and it makes me able to not have to think twice when sending curl stickers to fans and friends all over the world. It contributes to food on my table and I like to think that an occasional beer I drink is sponsored by friends out there!

The future I dream of

We get a steady number of companies paying for support at a level that allows us to also pay for a few more curl engineers than myself.

Credits

Image by Khusen Rustamov from Pixabay

I think the highest risk scenario is when users download pre-built curl or libcurl binaries from various places on the internet that isn’t the official curl web site. How can you know for sure what you’re getting then, as you couldn’t review the code or changes done. You just put your trust in a remote person or organization to do what’s right for you.

I think the highest risk scenario is when users download pre-built curl or libcurl binaries from various places on the internet that isn’t the official curl web site. How can you know for sure what you’re getting then, as you couldn’t review the code or changes done. You just put your trust in a remote person or organization to do what’s right for you. Some people argue that projects could or should pledge for every release that there’s no deliberate backdoor planted so that if the day comes in the future when a three-letter secret organization forces us to insert a backdoor, the lack of such a pledge for the subsequent release would function as an alarm signal to people that something is wrong.

Some people argue that projects could or should pledge for every release that there’s no deliberate backdoor planted so that if the day comes in the future when a three-letter secret organization forces us to insert a backdoor, the lack of such a pledge for the subsequent release would function as an alarm signal to people that something is wrong.