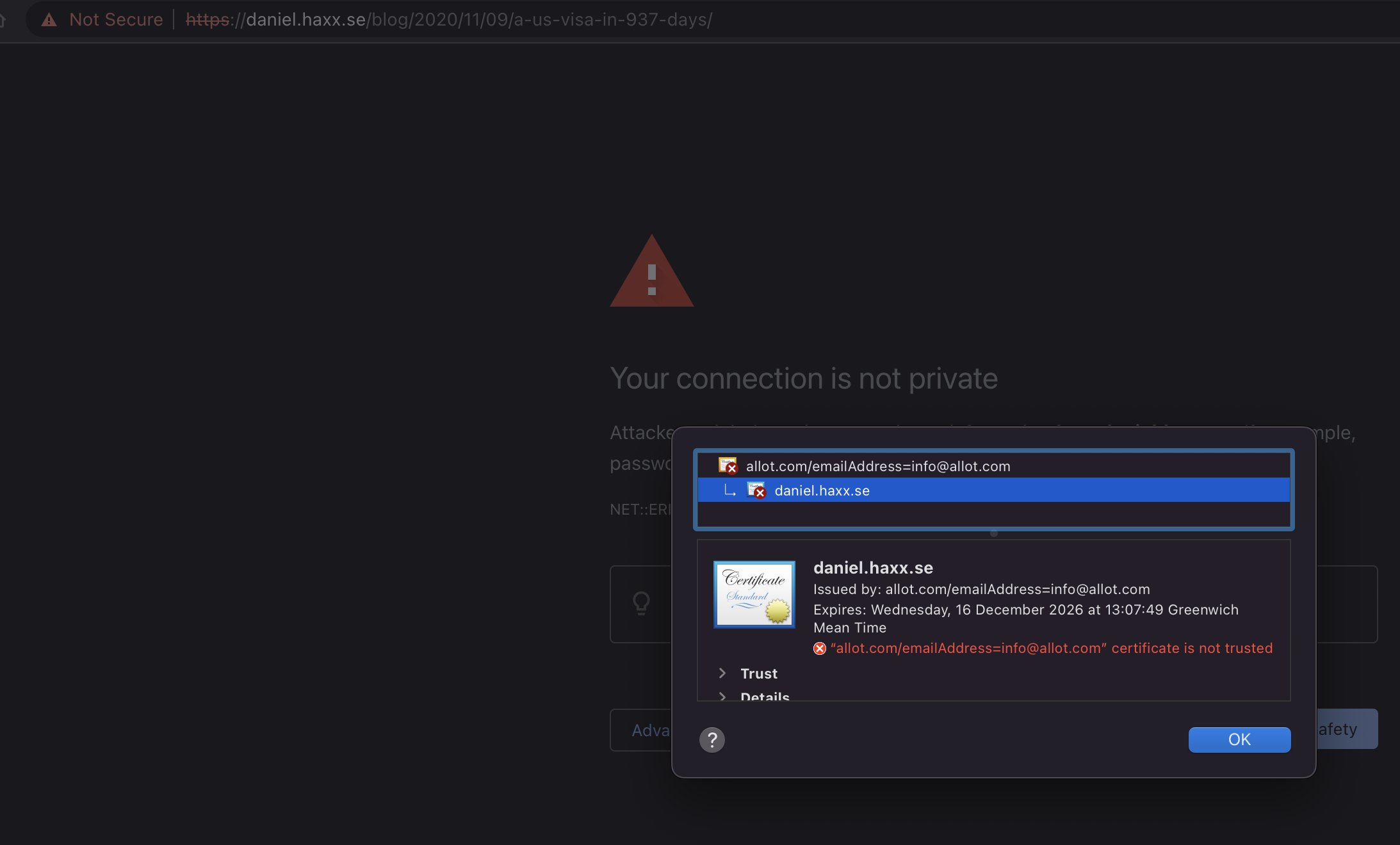

The .netrc file is used to hold user names and passwords for specific host names and allows tools to login to those systems automatically without having to prompt the user for the credentials while avoiding having to use them in command lines. The .netrc file is typically set without group or world read permissions (0600) to reduce the risk of leaking those secrets.

History

Allegedly, the .netrc file format was invented and first used for Berknet in 1978 and it has been used continuously since by various tools and libraries. Incidentally, this was the same year Intel introduced the 8086 and DNS didn’t exist yet.

.netrc has been supported by curl (since the summer of 1998), wget, fetchmail, and a busload of other tools and networking libraries for decades. In many cases it is the only cross-tool way to provide credentials to remote systems.

The .netrc file use is perhaps most widely known from the “standard” ftp command line client. I remember learning to use this file when I wanted to do automatic transfers without any user interaction using the ftp command line tool on unix systems in the early 1990s.

Example

A .netrc file where we tell the tool to use the user name daniel and password 123456 for the host user.example.com is as simple as this:

machine user.example.com login daniel password 123456

Those different instructions can also be written on the same single line, they don’t need to be separated by newlines like above.

Specification

There is no and has never been any standard or specification for the file format. If you google .netrc now, the best you get is a few different takes on man pages describing the format in a high level. In general this covers our needs and for most simple use cases this is good enough, but as always the devil is in the details.

The lack of detailed descriptions on how long lines or fields to accept, how to handle special character or white space for example have left the implementers of the different code basis to decide by themselves how to handle those things.

The horse left the barn

Since numerous different implementations have been done and have been running in systems for several decades already, it might be too late to do a spec now.

This is also why you will find man pages out there with conflicting information about the support for space in passwords for example. Some of them explicitly say that the file format does not support space in passwords.

Passwords

Most fields in the .netrc work fine even when not supporting special characters or white space, but in this age we have hopefully learned that we need long and complicated passwords and thus having “special characters” in there is now probably more common than back in the 1970s.

Writing a .netrc file with for example a double-quote or a white space in the password unfortunately breaks tools and is not portable.

I have found at least three different ways existing tools do the parsing, and they are all incompatible with each other.

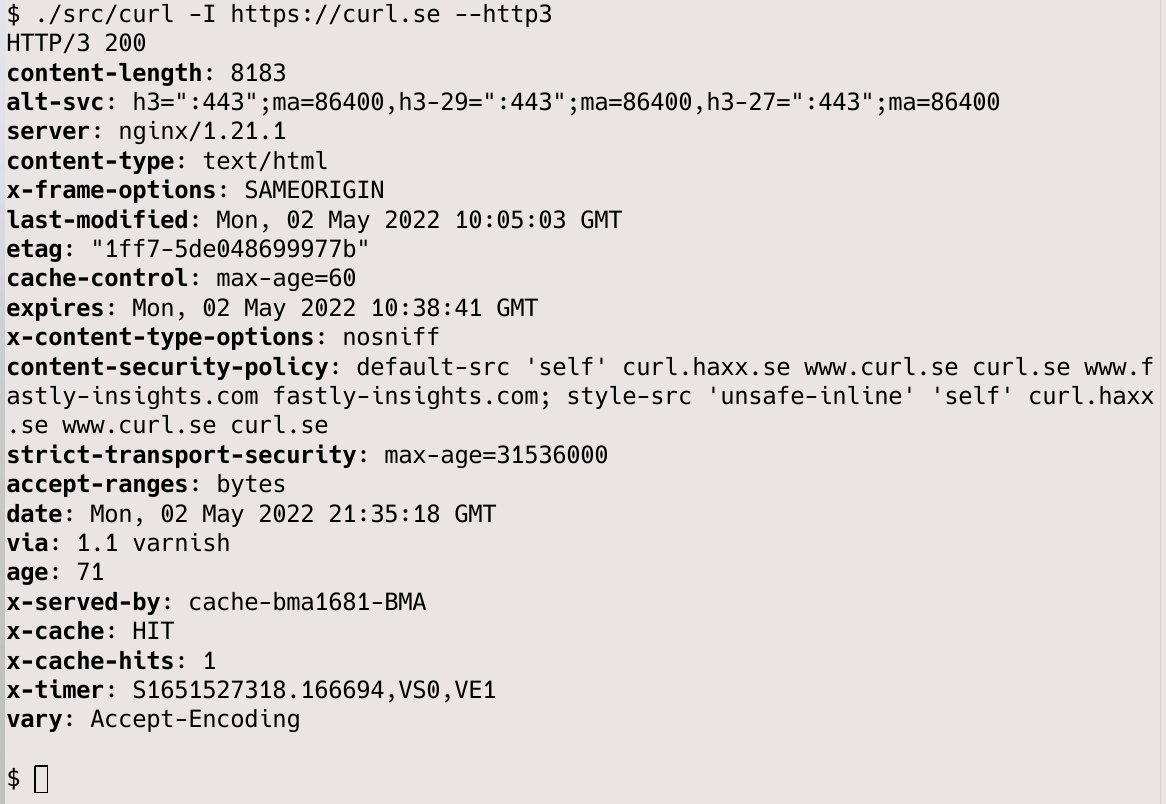

curl parser (before 7.84.0)

curl did not support spaces in passwords, period. The parser split all fields at the following space or newline and accepted whatever is in between. curl thus supported any characters you want, except space and newlines . It also did not “unquote” anything so if you wanted to provide a password like ""llo (with two leading double-quotes), you would use those five bytes verbatim in the file.

wget parser

This parser allows a space in the password if you provide it quoted within double-quotes and use a backslash in front of the space. To specify the same ""llo password mentioned above, you would have to write it as "\"\"llo".

fetchmail parser

Also supports spaces in passwords. Here the double-quote is a quote character itself so in order to provide a verbatim double-quote, it needs to be doubled. To specify the same ""llo password mentioned above, you would have to write it as """"llo – that is with four double-quotes.

What is the best way?

Changing any of these parsers in an effort to unify risk breaking existing use cases and scripts out in the wild with outraged users as a result. But a change could also generate a few happy users too who then could better share the same .netrc file between tools.

In my personal view, the wget parser approach seems to be the most user friendly one that works perhaps most closely to what I as a user would expect. So that’s how I went ahead and made curl work.

What to do

Users will of course be stuck with ancient versions for a long time and this incompatibility situation will remain for the foreseeable future. I can think of a few work-arounds users can do to cope:

- Avoid space, tabs, newline and various quotes in passwords

- Use separate .netrc files for separate tools

- Provide passwords using other means than .netrc – with curl you can for example explore using –config instead

Future curl supports quoting

We are changing the curl parser somewhat in the name of compatibility with other tools (read wget) and curl will allow quoted strings in the way wget does it, starting in curl 7.84.0. While this change risks breaking a few command lines out there (for users who have leading double-quotes in their existing passwords), I think the change is worth doing in the name of compatibility and the new ability to use spaces in passwords.

A little polish after twenty-four years of not supporting spaces in user names or passwords.

Hopefully this will not hurt too many users.

Credits

Image by Anja-#pray for ukraine# #helping hands# stop the war from Pixabay