This is not news. This is only facts that seem to still be unknown to many people so I just want to help out documenting this to help educate the world. I’ll dance around the subject first a bit by providing the full background info…

round robin basics

Round robin DNS has been the way since a long time back to get some rough and cheap load-balancing and spreading out visitors over multiple hosts when they try to use a single host/service with static content. By setting up an A entry in a DNS zone to resolve to multiple IP addresses, clients would get different results in a semi-random manner and thus hitting different servers at different times:

server IN A 192.168.0.1

server IN A 10.0.0.1

server IN A 127.0.0.1

For example, if you’re a small open source project it makes a perfect way to feature a distributed service that appears with a single name but is hosted by multiple distributed independent servers across the Internet. It is also used by high profile web servers, like for example www.google.com and www.yahoo.com.

host name resolving

If you’re an old-school hacker, if you learned to do socket and TCP/IP programming from the original Stevens’ books and if you were brought up on BSD unix you learned that you resolve host names with gethostbyname() and friends. This is a POSIX and single unix specification that’s been around since basically forever. When calling gethostbyname() on a given round robin host name, the function returns an array of addresses. That list of addresses will be in a seemingly random order. If an application just iterates over the list and connects to them in the order as received, the round robin concept works perfectly well.

but gethostbyname wasn’t good enough

gethostbyname() is really IPv4-focused. The mere whisper of IPv6 makes it break down and cry. It had to be replaced by something better. Enter getaddrinfo() also POSIX (and defined in RFC 3943 and again updated in RFC 5014). This is the modern function that supports IPv6 and more. It is the shiny thing the world needed!

not a drop-in replacement

So the (good parts of the) world replaced all calls to gethostbyname() with calls to getaddrinfo() and everything now supported IPv6 and things were all dandy and fine? Not exactly. Because there were subtleties involved. Like in which order these functions return addresses. In 2003 the IETF guys had shipped RFC 3484 detailing Default Address Selection for Internet Protocol version 6, and using that as guideline most (all?) implementations were now changed to return the list of addresses in that order. It would then become a list of hosts in “preferred” order. Suddenly applications would iterate over both IPv4 and IPv6 addresses and do it in an order that would be clever from an IPv6 upgrade-path perspective.

no round robin with getaddrinfo

So, back to the good old way to do round robin DNS: multiple addresses (be it IPv4 or IPv6 or both). With the new ideas of how to return addresses this load balancing way no longer works. Now getaddrinfo() returns basically the same order in every invoke. I noticed this back in 2005 and posted a question on the glibc hackers mailinglist: http://www.cygwin.com/ml/libc-alpha/2005-11/msg00028.html As you can see, my question was delightfully ignored and nobody ever responded. The order seems to be dictated mostly by the above mentioned RFCs and the local /etc/gai.conf file, but neither is helpful if getting decent round robin is your aim. Others have noticed this flaw as well and some have fought compassionately arguing that this is a bad thing, while of course there’s an opposite side with people claiming it is the right behavior and that doing round robin DNS like this was a bad idea to start with anyway. The impact on a large amount of common utilities is simply that when they go IPv6-enabled, they also at the same time go round-robin-DNS disabled.

no decent fix

Since getaddrinfo() now has worked like this for almost a decade, we can forget about “fixing” it. Since gai.conf needs local edits to provide a different function response it is not an answer. But perhaps worse is, since getaddrinfo() is now made to return the addresses in a sort of order of preference it is hard to “glue on” a layer on top that simple shuffles the returned results. Such a shuffle would need to take IP versions and more into account. And it would become application-specific and thus would have to be applied to one program at a time. The popular browsers seem less affected by this getaddrinfo drawback. My guess is that because they’ve already worked on making asynchronous name resolves so that name resolving doesn’t lock up their processes, they have taken different approaches and thus have their own code for this. In curl’s case, it can be built with c-ares as a resolver backend even when supporting IPv6, and c-ares does not offer the sort feature of getaddrinfo and thus in these cases curl will work with round robin DNSes much more like it did when it used gethostbyname.

alternatives

The downside with all alternatives I’m aware of is that they aren’t just taking advantage of plain DNS. In order to duck for the problems I’ve mentioned, you can instead tweak your DNS server to respond differently to different users. That way you can either just randomly respond different addresses in a round robin fashion, or you can try to make it more clever by things such as PowerDNS’s geobackend feature. Of course we all know that A) geoip is crude and often wrong and B) your real-world geography does not match your network topology.

happy eyeballs

During this period, another connection related issue has surfaced. The fact that IPv6 connections are often handled as a second option in dual-stacked machines, and the fact is that IPv6 is mostly present in dual stacks these days. This sadly punishes early adopters of IPv6 (yes, they unfortunately IPv6 must still be considered early) since those services will then be slower than the older IPv4-only ones.

There seems to be a general consensus on what the way to overcome this problem is: the Happy Eyeballs approach. In short (and simplified) it recommends that we try both (or all) options at once, and the fastest to respond wins and gets to be used. This requires that we resolve A and AAAA names at once, and if we get responses to both, we connect() to both the IPv4 and IPv6 addresses and see which one is the fastest to connect.

This of course is not just a matter of replacing a function or two anymore. To implement this approach you need to do something completely new. Like for example just doing getaddrinfo() + looping over addresses and try connect() won’t at all work. You would basically either start two threads and do the IPv4-only route in one and do the IPv6 route in the other, or you would have to issue non-blocking resolver calls to do A and AAAA resolves in parallel in the same thread and when the first response arrives you fire off a non-blocking connect() …

My point being that introducing Happy Eyeballs in your good old socket app will require some rather major remodeling no matter what. Doing this will most likely also affect how your application handles with round robin DNS so now you have a chance to reconsider your choices and code!

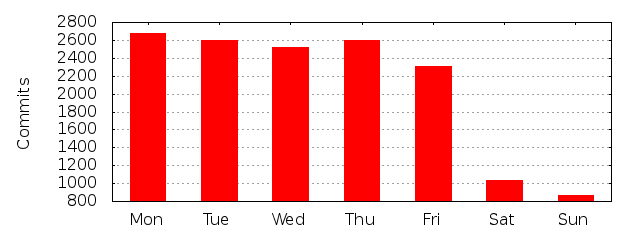

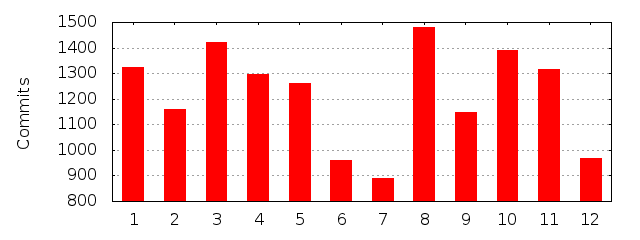

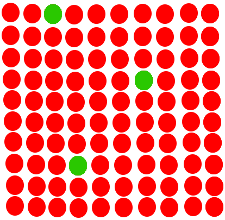

I’ve been an open source and free software hacker, contributor and maintainer for almost 20 years. I’m the perfect stereo-type too: a white, hetero, 40+ years old male living in a suburb of a west European city. (I just lack a beard.) I’ve done more than 20,000 commits in public open source code repositories. In the projects I maintain, and have a leading role in, and for the sake of this argument I’ll limit the discussion to

I’ve been an open source and free software hacker, contributor and maintainer for almost 20 years. I’m the perfect stereo-type too: a white, hetero, 40+ years old male living in a suburb of a west European city. (I just lack a beard.) I’ve done more than 20,000 commits in public open source code repositories. In the projects I maintain, and have a leading role in, and for the sake of this argument I’ll limit the discussion to