Together with Bountygraph, the curl project now offers money to security researchers for report security vulnerabilities to us.

https://bountygraph.com/programs/curl

The idea is that sponsors donate money to the bounty fund, and we will use that fund to hand out rewards for reported issues. It is a way for the curl project to help compensate researchers for the time and effort they spend helping us improving our security.

Right now the bounty fund is very small as we just started this project, but hopefully we can get a few sponsors interested and soon offer “proper” rewards at decent levels in case serious flaws are detected and reported here.

If you’re a company using curl or libcurl and value security, you know what you can do…

Already before, people who reported security problems could ask for money from Hackerone’s IBB program, and this new program is in addition to that – even though you won’t be able to receive money from both bounties for the same issue.

After I announced this program on twitter yesterday, I did an interview with Arif Khan for latesthackingnews.com. Here’s what I had to say:

A few questions

Q: You have launched a self-managed bug bounty program for the first time. Earlier, IBB used to pay out for most security issues in libcurl. How do you think the idea of self-management of a bug bounty program, which has some obvious problems such as active funding might eventually succeed?

First, this bounty program is run on bountygraph.com so I wouldn’t call it “self-managed” since we’re standing on a lot of infra setup and handled by others.

To me, this is an attempt to make a bounty program that is more visible as clearly a curl bounty program. I love Hackerone and the IBB program for what they offer, but it is A) very generic, so the fact that you can get money for curl flaws there is not easy to figure out and there’s no obvious way for companies to sponsor curl security research and B) they are very picky to which flaws they pay money for (“only critical flaws”) and I hope this program can be a little more accommodating – assuming we get sponsors of course.

Will it work and make any differences compared to IBB? I don’t know. We will just have to see how it plays out.

Q: How do you think the crowdsourcing model is going to help this bug bounty program?

It’s crucial. If nobody sponsors this program, there will be no money to do payouts with and without payouts there are no bounties. Then I’d call the curl bounty program a failure. But we’re also not in a hurry. We can give this some time to see how it works out.

My hope is though that because curl is such a widely used component, we will get sponsors interested in helping out.

Q: What would be the maximum reward for most critical a.k.a. P0 security vulnerabilities for this program?

Right now we have a total of 500 USD to hand out. If you report a p0 bug now, I suppose you’ll get that. If we just get sponsors, I’m hoping we should be able to raise that reward level significantly. I might be very naive, but I think we won’t have to pay for very many critical flaws.

It goes back to the previous question: this model will only work if we get sponsors.

Q: Do you feel there’s a risk that bounty hunters could turn malicious?

I don’t think this bounty program particularly increases or reduces that risk to any significant degree. Malicious hunters probably already exist and I would assume that blackhat researchers might be able to extract more money on the less righteous markets if they’re so inclined. I don’t think we can “outbid” such buyers with this program.

Q: How will this new program mutually benefit security researchers as well as the open source community around curl as a whole?

Again, assuming that this works out…

Researchers can get compensated for the time and efforts they spend helping the curl project to produce and provide a more secure product to the world.

curl is used by virtually every connected device in the world in one way or another, affecting every human in the connected world on a daily basis. By making sure curl is secure we keep users safe; users of countless devices, applications and networked infrastructure.

Update: just hours after this blog post, Dropbox chipped in 32,768 USD to the curl bounty fund…

It’s been

It’s been  I had already before I joined the Firefox development understood some of the challenges of making a browser in the modern era, but that understanding has now been properly enriched with lots of hands-on and code-digging in sometimes decades-old messy C++, a spaghetti armada of threads and the wild wild west of users on the Internet.

I had already before I joined the Firefox development understood some of the challenges of making a browser in the modern era, but that understanding has now been properly enriched with lots of hands-on and code-digging in sometimes decades-old messy C++, a spaghetti armada of threads and the wild wild west of users on the Internet. I had worked on curl for a very long time already before joining Mozilla and I expect to keep doing curl and other open source things even going forward. I don’t think my choice of future employer should have to affect that negatively too much, except of course in periods.

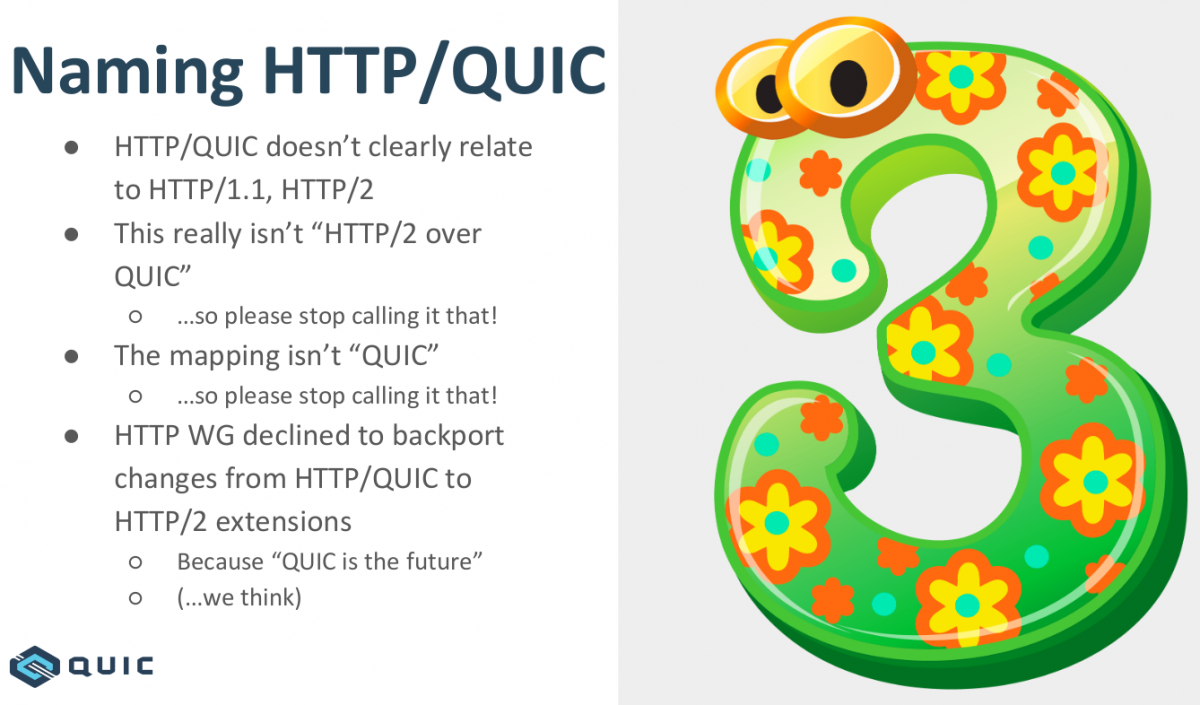

I had worked on curl for a very long time already before joining Mozilla and I expect to keep doing curl and other open source things even going forward. I don’t think my choice of future employer should have to affect that negatively too much, except of course in periods. I was involved in the IETF HTTPbis working group for many years before I joined Mozilla (for over ten years now!) and I hope to be involved for many years still. I still have a lot of things I want to do with curl and to keep curl the champion of its class I need to stay on top of the game.

I was involved in the IETF HTTPbis working group for many years before I joined Mozilla (for over ten years now!) and I hope to be involved for many years still. I still have a lot of things I want to do with curl and to keep curl the champion of its class I need to stay on top of the game.