I got myself a new 27″ 4K screen for my work setup, a Dell P2715Q, and replaced one of my old trusty twenty-four inch friends with it.

I now use the Thinkpad 13″ on the left as my video conference machine (it does nothing else, and it runs Windows!). The two middle screens, a 24″ and the new 27″, are connected to my primary dev machine, while the rightmost thing is my laptop for when I need to move.

Did everything run smoothly? Heck no.

When I first plugged in the 4K screen without changing anything else in the setup, it was immediately obvious that I needed to upgrade my graphics card. It didn’t have the muscle to drive the screen at 4K, so the screen instead upscaled a 1920×1200 image in a slightly blurry fashion. I couldn’t have that!

New graphics card

So when I was out and about later that day, I more or less accidentally passed a Webhallen store and got myself a new card. I wanted to play it easy, so I stayed with an AMD graphics chip and went with the ASUS Dual-Rx460-O2G. The key feature I wanted was the ability to drive one 4K screen and one at 1920×1200, and for that I unfortunately had to give up on the cards with only passive cooling and instead pick what sounds like a gaming card. (I hate shopping for graphics cards.)

Since I was about to do surgery on the machine anyway, I checked and noticed that I could add more memory to the motherboard, so I bought 16 GB more for a total of 32 GB.

Blow some fuses

Later that night, when the house was quiet and dark, I shut down my machine, inserted the new card and the new memory DIMMs, and powered it back up again.

At least that was the plan. When I switched it back on, it said click, all the lamps around me went dark, and the machine didn’t light up at all. The fuse was blown! Man, wasn’t that totally unexpected?

I did some further research into what exactly caused the fuse to blow, and blew a few more in the process: even after I had put the old card back and removed the new memory DIMMs, it still blew the fuse. Puzzled and slightly disappointed, I went to bed once I had no more spare fuses.

I hate leaving the machine dead in parts on the floor with an uncertain future, but what could I do?

A new PSU

On Tuesday morning I went and got myself a replacement PSU (Plexgear PS-600 Bronze), and once I had that installed no more fuses blew and I could start the machine again!

I put the new memory back in, and I could get into the BIOS config with both screens working on the new card (and it detected the 32 GB of RAM just fine). But as soon as I tried to boot Linux, the boot process stopped after just 3-4 seconds and seemed to freeze. Hm, I tried a few different kernels, safe mode, etc., but they all behaved the same way. Weird!

BIOS update

A little googling on the messages that appeared just before it froze gave me the idea that maybe I should check whether there was a BIOS update available. After all, I had never upgraded it, and it had been a while since I got my motherboard (more than 4 years).

I found a much newer BIOS image on the ASUS support site, put it on a FAT-formatted USB drive, and upgraded.

Now it booted. Of course the error messages I had googled for are still present, and I suppose they were there before too; I just hadn’t paid any attention to them when everything was working dandy!

DisplayPort vs HDMI

I had the mistaken idea that I should use DisplayPort to get 4K working, but it just wouldn’t work. With DP + DVI, only one screen showed anything. I even went as far as downloading an Ubuntu Linux driver package for the Radeon RX460 that I found, but of course it failed miserably, since my Debian Unstable runs a totally different kernel and what not.

In a slightly desperate move (I had now wasted quite a few hours on this and my machine still wasn’t working), I put the old graphics card (with DVI + HDMI) back in, only to notice that it no longer worked like it used to (the DVI screen no longer got the correct resolution). Presumably the BIOS upgrade or something upset the balance?

Back with the new card, I booted with DVI + HDMI, leaving DP out entirely, and now suddenly both screens worked!

HiDPI + LoDPI

Once I had logged in, I could configure the 4K screen to show in its full 3840×2160 glory. I was back.

Now I only had to start fiddling with getting the two screens to somehow co-exist next to each other, which is a challenge in its own right. The large difference in DPI makes it hard to find one config that works across both screens. For example, I usually have terminals on both screens: which font size should I use? And I put browser windows on both screens…
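To put a rough number on that DPI gap, here is a small back-of-the-envelope sketch in Python. It assumes the nominal 27″ and 24″ diagonals and square pixels, and the helper name `ppi` is made up for illustration. Pixel density is simply the panel diagonal measured in pixels divided by the diagonal in inches:

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch, computed along the panel diagonal (assumes square pixels)."""
    return math.hypot(width_px, height_px) / diagonal_in


# Resolutions from the setup above; diagonals are the nominal marketing sizes.
dell_4k = ppi(3840, 2160, 27)   # the new 27-inch 4K screen
old_24 = ppi(1920, 1200, 24)    # the remaining 24-inch 1920x1200 screen

print(f"27-inch 4K:        {dell_4k:.0f} PPI")
print(f"24-inch 1920x1200: {old_24:.0f} PPI")
print(f"density ratio:     {dell_4k / old_24:.2f}")
```

Under these assumptions the 4K screen lands at roughly 163 PPI against roughly 94 PPI for the 24″ one, a ratio of about 1.73. Text drawn at the same pixel size therefore comes out about 1.7 times smaller on the 4K screen, which is why a single font setting doesn’t suit both.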

So far I’ve settled on increasing the font DPI in KDE, and I use two different terminal profiles depending on which screen I put the terminal on. It seems to work okayish. Some texts on the 4K screen are still terribly small, so I guess it is good that I still have good eyesight!

24 + 27

So is it comfortable to combine a 24″ with a 27″? Sure, the size difference really isn’t that noticeable. The 27″ one is just a few centimeters taller, and the difference in width isn’t an inconvenience. The photo below shows how similar they look, size-wise:

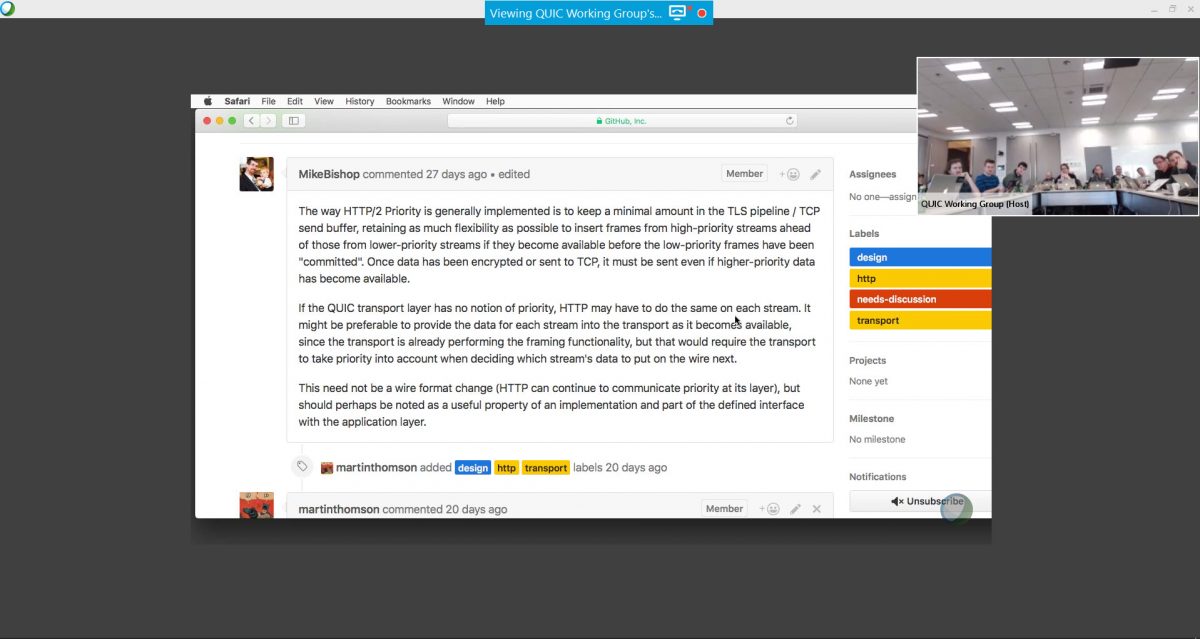

QUIC is primarily designed to send and receive HTTP/2 frames and entire streams over UDP (not only, but this is where the bulk of the work has been put in so far). Sure, TLS encrypted and everything, but my point here is that it is being designed to transfer
